Topic Overview

This topic covers the infrastructure and tooling used to run large language models and other AI workloads efficiently across chips and servers, from on-device inference to cloud and on-prem inference server platforms. As of 2026-04-28, demand for low-latency, cost-efficient inference and secure execution has pushed architectures toward heterogeneous accelerators (GPUs, NPUs, and edge inference chips), hybrid on-device/cloud deployments, and more standardized runtime integrations. Key patterns include on-device LLM inference for privacy and latency, inference server platforms that pool accelerator resources, and Model Context Protocol (MCP) deployment tooling that connects LLMs to operational systems.

Representative tools: Daytona provides secure, isolated sandboxes for executing AI-generated code; Minima offers an on-prem RAG stack for local retrieval and LLM hosting; mcp-memory-service supplies a production-ready hybrid semantic memory store; and MCP servers for Pinecone, Google Cloud Run, Cloudflare, and AWS expose vector databases, serverless hosts, edge platforms, and cloud services through a common interface. Kubernetes MCP integrations let teams manage pods, deployments, and services consistently across clusters and edge nodes.

Together these components address four practical needs: secure execution of generated code, local-first RAG workflows, persistent and synchronized assistant memory, and deployment portability across cloud, edge, and on-prem hardware. Operational priorities in 2026 emphasize predictable latency, cost control, security boundaries, and interoperability, which makes MCP standards and Kubernetes integrations important levers for productionizing inference on diverse accelerators and server platforms.
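To make the "common interface" idea concrete, here is a minimal sketch of the JSON-RPC 2.0 messages an MCP client sends to discover and invoke tools on a server. The `tools/list` and `tools/call` method names come from the MCP specification; the tool names and arguments (`list_pods`, `namespace`, `deploy_service`, `image`) are hypothetical and are not taken from any of the servers listed on this page. The sketch only builds the messages and does not open a transport or talk to a real server.

```python
import json
from itertools import count

# Monotonic request ids; JSON-RPC uses the id to match responses to requests.
_ids = count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object, the envelope MCP messages use."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def list_tools_request():
    """Ask a server which tools it exposes."""
    return jsonrpc_request("tools/list")


def call_tool_request(name, arguments):
    """Invoke one tool by name with a dictionary of arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    # Hypothetical tool names: a real server advertises its own via tools/list.
    requests = [
        list_tools_request(),
        call_tool_request("list_pods", {"namespace": "default"}),
        call_tool_request("deploy_service", {"image": "example/app:latest"}),
    ]
    for req in requests:
        print(json.dumps(req))
```

Because every server accepts the same envelope, a client can target a Kubernetes, vector database, or cloud deployment server by changing only the tool name and arguments, which is the portability the overview describes.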

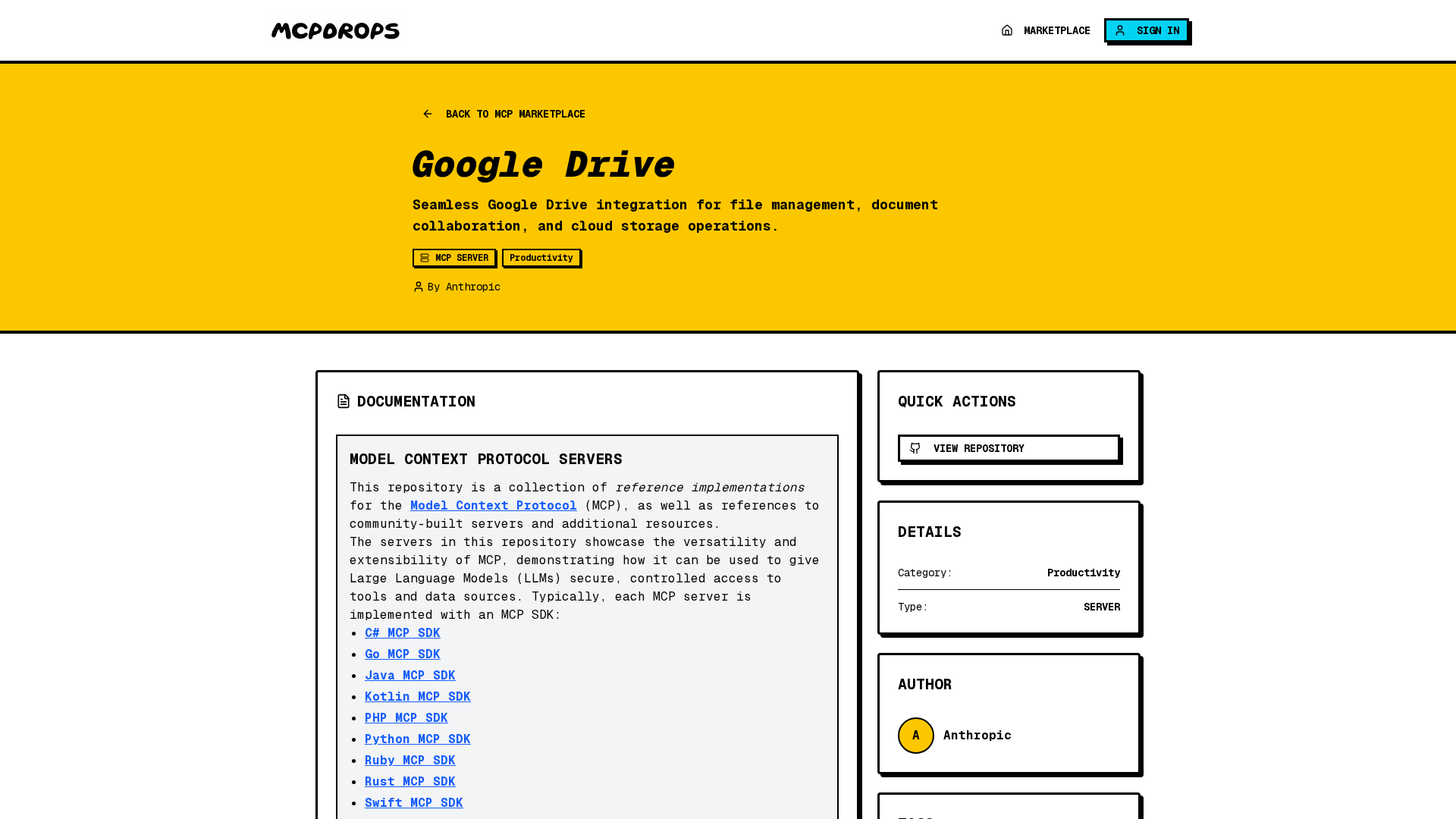

MCP Server Rankings – Top 8

Fast and secure execution of your AI-generated code with Daytona sandboxes.

Connect to Kubernetes cluster and manage pods, deployments, and services.

MCP server that connects AI tools with Pinecone projects and documentation.

MCP server for RAG on local files.

Production-ready MCP memory service with zero locks, hybrid backend, and semantic memory search.

Deploy code to Google Cloud Run.

Deploy, configure & interrogate your resources on the Cloudflare developer platform (e.g. Workers/KV/R2/D1)

Perform operations on your AWS resources using an LLM.