Topic Overview

AI cybersecurity and model protection covers the policies, controls and platforms used to prevent data leakage, protect intellectual property, detect misuse and manage third-party risk across the model lifecycle. The topic is timely: rapid adoption of generative and foundation models has expanded the attack surface (data exposure, model theft, prompt injection and supply-chain risk), and regulators and industry control frameworks increasingly require demonstrable governance and monitoring.

Tools in this space now span dedicated governance platforms, cloud ML stacks, open-model vendors and enterprise LLM providers. Monitaur is a sector-focused governance layer that centralizes policy, monitoring, model validation and vendor governance for highly regulated industries such as insurance. Cloud platforms such as Google's Vertex AI combine model discovery (Model Garden), training, fine-tuning, evaluation, deployment and runtime monitoring, making secure operations part of the managed ML stack. Mistral AI offers enterprise-oriented open and efficient foundation models, paired with a production platform that emphasizes privacy and governance controls. Cohere delivers private, customizable LLM services (embeddings, retrieval and generation) that support controlled inference, private fine-tuning and searchable embeddings for secure applications.

Across the AI Security Governance and AI Governance Tools categories, common capabilities include policy enforcement, model validation and testing, telemetry and drift detection, vendor risk management and privacy-preserving inference. Organizations should evaluate combinations of platform-level controls and specialized governance tools so that both technical protections and compliance workflows are covered. The current market trend is toward tighter integration between MLOps and security functions, with vendors offering built-in governance primitives to meet regulatory and operational requirements.
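Drift detection, one of the common capabilities named in the overview, is often implemented by comparing the distribution of a model input or score in production against a reference window. The sketch below computes the Population Stability Index (PSI), one widely used drift statistic. It is an illustrative assumption, not the method used by Monitaur, Vertex AI or any other vendor named here, and the function names (`quantile_edges`, `bin_fractions`, `psi`) are hypothetical. Bins are taken from baseline quantiles, and a small floor keeps empty bins from producing log(0).

```python
import math
import random
from bisect import bisect_right
from typing import List, Sequence


def quantile_edges(baseline: Sequence[float], n_bins: int = 10) -> List[float]:
    """Interior bin edges placed at (nearest-rank) quantiles of the baseline sample."""
    data = sorted(baseline)
    return [data[len(data) * k // n_bins] for k in range(1, n_bins)]


def bin_fractions(values: Sequence[float], edges: List[float], eps: float = 1e-6) -> List[float]:
    """Fraction of values falling in each bin, floored at eps to avoid log(0)."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(baseline: Sequence[float], current: Sequence[float], n_bins: int = 10) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    if not baseline or not current:
        raise ValueError("baseline and current samples must be non-empty")
    edges = quantile_edges(baseline, n_bins)
    expected = bin_fractions(baseline, edges)  # reference (e.g. training-time) distribution
    actual = bin_fractions(current, edges)     # production distribution
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


if __name__ == "__main__":
    rng = random.Random(0)
    baseline = [rng.gauss(0, 1) for _ in range(5000)]
    stable = [rng.gauss(0, 1) for _ in range(5000)]
    shifted = [rng.gauss(1, 1) for _ in range(5000)]  # mean moved by one std dev
    print(f"stable:  {psi(baseline, stable):.3f}")
    print(f"shifted: {psi(baseline, shifted):.3f}")
```

A frequently cited rule of thumb treats PSI below 0.1 as stable, 0.1 to 0.25 as a moderate shift and above 0.25 as a significant shift. These cut-offs are conventions rather than standards, so thresholds would normally be calibrated per feature before wiring them into alerting.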

Tool Rankings – Top 4

Monitaur: insurance-focused enterprise AI governance platform centralizing policy, monitoring, validation, vendor governance and …

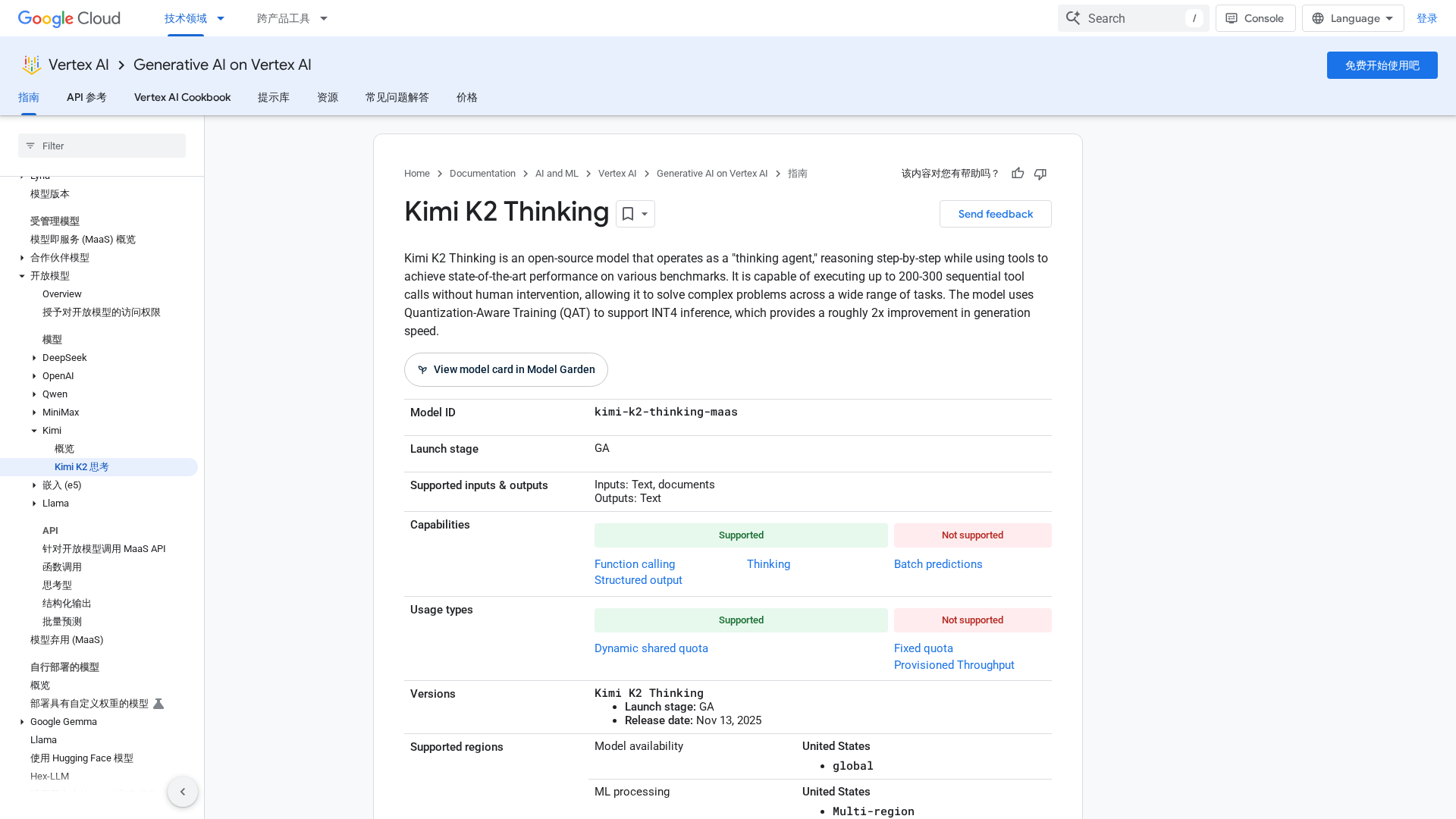

Google Vertex AI: unified, fully managed Google Cloud platform for building, training, deploying and monitoring ML and GenAI models.

Mistral AI: enterprise-focused provider of open and efficient models and an AI production platform emphasizing privacy, governance and …

Cohere: enterprise-focused LLM platform offering private, customizable models, embeddings, retrieval and search.

Latest Articles (28)

A practical, prompt-based playbook showing how Gemini 3 reshapes work, with a 90‑day plan and guardrails.

Cohere's blog covers AI news, insights and innovation, and includes a demo of its secure, private AI platform for boosting business productivity.

An open-source thinking agent on Vertex AI that performs long chain-of-thought reasoning with autonomous tool use and INT4-accelerated inference.

Cohere announces a HIPAA Business Associate Agreement (BAA) to enable secure custom AI model development for healthcare clients.

AI governance must be designed in from day one to ensure performance and mitigate risk; it is not a checkbox exercise.