Topic Overview

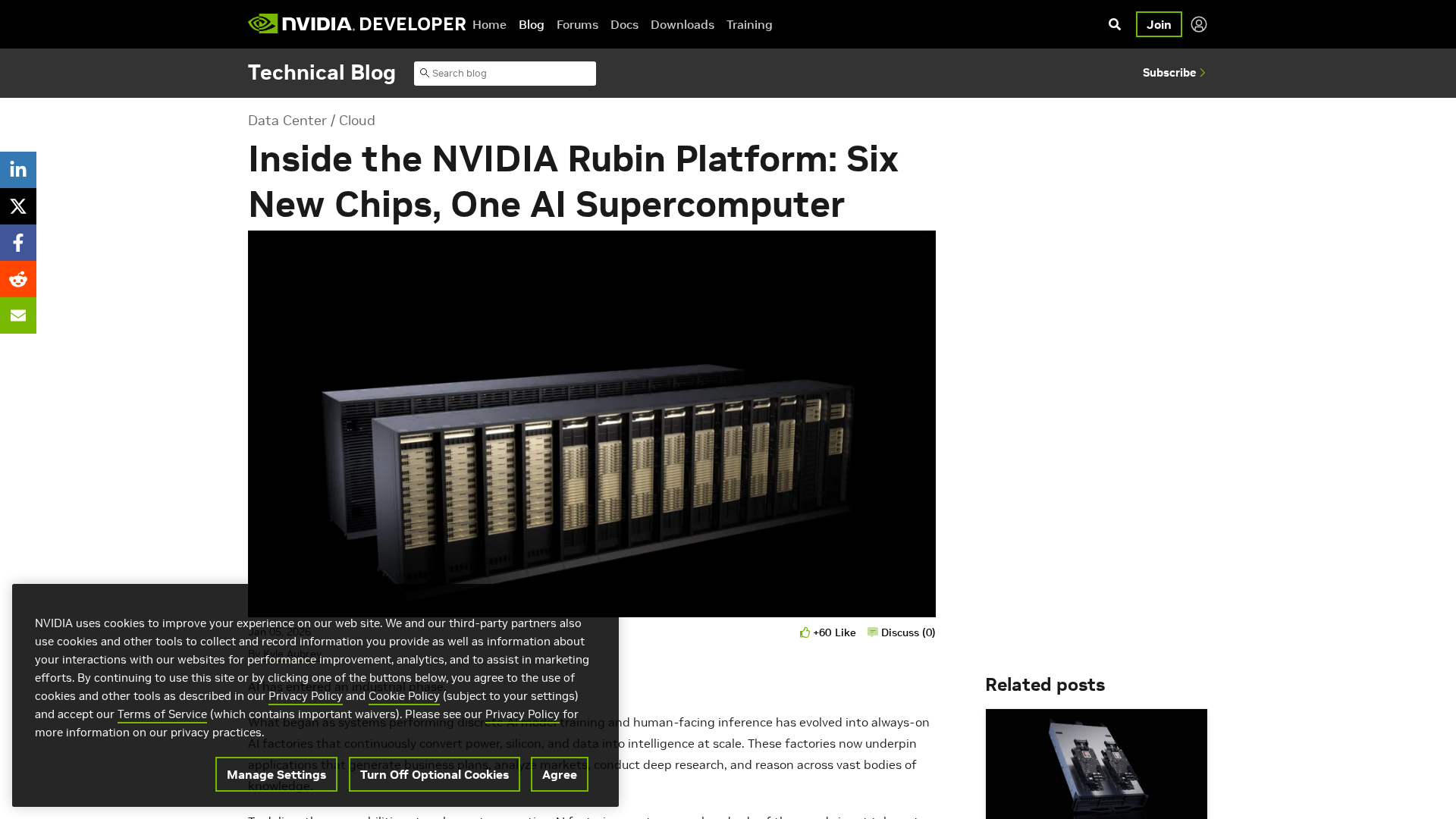

This topic examines the intersection of AI accelerator chips and data‑center infrastructure providers: how specialized silicon, cloud and on‑prem deployments, and vendor partnerships together determine the performance, cost and compliance profile of modern AI systems. Demand for large‑scale training and low‑latency inference has made accelerator efficiency, memory architecture and software stacks central to deployment decisions. Industry conversations increasingly focus on supplier alliances (for example, collaborations between hyperscalers and GPU vendors) and on chip designs such as Arm‑based CPU families paired with accelerator GPUs.

The topic's relevance (as of 2026‑02‑23) stems from continued growth in model size and multimodal applications, rising energy and data‑sovereignty pressures, and a drive toward more modular, interoperable stacks. These trends amplify the role of three categories: Decentralized AI Infrastructure (distributed training, edge inference and hybrid cloud fabrics), AI Data Platforms (data curation and pipeline tooling to prepare high‑quality training sets), and AI Security & Governance (policy, monitoring and vendor risk controls for regulated deployments).

Representative tools illustrate the stack. Google Cloud’s Vertex AI provides an end‑to‑end managed platform for model training and deployment. Google Gemini supplies multimodal model APIs used on those platforms. DatologyAI focuses on automated data curation, producing model‑ready datasets that reduce training cost and model size. Monitaur addresses enterprise governance needs by centralizing policy, monitoring and vendor validation. Together, these hardware, platform and governance layers show how chip choice and data‑center partnerships influence both technical outcomes and operational risk, which makes informed selection and integration of accelerators, software runtimes and governance tools essential for reliable, scalable AI deployments.

Tool Rankings – Top 4

Vertex AI: Unified, fully managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

Google Gemini: Google’s multimodal family of generative AI models and APIs for developers and enterprises.

Monitaur: Insurance-focused enterprise AI governance platform centralizing policy, monitoring, validation, and vendor governance.

DatologyAI: Data-curation-as-a-service to train models faster, better, and smaller.

Latest Articles (34)

Overview of the Gemini CLI v0.36.0-preview release series, highlighting architectural, CLI, and UI changelogs across multiple pre-release versions.

OpenAI rolls out global group chats in ChatGPT, supporting up to 20 participants in shared AI-powered conversations.

A detailed, use-case-driven comparison of Gemini 3 Pro and GPT-5.1 across context windows, multimodal capabilities, tooling, benchmarks, and pricing.

Google launches Gemini 3.0 with the Antigravity IDE, aiming to outpace Cursor 2.0 in AI-powered coding.

Google plans to extend AI Mode’s agentic bookings to hotel and flight reservations with major partners and user control.