Topic Overview

This topic covers AI systems that learn to perform complex computer and UI tasks by watching screen recordings or demonstration videos, exemplified by models like FDM-1 and a growing set of rivals. These video-to-action systems combine video-language understanding, imitation learning, and program synthesis to convert visual demonstrations into reproducible steps, scripts, or agent policies for automation, testing, and developer assistance. The topic is timely in 2026 because advances in multimodal foundation models, improved video datasets, and production-ready agent frameworks have made video-driven automation practical for many software workflows. Organizations are adopting these capabilities for GUI automation, end-to-end test generation, scripted onboarding workflows, and agentic developer tooling, while facing new challenges in evaluation, reliability, and safety.

Key tooling and categories: tools in the AI Automation Platforms and GenAI Test Automation categories use video-learned behaviors to drive UI workflows and generate tests. Qodo focuses on quality-first test generation and SDLC governance, while platform builders (LangChain, MindStudio) provide the engineering and low-code/no-code frameworks to compose, evaluate, and deploy agentic applications that operationalize video-derived policies. AI-native and online IDEs (Windsurf, Replit) and developer assistants (GitHub Copilot, Aider) are the integration points where video-to-code or video-to-action outputs enter the developer loop, enabling live previews, context-aware edits, and deployment.

Practical adoption hinges on rigorous test harnesses, reproducible benchmarks, privacy-aware training data, and mechanisms to validate robustness across UI variants. Teams evaluating FDM-1-style systems should weigh potential automation gains against the need for continuous evaluation, governance, and secure handling of sensitive screen content.
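The overview describes converting visual demonstrations into reproducible steps. The sketch below illustrates only the final stage of such a pipeline, under stated assumptions: it assumes an upstream video-language model (not shown, and not FDM-1's actual interface) has already turned recording frames into a trace of timestamped UI actions. The names `ObservedAction`, `Step`, `compile_steps`, and `to_script` are hypothetical and chosen for illustration only.

```python
from dataclasses import dataclass
from typing import List


# Hypothetical input: we ASSUME an upstream video model has already converted
# screen-recording frames into timestamped UI actions like these.
@dataclass
class ObservedAction:
    t: float          # seconds from the start of the recording
    kind: str         # "click", "key", or "scroll" (assumed vocabulary)
    target: str       # UI element label inferred from the frame, e.g. "Search"
    value: str = ""   # typed character for "key" events


# A reproducible step addressed by element label, not pixel coordinates.
@dataclass
class Step:
    op: str
    target: str
    text: str = ""


def compile_steps(actions: List[ObservedAction]) -> List[Step]:
    """Collapse a raw action trace into a short list of replayable steps."""
    steps: List[Step] = []
    for a in sorted(actions, key=lambda x: x.t):
        last = steps[-1] if steps else None
        if a.kind == "key":
            # Merge consecutive keystrokes into the same field into one "type" step.
            if last is not None and last.op == "type" and last.target == a.target:
                last.text += a.value
            else:
                steps.append(Step("type", a.target, a.value))
        elif a.kind == "click":
            # Skip an immediately repeated click on the same element
            # (e.g. the same click detected in two adjacent frames).
            if last is not None and last.op == "click" and last.target == a.target:
                continue
            steps.append(Step("click", a.target))
        # Other kinds (e.g. "scroll") are ignored in this sketch.
    return steps


def to_script(steps: List[Step]) -> str:
    """Render steps as a numbered plain-text script for review or replay."""
    lines = []
    for i, s in enumerate(steps, 1):
        if s.op == "type":
            lines.append(f'{i}. type "{s.text}" into [{s.target}]')
        else:
            lines.append(f"{i}. click [{s.target}]")
    return "\n".join(lines)


if __name__ == "__main__":
    trace = [
        ObservedAction(0.4, "click", "Search"),
        ObservedAction(0.5, "click", "Search"),
        ObservedAction(1.0, "key", "Search", "c"),
        ObservedAction(1.1, "key", "Search", "a"),
        ObservedAction(1.2, "key", "Search", "t"),
        ObservedAction(2.0, "click", "Go"),
    ]
    print(to_script(compile_steps(trace)))
    # 1. click [Search]
    # 2. type "cat" into [Search]
    # 3. click [Go]
```

Addressing steps by element label rather than screen coordinates is one simple way to make a replay less brittle across UI variants. The click deduplication is only a heuristic: it would also swallow an intentional double click, which is the kind of case a real test harness would need to validate.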

Tool Rankings – Top 6

Windsurf: AI-native IDE and agentic coding platform (Windsurf Editor) with Cascade agents, live previews, and multi-model support.

LangChain: Engineering platform and open-source frameworks to build, test, and deploy reliable AI agents.

MindStudio: No-code/low-code visual platform to design, test, deploy, and operate AI agents rapidly, with enterprise controls and a

Replit: AI-powered online IDE and platform to build, host, and ship apps quickly.

Aider: Open-source AI pair-programming tool that runs in your terminal and browser, pairing your codebase with LLM copilots to:

GitHub Copilot: An AI pair programmer that provides code completions, chat help, and autonomous agent workflows across editors, the terminal

Latest Articles (53)

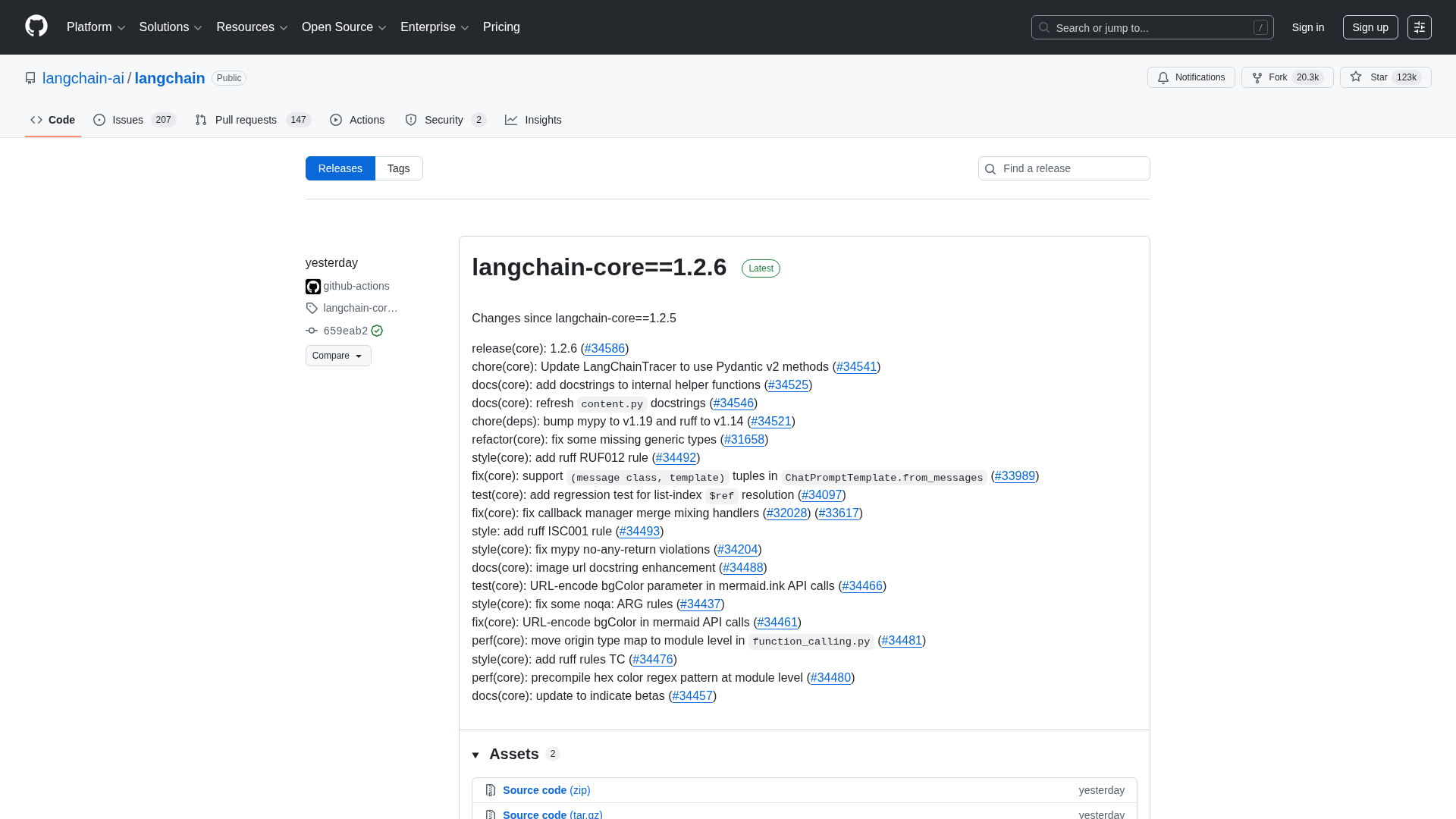

A comprehensive LangChain releases roundup detailing Core 1.2.6 and related updates to the XAI, OpenAI, Classic, and tests packages.

A step-by-step guide to building an AI-powered Reliability Guardian that reviews code locally and in CI with Qodo Command.

A developer chronicles switching to Zed on Linux, prototyping on a phone, and a late-night video correction.

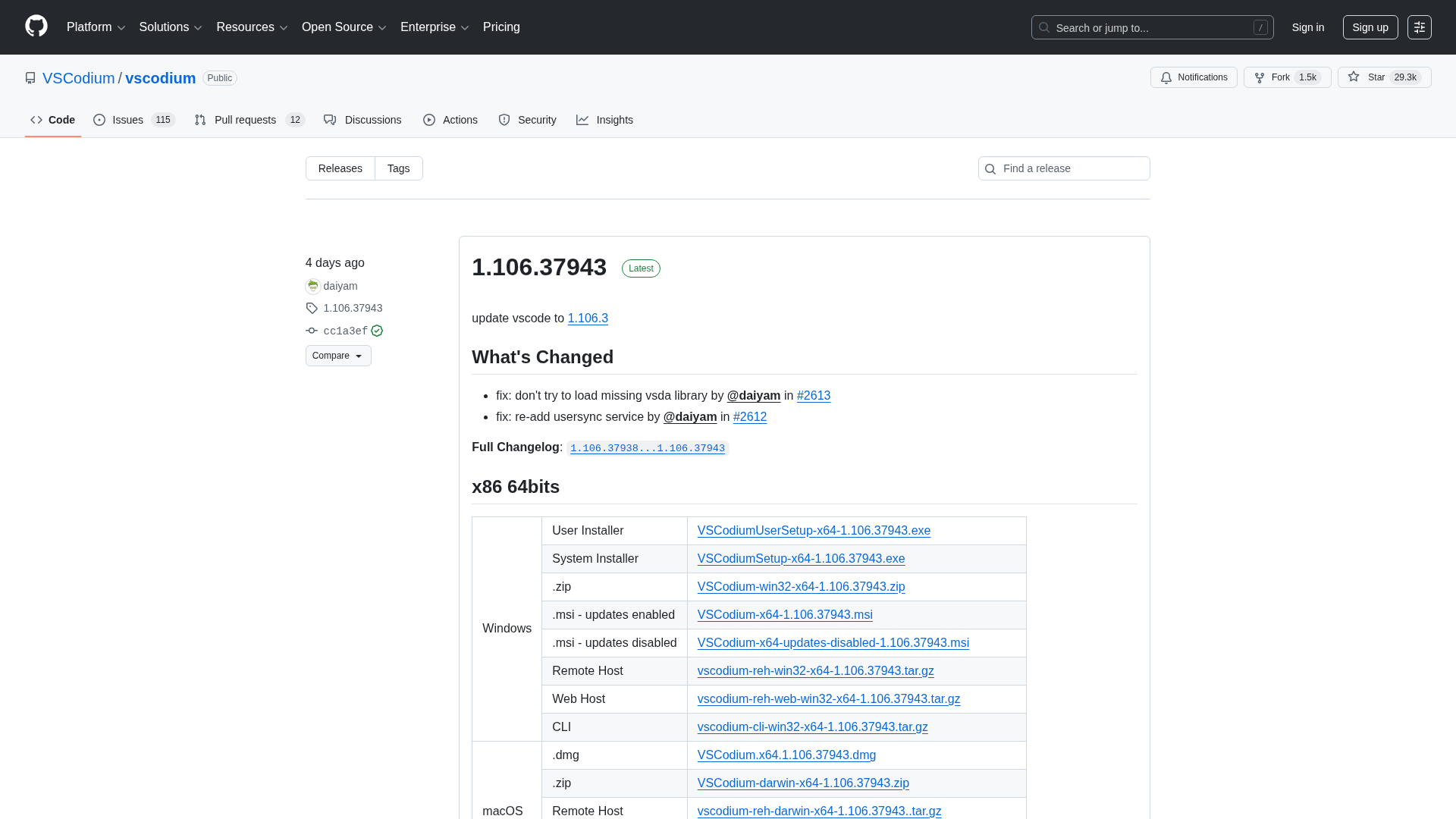

A comprehensive releases page for VSCodium with multi-arch downloads and versioned changelogs across 1.104–1.106 revisions.
