Topic Overview

Confidential computing and secure AI inference platforms protect sensitive inputs, model weights, and inference outputs by executing model workloads inside hardware‑backed trusted execution environments (TEEs) or audited enclaves, or by applying equivalent cryptographic approaches. This topic covers the technologies, governance controls, and platform integrations that organizations use to run AI agents and assistants without exposing data to cloud host operators or unmanaged third‑party services.

Relevance in 2026 is driven by widespread production use of agentic AI, stricter data‑protection rules, and demand for verifiable isolation when using third‑party models or cloud inference. Enterprises must balance privacy, compliance, and operational needs when choosing among enclave‑based confidential VMs, attestation and bring‑your‑own‑key (BYOK) key management, and cryptographic alternatives such as multi‑party computation (MPC) and fully homomorphic encryption (FHE), while managing latency and cost tradeoffs.

Key platform categories and examples: secure inference providers (platforms such as Nightfall and other hardware‑backed services) that offer enclave execution and attestation; AI infrastructure and visibility tools like Xilos that monitor agentic activity across services; no‑code/low‑code agent platforms such as StackAI and enterprise assistants like IBM watsonx Assistant that require secure inference to protect customer data; conversational and LLM providers (Anthropic’s Claude family) whose models are commonly deployed under confidential computing constraints; industry vertical solutions like Observe.AI for contact centers that process sensitive voice data; and inference/cloud accelerators such as Together AI that provide scalable GPU inference and can be paired with confidential compute stacks.

Selection and governance should start from the threat model. From there, evaluate the guarantees required (attestation, BYOK, provable isolation), integration with existing AI platforms, performance and cost implications, and tooling for monitoring, auditability, and policy enforcement. Confidential computing reduces exposure, but it is only one part of a broader AI security and governance program.
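
To make the attestation and BYOK guarantees more concrete, the sketch below shows one way a key‑release check could look: a customer‑held model key is handed over only if the enclave's attestation report is authentic, fresh, and matches an approved measurement. Everything here is an illustrative assumption and not any vendor's API: `AttestationReport`, `sign_report`, and `release_key` are hypothetical names, and an HMAC with a shared `hw_key` stands in for the asymmetric, vendor‑rooted signatures that real TEEs use.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical, simplified stand-in for a TEE attestation report."""
    measurement: str  # hex digest of the code/image loaded in the enclave
    nonce: str        # freshness value chosen by the key owner
    signature: str    # hex MAC over measurement and nonce (assumption: HMAC)


def sign_report(measurement: str, nonce: str, hw_key: bytes) -> str:
    """Simulate the hardware signing a report (real TEEs use asymmetric keys)."""
    message = f"{measurement}|{nonce}".encode()
    return hmac.new(hw_key, message, hashlib.sha256).hexdigest()


def release_key(
    report: AttestationReport,
    expected_nonce: str,
    trusted_measurements: Set[str],
    hw_key: bytes,
    model_key: bytes,
) -> Optional[bytes]:
    """Return the customer-held key only if every attestation check passes."""
    # 1. Authenticity: the signature must match the report contents.
    expected_sig = sign_report(report.measurement, report.nonce, hw_key)
    if not hmac.compare_digest(expected_sig, report.signature):
        return None
    # 2. Freshness: the nonce must be the one issued for this request.
    if not hmac.compare_digest(report.nonce, expected_nonce):
        return None
    # 3. Identity: the enclave must be running an approved image.
    if report.measurement not in trusted_measurements:
        return None
    return model_key
```

In this sketch the key owner, not the cloud host, decides which measurements are trusted, which is the property BYOK is meant to preserve. A production design would instead verify the vendor's certificate chain and release the key through a key management service.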

Tool Rankings – Top 6

Intelligent Agentic AI Infrastructure

End-to-end no-code/low-code enterprise platform for building, deploying, and governing AI agents that automate work on un…

Enterprise virtual agents and AI assistants built with watsonx LLMs for no-code and developer-driven automation.

Anthropic's Claude family: conversational and developer AI assistants for research, writing, code, and analysis.

Enterprise conversation-intelligence and GenAI platform for contact centers: voice agents, real-time assist, auto QA, &…

A full-stack AI acceleration cloud for fast inference, fine-tuning, and scalable GPU training.

Latest Articles (66)

A vendor‑agnostic guide to the 14 best AI governance platforms in 2025, with criteria, comparisons, and practical buying guidance.

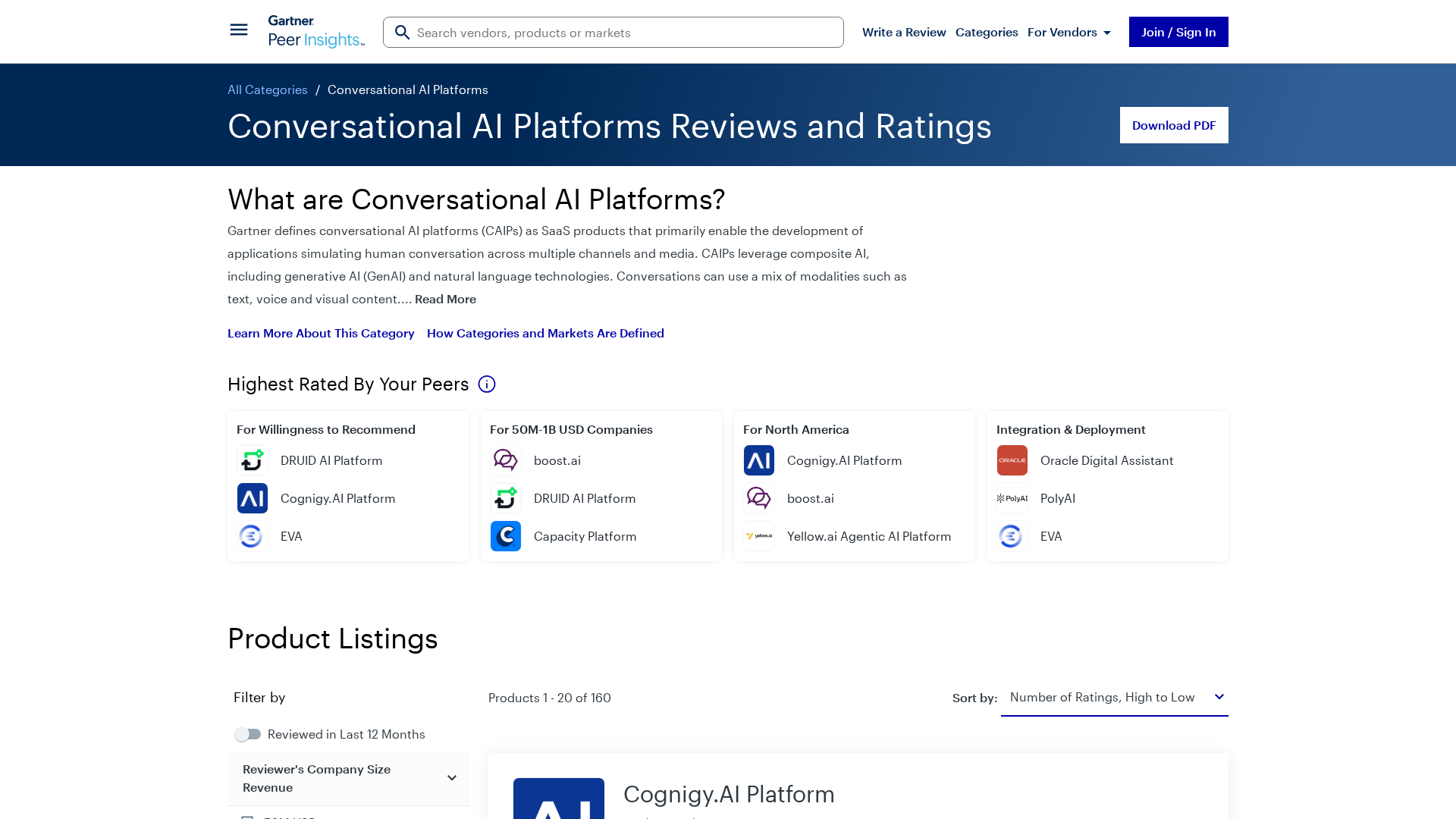

Gartner’s market view on conversational AI platforms, outlining trends, vendors, and buyer guidance.

OpenAI’s bypass moment underscores the need for governance that survives inevitable user bypass and hardens system controls.

A call to enable safe AI use at work via sanctioned access, real-time data protections, and frictionless governance.

Baseten launches an AI training platform to compete with hyperscalers, promising simpler, more transparent ML workflows.