Topic Overview

This topic examines how organizations evaluate and deploy AI content moderation and safety tools after reported concerns about model image handling and safety (e.g., public incidents that exposed gaps in image handling and policy enforcement). It covers the intersecting categories of AI Content Detectors, AI Governance Tools, Regulatory Compliance Tools, and AI Security Governance, and explains how buyers should combine detection, monitoring, and governance to reduce operational and regulatory risk.

Relevance: heightened regulatory scrutiny, emerging standards, and real-world model failures have pushed safety and traceability to the top of enterprise procurement lists. Buyers now expect end-to-end capabilities: robust detectors for harmful or disallowed content, continuous model and data monitoring, vendor and policy management, and evidence suitable for audits and incident response.

Key tools and roles: AI Content Detectors provide automated flags and confidence signals for text and images. AI Governance Tools (e.g., Monitaur) centralize policy, monitoring, validation, and vendor oversight, which is useful in heavily regulated sectors like insurance. Model and infrastructure providers such as Mistral AI and Vertex AI combine foundation models and managed platforms with controls for privacy, fine-tuning, and deploy-time safeguards. Platforms like StackAI enable no-code/low-code agent orchestration and governance for operational teams. Data-focused services such as DatologyAI improve training and evaluation datasets to reduce risky model behavior upstream.

Practical implications: effective programs integrate detectors, governance platforms, model lifecycle controls, and curated data pipelines so incidents can be detected, investigated, and remediated with audit trails. Procurement decisions should weigh interoperability, explainability, incident response workflows, and compliance reporting to meet evolving legal and security expectations.
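To make the link between detector confidence signals and audit trails concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not any vendor's API: the threshold values, the `AuditRecord` fields, and the function names are all hypothetical, and a real program would take them from its own policy and governance platform.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

# Hypothetical thresholds; real values depend on the detector and the policy.
BLOCK_THRESHOLD = 0.90
REVIEW_THRESHOLD = 0.60


@dataclass
class AuditRecord:
    """One audit-trail entry for a single moderation decision (assumed schema)."""
    content_sha256: str  # hash of the content, so the log need not store it
    detector: str        # name of the detector that produced the signal
    label: str           # policy category flagged by the detector
    confidence: float    # detector confidence in [0, 1]
    action: str          # "block", "human_review", or "allow"
    timestamp: str       # UTC time of the decision, ISO 8601


def decide_action(confidence: float) -> str:
    """Map a detector confidence score to a moderation action."""
    if confidence >= BLOCK_THRESHOLD:
        return "block"
    if confidence >= REVIEW_THRESHOLD:
        return "human_review"
    return "allow"


def record_decision(content: bytes, detector: str, label: str,
                    confidence: float) -> AuditRecord:
    """Build the audit record for one piece of content."""
    return AuditRecord(
        content_sha256=hashlib.sha256(content).hexdigest(),
        detector=detector,
        label=label,
        confidence=confidence,
        action=decide_action(confidence),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


def to_audit_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

For example, `to_audit_line(record_decision(image_bytes, "demo-detector", "violence", 0.72))` would yield one JSON line with the action `human_review`. Hashing the content rather than storing it is one possible design choice: it keeps flagged material out of the log while still letting an investigator match a record to a specific item.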

Tool Rankings – Top 5

Insurance-focused enterprise AI governance platform centralizing policy, monitoring, validation, vendor governance and …

Enterprise-focused provider of open/efficient models and an AI production platform emphasizing privacy, governance, and …

End-to-end no-code/low-code enterprise platform for building, deploying, and governing AI agents that automate work on …

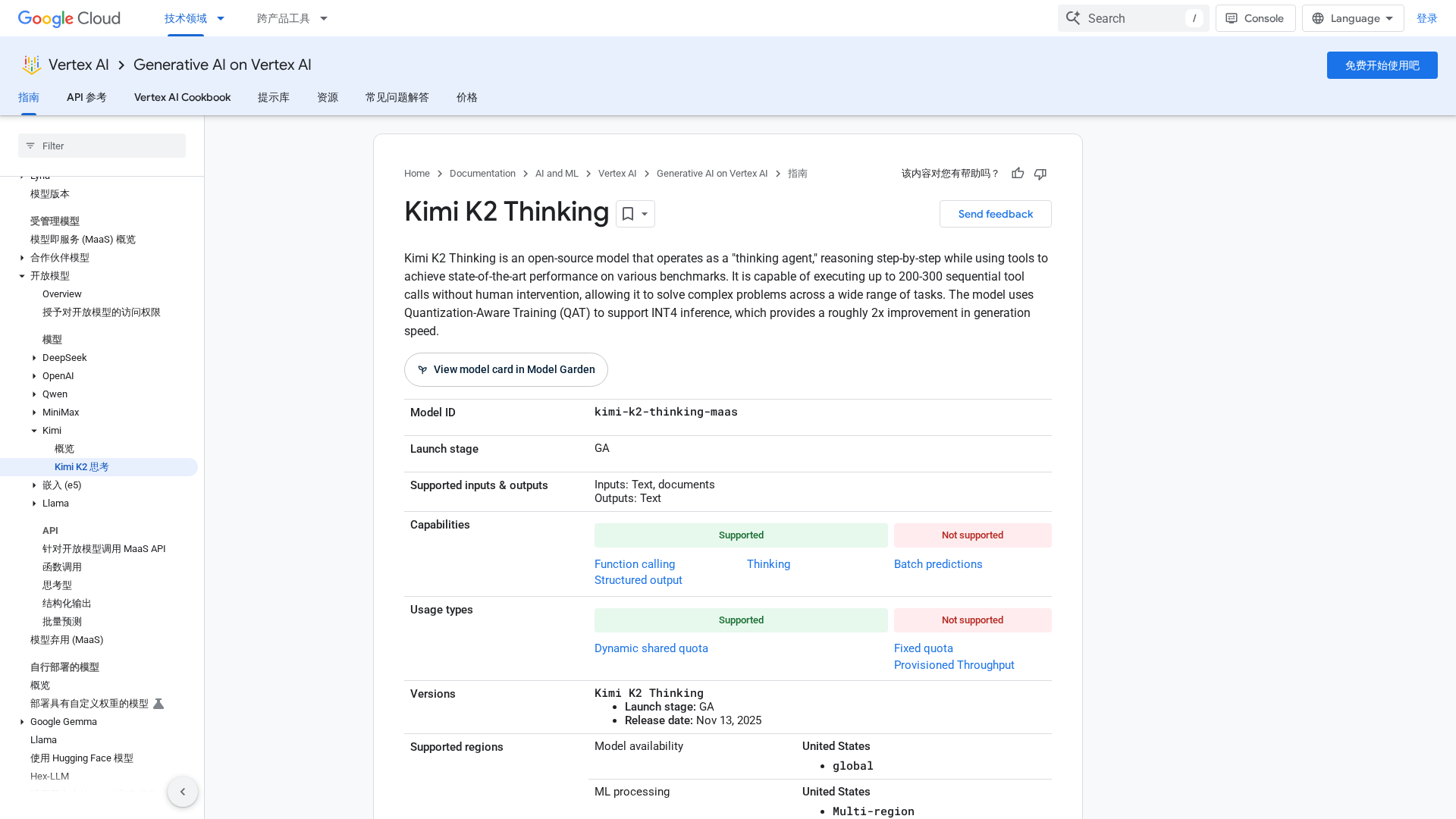

Unified, fully-managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

Data-curation-as-a-service to train models faster, better, and smaller.

Latest Articles (30)

Exclusive Dallas dinner for tech leaders to discuss secure AI agents, ROI-focused workflows, and governance-driven AI strategy.

Promotes Retrieval-Augmented Generation (RAG) as a means to ground enterprise AI in company data for accuracy, relevance, and governance.

An open-source thinking agent on Vertex AI that performs long chain-of-thought reasoning with autonomous tool use and INT4-accelerated inference.

AI governance must be designed from day one to ensure performance and risk mitigation, not a checkbox exercise.

A 2025 guide comparing the top AEO tools to track, optimize, and secure AI citations.