Topic Overview

This topic compares the landscape of AI inference chips and supporting hardware in 2026, focusing on the contest between hyperscaler/consumer-platform players (e.g., Meta), incumbent GPU vendors (e.g., NVIDIA), and newer specialists. Demand for high-throughput, low-latency inference for LLMs and multimodal workloads has pushed architectures toward purpose-built accelerators (chiplets, SoCs) and software stacks that optimize power, latency, and cost across edge and datacenter contexts. Key evaluation axes include throughput, latency, watts-per-inference, software ecosystem maturity, ease of deployment, and implications for privacy and decentralization.

Representative tools highlight current directions: Rebellions.ai targets hyperscale data centers with energy-efficient inference accelerators and a GPU-class software stack for high-throughput LLM and multimodal serving; Stable Code (Stability AI) represents an edge-focused model family of compact, instruction-tuned code LLMs optimized for private, low-latency code completion on-device; Xilos provides enterprise infrastructure for managing agentic AI activity and visibility across distributed services, a nod to rising demand for operational tooling in decentralized and hybrid deployments.

Why this matters in 2026: organizations are balancing the cost and carbon footprint of large-scale inference with regulatory and user expectations for privacy and on-device processing. The market is fragmenting: general-purpose GPUs remain strong for training and flexible inference, while chiplet-based SoCs and bespoke accelerators promise energy gains for sustained production workloads. Edge AI vision platforms and decentralized AI infrastructure are converging: compact models and efficient hardware enable private, near-user inference, while orchestration layers and observability tools manage distributed agents and services. Comparing hardware therefore requires assessing silicon, system design, software integration, and operational controls together rather than in isolation.
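To make the evaluation axes above concrete, here is a minimal Python sketch of how throughput, tail latency, and power might be combined into an energy-per-inference comparison. The `AcceleratorProfile` type, the helper functions, and the example figures are all hypothetical and do not describe any real chip or vendor; the only fixed relationship is that watts divided by inferences per second gives joules per inference.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceleratorProfile:
    """Hypothetical measurements for one accelerator under a fixed workload."""
    name: str
    throughput_inf_per_s: float  # sustained inferences per second
    p99_latency_ms: float        # tail latency at that throughput
    avg_power_w: float           # average power draw while serving


def joules_per_inference(p: AcceleratorProfile) -> float:
    """Watts are joules per second, so W / (inferences/s) = J per inference."""
    return p.avg_power_w / p.throughput_inf_per_s


def energy_cost_per_million(p: AcceleratorProfile, usd_per_kwh: float) -> float:
    """Electricity cost in USD for one million inferences (1 kWh = 3.6e6 J)."""
    kwh = joules_per_inference(p) * 1_000_000 / 3_600_000
    return kwh * usd_per_kwh


def rank_by_energy(profiles, latency_budget_ms: float):
    """Drop profiles that miss the latency budget, then sort by energy use."""
    eligible = [p for p in profiles if p.p99_latency_ms <= latency_budget_ms]
    return sorted(eligible, key=joules_per_inference)


if __name__ == "__main__":
    # Illustrative, made-up numbers; not measurements of any real hardware.
    candidates = [
        AcceleratorProfile("chip-a", 2000.0, 40.0, 700.0),
        AcceleratorProfile("chip-b", 1500.0, 55.0, 300.0),
    ]
    for p in rank_by_energy(candidates, latency_budget_ms=60.0):
        print(p.name, joules_per_inference(p), energy_cost_per_million(p, 0.10))
```

Filtering on the latency budget before sorting reflects the point that no single axis decides the comparison: a lower-energy part only counts if it also meets the serving requirement. Software maturity and deployment effort are not captured here and would need separate, qualitative assessment.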

Tool Rankings – Top 3

Rebellions.ai: Energy-efficient AI inference accelerators and software for hyperscale data centers.

Stable Code: Edge-ready code language models for fast, private, and instruction-tuned code completion.

Xilos: Intelligent agentic AI infrastructure.

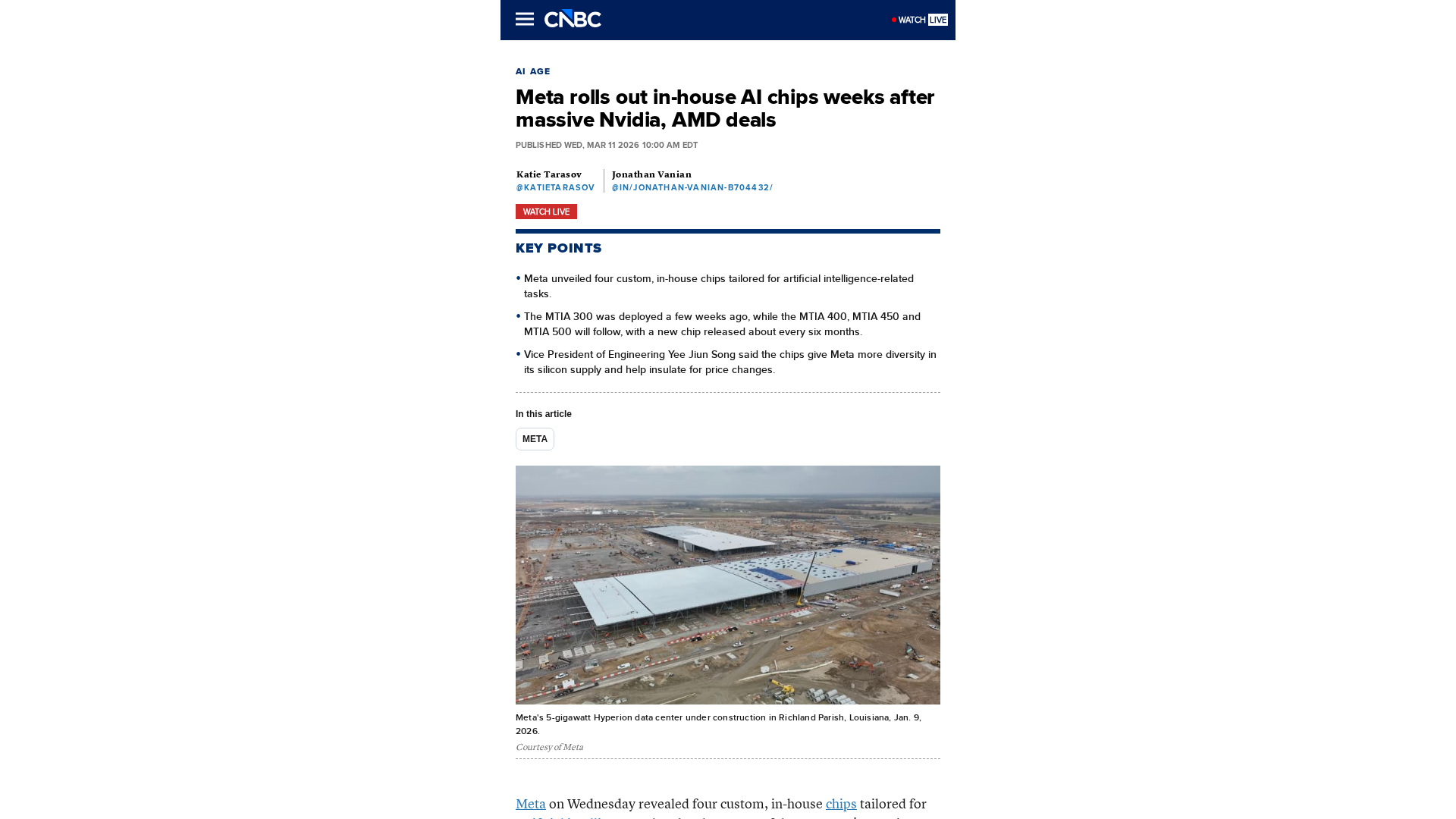

Latest Articles

OpenAI’s bypass moment underscores the need for governance that survives inevitable user bypass and hardens system controls.

A call to enable safe AI use at work via sanctioned access, real-time data protections, and frictionless governance.

A real-world look at AI in SOCs, debunking myths and highlighting the human role behind automation with Bell Cyber experts.

Explores the human role behind AI automation and how Bell Cyber tackles AI hallucinations in security operations.

Identity won’t secure agentic AI; you need runtime visibility and action-based policy.