Topic Overview

This topic covers the stack of inference‑focused hardware and accelerator platforms that power AI outside of training clusters: edge vision modules, server accelerators, and decentralized compute fabrics. Interest in purpose‑built silicon (examples include Groq’s next‑generation inference chips and Tesla’s inference‑oriented designs) and rack‑scale accelerators has grown because production AI workloads increasingly demand low latency, high throughput, and significantly lower energy per inference than general‑purpose GPUs. Key themes are hardware/software co‑design, chiplet and SoC architectures, and inference‑optimized runtimes that enable quantized LLMs and multimodal models to run on constrained devices or energy‑sensitive data centers.

Tools in this space illustrate practical approaches: Rebellions.ai builds energy‑efficient inference accelerators and a GPU‑class software stack aimed at hyperscale LLM and multimodal inference; Stability’s Stable Code family targets compact, edge‑ready code models for private, fast on‑device code completion; EchoComet demonstrates privacy‑first workflows by assembling code context locally for device‑side prompts.

Relevance in 2026 stems from three converging trends: wider deployment of large models at the edge (vision and code assistants), pressure to reduce carbon and operational costs in inference fleets, and a move toward decentralized or hybrid infrastructure that keeps data and compute closer to users. For architects and product teams, the choices now include selecting accelerators that match model precision and throughput needs, integrating inference runtimes that support quantization and batching, and adopting data‑local tooling to preserve privacy while minimizing network costs.

Tool Rankings – Top 3

Rebellions.ai: Energy-efficient AI inference accelerators and software for hyperscale data centers.

Stable Code: Edge-ready, instruction‑tuned code language models for fast, private code completion.

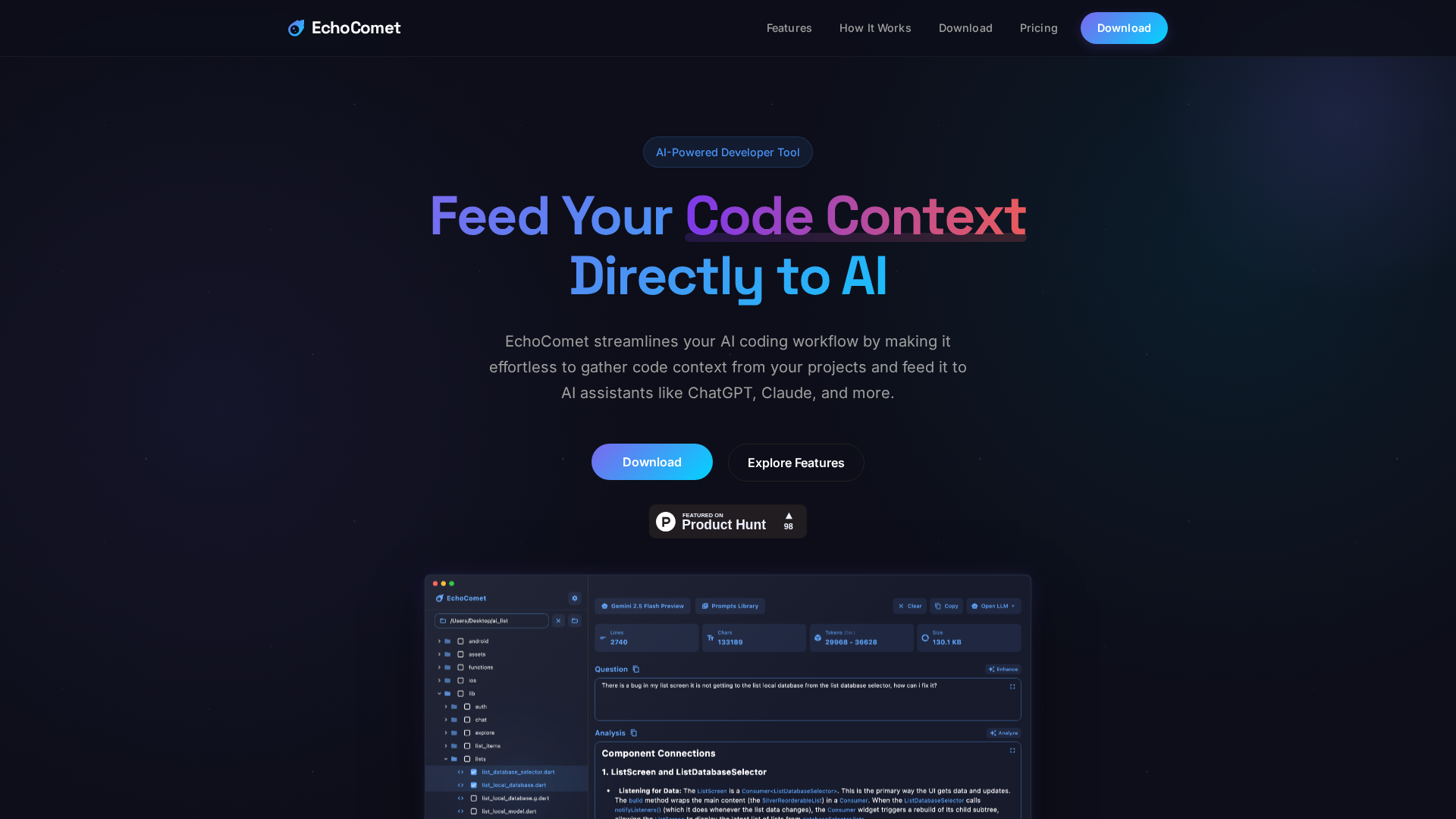

EchoComet: Feed your code context directly to AI.

Latest Articles (20)

EchoComet's contact page offers fast support, license recovery, and information on device limits for macOS.

EchoComet lets you gather code context locally and feed it to AI with large-context prompts for smarter, private AI assistance.

ProteanTecs expands in Japan with a new office and Noritaka Kojima as GM and Country Manager.

Rebellions names a new CBO and EVP to drive global expansion, while NST commends Qatar’s sustainability leadership.

Rebellions appoints Marshall Choy as CBO to drive global expansion and establish a U.S. market hub.