Topic Overview

This topic covers the AI accelerator hardware and inference server platforms used to run large language models (LLMs) and multimodal workloads. It spans general‑purpose GPUs and TPUs, deterministic, streamlined architectures often associated with “Groq‑class” chips, and newer purpose‑built inference accelerators (chiplets, SoCs, servers). It emphasizes both the silicon and the supporting software stacks (compilers, runtimes, orchestration) needed for high throughput, low latency, and energy efficiency.

As of 2026, demand for inference capacity has shifted the market toward specialized hardware and vertically integrated server platforms that reduce cost and power per query. Hyperscalers and startups alike are deploying inference‑optimized silicon and software to meet requirements for multimodal models, on‑prem and edge deployments, and privacy‑sensitive applications. Key trends include hardware specialization (inference vs. training), chiplet and SoC designs for density and efficiency, quantization and compiler toolchain advances, and orchestration layers that place workloads across cloud, edge, and decentralized nodes.

The tools ranked below illustrate these trends: Rebellions.ai focuses on energy‑efficient inference accelerators and a GPU‑class software stack for hyperscale LLM and multimodal serving; Windsurf (formerly Codeium) demonstrates how AI‑native developer platforms integrate multi‑model support and agentic workflows that benefit from low‑latency inference backends; and Stable Code shows the push toward compact, instruction‑tuned code models (3B‑class) optimized for private, edge, or on‑prem inference. Together they reflect a broader move toward heterogeneous hardware, tighter hardware‑software co‑design, and deployment patterns aimed at decentralized, energy‑aware AI infrastructure.

Tool Rankings – Top 3

Rebellions.ai: Energy-efficient AI inference accelerators and software for hyperscale data centers.

Windsurf: AI-native IDE and agentic coding platform (Windsurf Editor) with Cascade agents, live previews, and multi-model support.

Stable Code: Edge-ready code language models for fast, private, and instruction‑tuned code completion.

Latest Articles (18)

ProteanTecs expands in Japan with a new office and Noritaka Kojima as GM Country Manager.

Windsurf unveils SWE-1.5 and a bold plan for affordable, enterprise-ready AI coding.

Windsurf adds GPT-5.1 family, including Codex variants, to its AI toolkit for developers and enterprises.

Windsurf launches SWE-1.5 and shares its mission to deliver fast, affordable AI-powered coding tools.

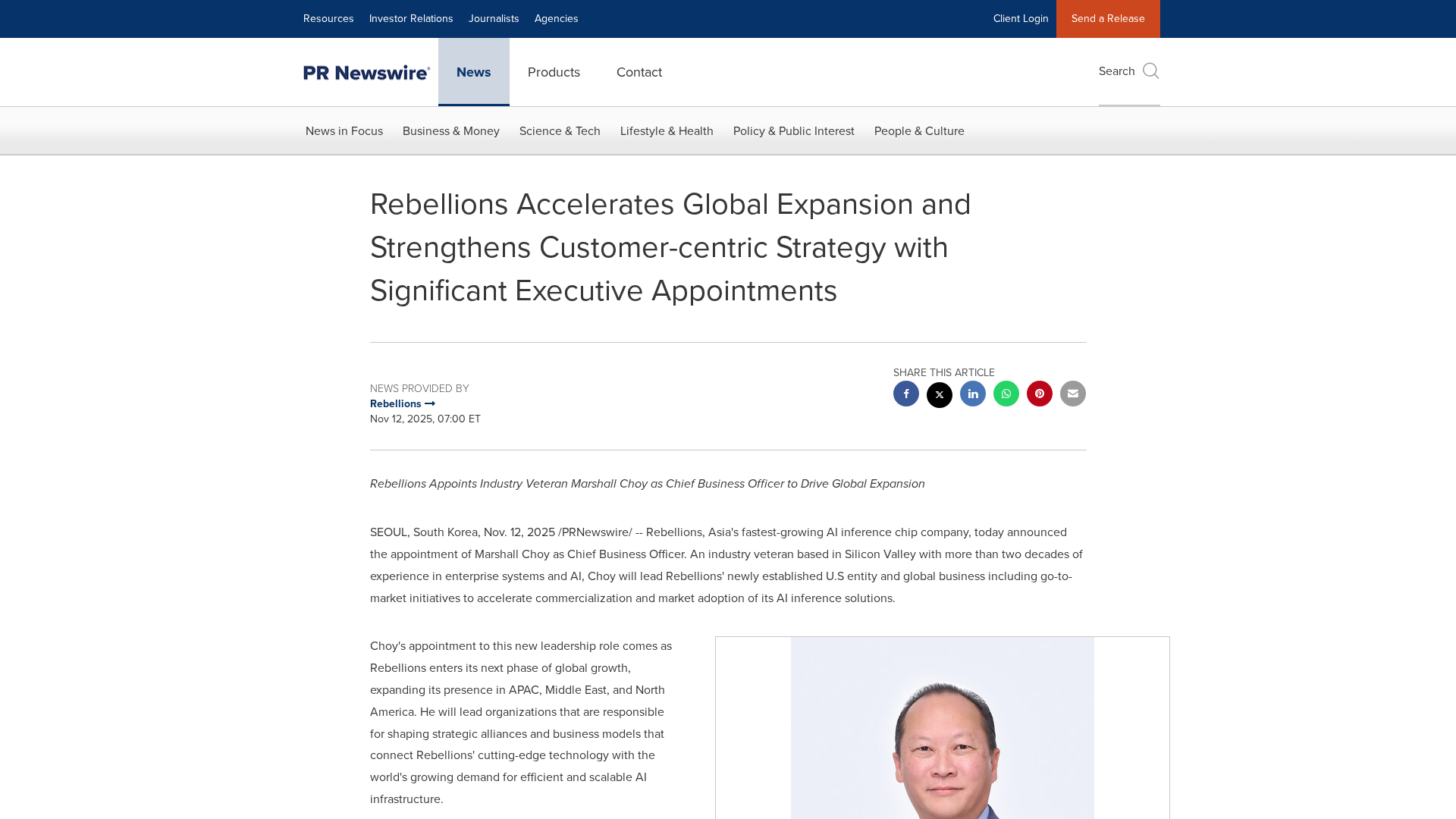

Rebellions appoints Marshall Choy as CBO to drive global expansion and establish a U.S. market hub.