Topic Overview

AI-assisted generation of smart contracts has accelerated development but has also increased the risk of on-chain bugs, exploits, and unexpected economic behaviors. This topic covers the toolchains and practices used to automatically detect, explain, and prevent vulnerabilities in contracts produced or modified by generative AI. Key approaches include static analysis, symbolic execution and formal verification, fuzzing and property-based tests, differential and adversarial testing, provenance and bill-of-materials (BOM) checks, and live on-chain monitoring with rollback or mitigation controls.

Practical tool categories span AI security governance (policy, monitoring, validation), GenAI test automation, AI code assistants, and code-generation platforms. Platform and framework components include LangChain for orchestrating agentic audit workflows and debugging pipelines; AutoGPT-style autonomous agents for continuous fuzzing, regression checks, and triage; LlamaIndex for retrieval-augmented auditors that combine vulnerability corpora, CVE histories, and annotated test cases; Vertex AI for training, fine-tuning, evaluating, and deploying auditing models at scale; and governance offerings such as Monitaur to centralize policy, vendor validation, and monitoring for regulated or insured deployments.

As of 2026-02-24, the practical trend is toward automation integrated into CI/CD and pre-deploy gates, backed by RAG-enabled explainability and continuous post-deploy monitoring. However, automated auditors complement, rather than replace, formal verification and expert review for high-value contracts. Effective pipelines combine AI assistants and autonomous test agents with deterministic verification, transparent audit trails, and governance controls to reduce false positives, provide human-readable explanations, and manage operational and compliance risk for on-chain applications.
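As a rough illustration of the fuzzing and property-based testing approach listed among the key approaches, the sketch below applies random transfers to a toy token ledger and checks two invariants after each step: total supply is conserved and no balance goes negative. Everything here is an assumption made for illustration: `ToyToken`, `BuggyToken`, and `fuzz_invariants` are hypothetical names, the ledger is a plain Python model rather than a deployed contract, and the standard-library `random` module stands in for a dedicated contract fuzzer.

```python
import random


class ToyToken:
    """Hypothetical in-memory model of a fungible token ledger (not a real contract)."""

    def __init__(self, balances):
        self.balances = dict(balances)

    def transfer(self, sender, recipient, amount):
        # Reject invalid transfers, roughly as a contract would revert.
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            return False
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount
        return True

    def total_supply(self):
        return sum(self.balances.values())


class BuggyToken(ToyToken):
    """Same ledger with a deliberately planted bug for the fuzzer to find."""

    def transfer(self, sender, recipient, amount):
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            return False
        # Bug: both balances are read before either is written, so a
        # self-transfer overwrites the debit and increases the balance.
        sender_before = self.balances[sender]
        recipient_before = self.balances.get(recipient, 0)
        self.balances[sender] = sender_before - amount
        self.balances[recipient] = recipient_before + amount
        return True


def fuzz_invariants(token, accounts, steps=1000, seed=0):
    """Apply random transfers; return a description of the first
    invariant violation, or None if none is observed."""
    rng = random.Random(seed)  # seeded so a failing run can be replayed
    expected_supply = token.total_supply()
    for step in range(steps):
        sender = rng.choice(accounts)
        recipient = rng.choice(accounts)
        amount = rng.randint(-5, 150)  # includes invalid and oversized amounts
        token.transfer(sender, recipient, amount)
        if token.total_supply() != expected_supply:
            return f"step {step}: supply changed after {sender}->{recipient} ({amount})"
        if any(balance < 0 for balance in token.balances.values()):
            return f"step {step}: negative balance"
    return None


if __name__ == "__main__":
    accounts = ["alice", "bob", "carol"]
    start = {name: 100 for name in accounts}
    print("ToyToken:", fuzz_invariants(ToyToken(start), accounts))
    print("BuggyToken:", fuzz_invariants(BuggyToken(start), accounts))
```

In a CI/CD or pre-deploy gate, a check of this kind would typically fail the build when a violation is returned. A passing run only means that no violation was observed within the sampled inputs, which is one reason such checks sit alongside formal verification and expert review rather than replacing them.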

Tool Rankings – Top 5

LangChain: Engineering platform and open-source frameworks to build, test, and deploy reliable AI agents.

AutoGPT: Platform to build, deploy, and run autonomous AI agents and automation workflows (self-hosted or cloud-hosted).

LlamaIndex: Developer-focused platform to build AI document agents, orchestrate workflows, and scale RAG across enterprises.

Vertex AI: Unified, fully managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

Monitaur: Insurance-focused enterprise AI governance platform centralizing policy, monitoring, validation, vendor governance and …

Latest Articles (40)

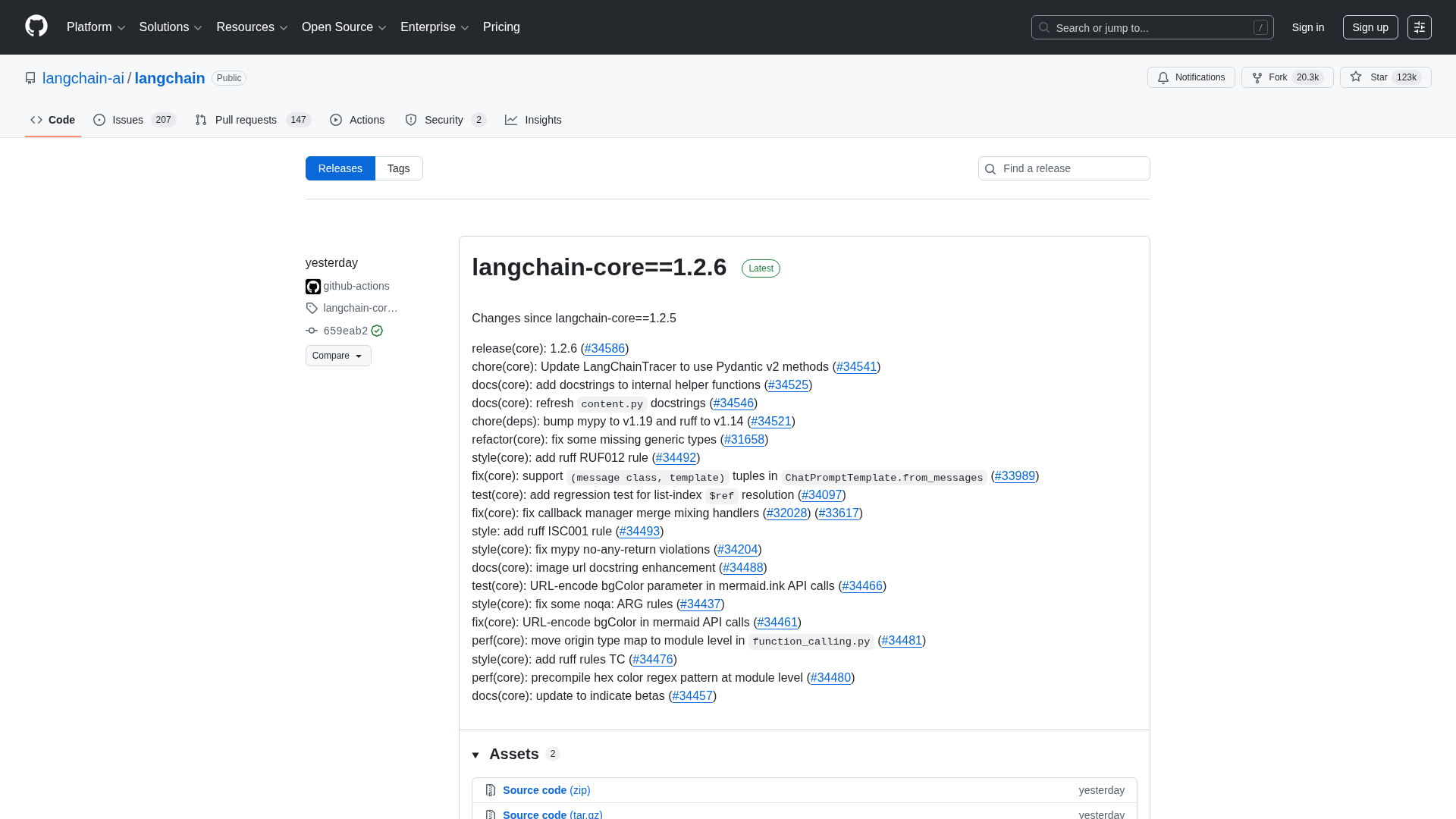

A comprehensive LangChain releases roundup detailing Core 1.2.6 and interconnected updates across XAI, OpenAI, Classic, and tests.

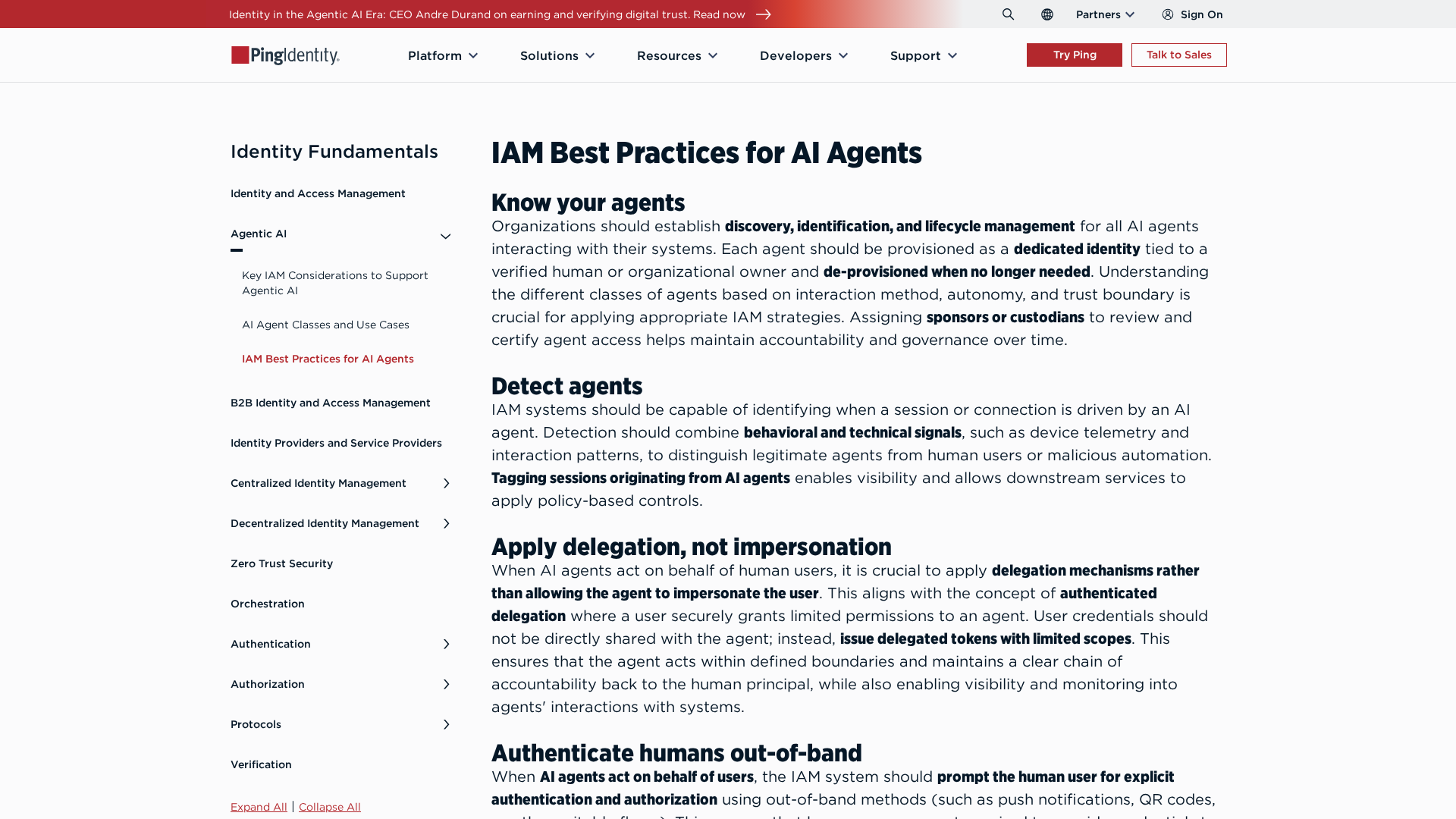

Best practices for securing AI agents with identity management, delegated access, least privilege, and human oversight.

A quick preview of POE-POE's pros and cons as seen in G2 reviews.

Get daily, curated trending ML papers delivered straight to your inbox.