Topic Overview

This topic covers the software and model stacks that make AI practical on local and edge hardware in 2026: lightweight inference runtimes, model compilers and quantization flows, agent/orchestration frameworks, and platform integrations that connect on-device inference to decentralized infrastructure and data pipelines. Demand for local inference has risen for three reasons: latency-sensitive vision and control workloads, tighter data-privacy regulation, and the availability of efficient open models and edge accelerators. Key categories include Edge AI Vision Platforms (real-time sensor and video inference), Decentralized AI Infrastructure (federated/peer and localized orchestration), and AI Data Platforms (on-device labeling, sync, and feedback loops).

Representative tools illustrate how these pieces fit together. LangChain provides frameworks for engineering stateful agentic applications and deployment workflows. Mistral AI offers efficient open foundation models plus enterprise production tooling oriented to privacy and governance. Tabby is an open, self-hosted coding assistant with local model serving. Windsurf (formerly Codeium) and JetBrains AI Assistant are agentic, in-IDE platforms that blend local and cloud inference. The Code Llama, StarCoder, and WizardLM families are code-specialized or instruction-tuned open models often used for on-prem or edge inference. Amazon CodeWhisperer represents the commercial counterpart: a developer assistant evolving toward hybrid local/cloud operation.

In practice, choosing a local/edge stack in 2026 means matching model formats and optimization toolchains to the target hardware, using multi-model orchestrators to handle fallbacks and enforce privacy boundaries, and integrating with data platforms to collect labeled feedback while keeping sensitive data local. The result is an ecosystem in which open models, compact runtimes, and orchestration frameworks enable production AI in constrained, regulated, or disconnected environments.
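A minimal Python sketch of the "fallbacks and privacy boundaries" pattern, assuming a hypothetical router that tries backends in priority order and restricts prompts matching simple sensitive-data patterns to local models. The class names, regex patterns, and stand-in backends are illustrative assumptions, not the API of LangChain or any other tool named here; a production system would use a proper PII detector and real runtime clients.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical backend type: a callable that takes a prompt and returns text,
# raising an exception when the model is unavailable.
Backend = Callable[[str], str]


@dataclass
class Route:
    name: str
    backend: Backend
    local: bool  # True if inference stays on-device / on-prem


@dataclass
class PrivacyAwareRouter:
    routes: List[Route]  # tried in priority order
    # Illustrative (not exhaustive) patterns for data that must stay local.
    sensitive_patterns: List[str] = field(default_factory=lambda: [
        r"\b\d{3}-\d{2}-\d{4}\b",    # SSN-like number
        r"[\w.+-]+@[\w-]+\.[\w.]+",  # email address
    ])
    # Feedback records kept in process memory for later labeling; never passed to a route.
    feedback_log: List[Dict[str, object]] = field(default_factory=list)

    def is_sensitive(self, prompt: str) -> bool:
        return any(re.search(p, prompt) for p in self.sensitive_patterns)

    def generate(self, prompt: str) -> str:
        sensitive = self.is_sensitive(prompt)
        # Privacy boundary: sensitive prompts are restricted to local routes.
        eligible = [r for r in self.routes if r.local or not sensitive]
        errors = []
        for route in eligible:
            try:
                output = route.backend(prompt)
            except Exception as exc:  # fall back to the next eligible route
                errors.append(f"{route.name}: {exc}")
                continue
            self.feedback_log.append(
                {"route": route.name, "sensitive": sensitive, "output": output}
            )
            return output
        raise RuntimeError("all eligible routes failed: " + "; ".join(errors))


# Stand-in backends for illustration; real ones would call a local runtime or an API.
def busy_local_7b(prompt: str) -> str:
    raise TimeoutError("accelerator busy")


def cloud_api(prompt: str) -> str:
    return f"[cloud] {prompt}"


def local_1b(prompt: str) -> str:
    return f"[local-1b] {prompt}"


router = PrivacyAwareRouter(routes=[
    Route("local-7b", busy_local_7b, local=True),
    Route("cloud", cloud_api, local=False),
    Route("local-1b", local_1b, local=True),
])

print(router.generate("Summarize the release notes"))     # local-7b fails, falls back to cloud
print(router.generate("Email alice@example.com a recap"))  # cloud excluded, uses local-1b
```

Putting a local route first keeps data on-device by default, and the eligibility filter guarantees the cloud route only ever sees prompts that pass the sensitivity check.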

Tool Rankings – Top 6

LangChain: Engineering platform and open-source frameworks for building, testing, and deploying reliable AI agents.

Mistral AI: Enterprise-focused provider of open/efficient models and an AI production platform emphasizing privacy, governance, and


Tabby: Open-source, self-hosted AI coding assistant with IDE extensions, model serving, and local-first/cloud deployment.

Windsurf (formerly Codeium): AI-native IDE and agentic coding platform (Windsurf Editor) with Cascade agents, live previews, and multi-model support.

Code Llama: Code-specialized Llama family from Meta, optimized for code generation, completion, and code-aware natural-language tasks.

StarCoder is a 15.5B-parameter multilingual code-generation model trained on The Stack with Fill-in-the-Middle and multi-query attention.
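Fill-in-the-Middle training is what lets StarCoder complete code at a cursor position rather than only at the end of a file. A minimal sketch of building an infilling prompt, assuming the `<fim_prefix>`, `<fim_suffix>`, `<fim_middle>`, and `<|endoftext|>` special tokens used by StarCoder's tokenizer (check the model card of the checkpoint you serve); the helper functions and the model output are hypothetical.

```python
from typing import Tuple

# Special tokens assumed from StarCoder's tokenizer; verify against the model card
# of the exact checkpoint you deploy.
FIM_PREFIX = "<fim_prefix>"
FIM_SUFFIX = "<fim_suffix>"
FIM_MIDDLE = "<fim_middle>"
EOS = "<|endoftext|>"


def split_at_cursor(source: str, cursor: int) -> Tuple[str, str]:
    """Split the editor buffer into the code before and after the cursor."""
    return source[:cursor], source[cursor:]


def build_fim_prompt(prefix: str, suffix: str) -> str:
    """Prefix-suffix-middle ordering: the model generates the missing middle after FIM_MIDDLE."""
    return f"{FIM_PREFIX}{prefix}{FIM_SUFFIX}{suffix}{FIM_MIDDLE}"


def splice_completion(prefix: str, raw_output: str, suffix: str) -> str:
    """Cut the generated middle at the end-of-text token and insert it at the cursor."""
    middle = raw_output.split(EOS, 1)[0]
    return prefix + middle + suffix


source = "def add(a, b):\n    \n\nprint(add(1, 2))\n"
cursor = source.index("    ") + 4  # cursor sits on the empty function body
prefix, suffix = split_at_cursor(source, cursor)
prompt = build_fim_prompt(prefix, suffix)

# Hypothetical model output; a real call would send `prompt` to a locally served StarCoder.
raw_output = "return a + b" + EOS
print(splice_completion(prefix, raw_output, suffix))
```

Because the suffix is part of the prompt, the model can condition on code that follows the cursor, which plain left-to-right completion cannot do.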

Latest Articles (45)

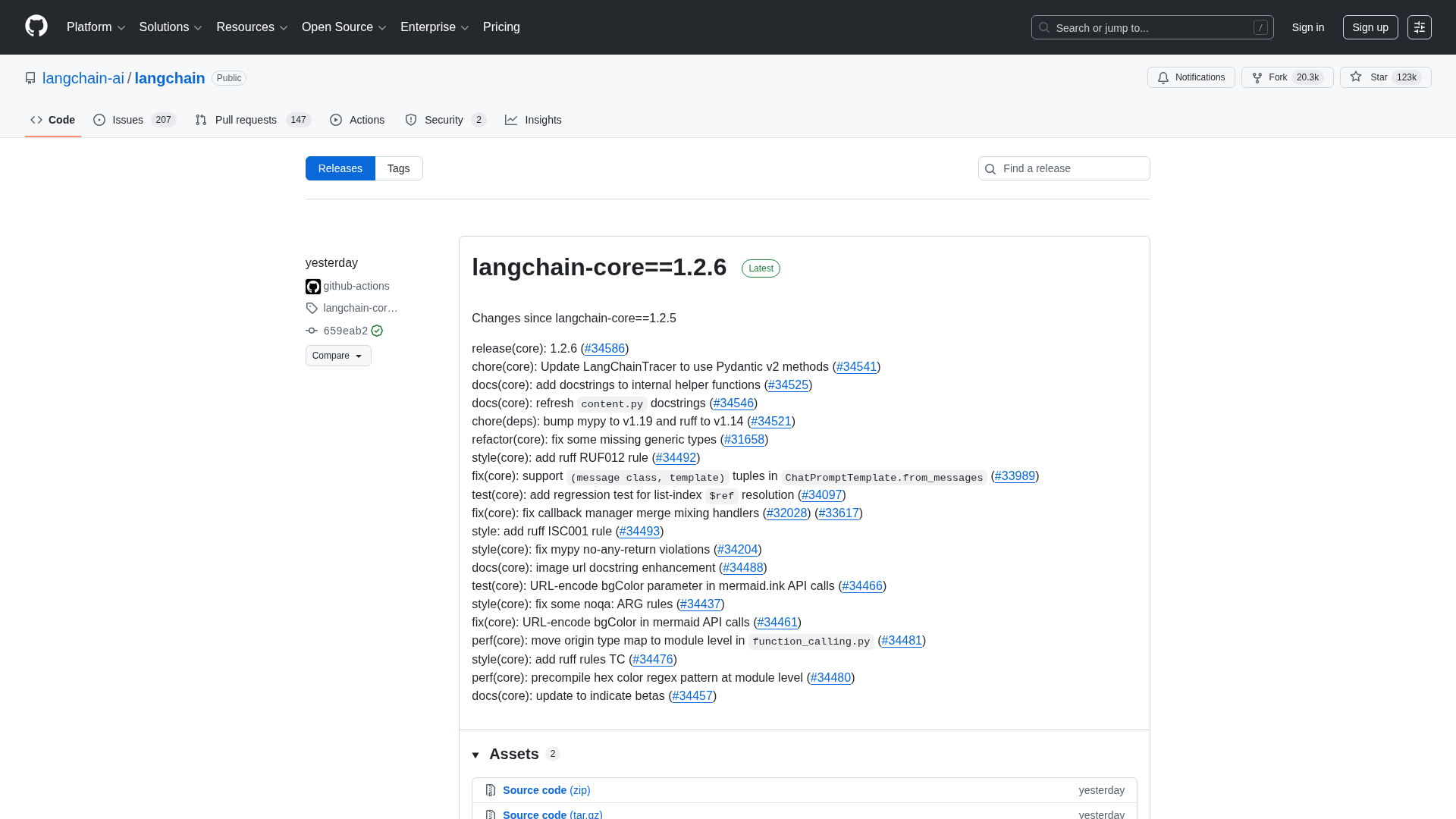

A roundup of LangChain releases covering Core 1.2.6 and related updates to the xAI and OpenAI integrations, LangChain Classic, and the test suite.


Adobe nears a $1.9 billion deal to acquire Semrush, expanding its marketing software capabilities, according to The Wall Street Journal.

A quick preview of POE-POE's pros and cons as seen in G2 reviews.
