Topic Overview

This topic explores the practical tradeoffs between AI code generators that accelerate smart‑contract development and AI auditing tools that surface security, correctness and governance issues. Code generators range from in‑IDE assistants (GitHub Copilot, JetBrains AI Assistant, Amazon CodeWhisperer) to model families (Meta’s Code Llama, StarCoder, Stable Code). They produce completions, whole‑function suggestions and natural‑language‑driven edits that cut boilerplate and speed prototyping. Open‑source and local options (Tabby, Aider, StarCoder, Stable Code) also support privacy and self‑hosted workflows for sensitive codebases. Auditing and quality platforms, exemplified by Qodo (formerly Codium), focus on context‑aware code review, automated test generation, SDLC governance, and multi‑repo quality controls.

In practice, teams pair generators for rapid authoring with auditing tools for fuzzing, symbolic checks, test synthesis and policy enforcement in CI/CD. Key trends through early 2026 include tighter integration of audit checks into developer workflows, the rise of smaller edge‑ready models for low‑latency private completions, and broader adoption of automated test generation to catch logic errors in DeFi and on‑chain code.

Despite the productivity gains, limitations remain: LLMs can hallucinate, offer no formal guarantees, and struggle with long, interdependent contracts when they lack up‑to‑date on‑chain context. Effective use combines AI generation with deterministic verification (formal methods), human review, and security testing. For teams building smart contracts, the central question is not whether to use AI, but how to pair copilot‑style generation with rigorous auditing and governance to manage risk while accelerating delivery.
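As a rough illustration of pairing generated code with a deterministic check, the sketch below fuzzes a toy token ledger and verifies that total supply is conserved. Everything in it is hypothetical and chosen for illustration: `ToyToken`, `fuzz_supply_invariant`, and the account names are assumptions, and a Python class stands in for real contract code. It is not how any tool listed here works internally.

```python
import random


class ToyToken:
    """Hypothetical in-memory stand-in for a token contract's ledger."""

    def __init__(self, balances):
        self.balances = dict(balances)

    def transfer(self, sender, recipient, amount):
        # Reject invalid transfers without touching state.
        if amount <= 0 or self.balances.get(sender, 0) < amount:
            return False
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount
        return True


def fuzz_supply_invariant(token, accounts, rounds=1000, seed=0):
    """Apply random transfers; return True if the invariants held throughout."""
    rng = random.Random(seed)  # fixed seed keeps any failure reproducible
    total = sum(token.balances.values())
    for _ in range(rounds):
        sender = rng.choice(accounts)
        recipient = rng.choice(accounts)
        amount = rng.randint(-5, 150)  # includes invalid and oversized amounts
        token.transfer(sender, recipient, amount)
        # Invariant 1: transfers never create or destroy tokens.
        if sum(token.balances.values()) != total:
            return False
        # Invariant 2: no account ever goes negative.
        if any(balance < 0 for balance in token.balances.values()):
            return False
    return True


if __name__ == "__main__":
    token = ToyToken({"alice": 100, "bob": 50, "carol": 0})
    print(fuzz_supply_invariant(token, ["alice", "bob", "carol"]))
```

A check like this could run in CI against AI‑generated changes. It complements human review and formal verification and does not replace them, since random testing only samples the input space.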

Tool Rankings – Top 6

An AI pair programmer that gives code completions, chat help, and autonomous agent workflows across editors, the terminal

Code-specialized Llama family from Meta optimized for code generation, completion, and code-aware natural-language tasks

StarCoder is a 15.5B multilingual code-generation model trained on The Stack with Fill-in-the-Middle and multi-query attention

Quality-first AI coding platform for context-aware code review, test generation, and SDLC governance across multi-repo,

AI-driven coding assistant (now integrated with/rolling into Amazon Q Developer) that provides inline code suggestions,

Edge-ready code language models for fast, private, and instruction‑tuned code completion.

Latest Articles (55)

A step-by-step guide to building an AI-powered Reliability Guardian that reviews code locally and in CI with Qodo Command.

A developer chronicles switching to Zed on Linux, prototyping on a phone, and a late-night video correction.

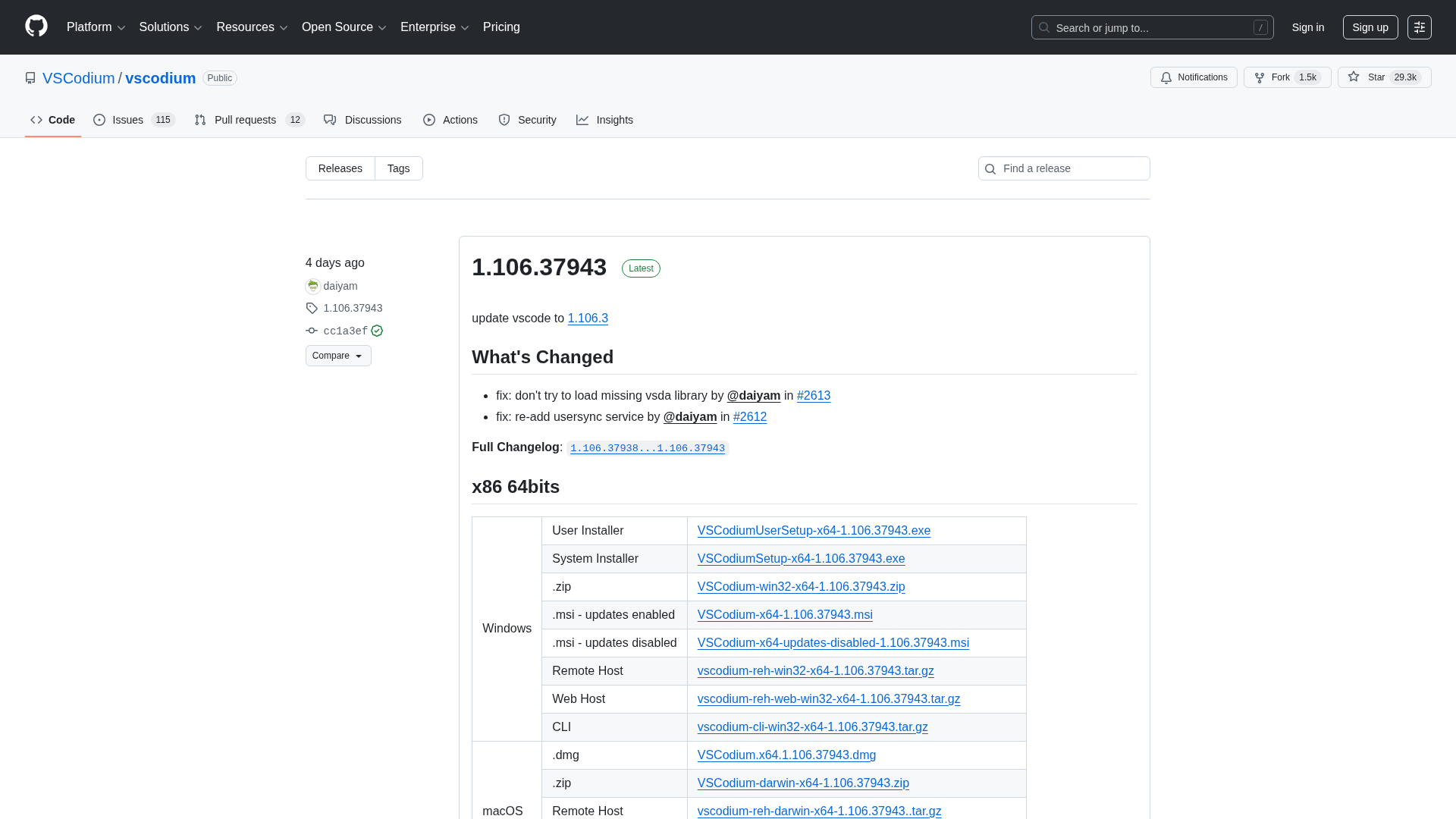

A comprehensive releases page for VSCodium with multi-arch downloads and versioned changelogs across 1.104–1.106 revisions.

Qodo ranks highest for Codebase Understanding by Gartner, highlighting cross-repo context as essential for scalable AI development.

Context-aware, enterprise-grade AI code review that scales across multi-repo ecosystems and enforces policies.