Topic Overview

Generative AI with local compute covers architectures and products that move model inference, data processing, or agent execution from remote cloud servers onto end-user machines, private infrastructure, or edge devices. Anthropic's "Use Your Computer" capability exemplifies this shift: it lets Claude access or offload work to a user's local environment so that sensitive inputs and context can be processed locally rather than sent to the cloud. As of 2026-04-02, enterprises and developers are prioritizing on-device and self-hosted options to reduce latency, meet compliance and data-residency requirements, and retain tighter control over IP and telemetry.

The space spans compact, edge-ready models (for example, Stability's Stable Code family and open-source options such as nlpxucan/WizardLM and Meta's Code Llama) and tools that feed local context securely to models (EchoComet, Tabnine, JetBrains AI Assistant). Platforms such as StackAI and Xilos target enterprise agent orchestration and visibility, while Qodo focuses on code quality, testing, and SDLC governance in multi-repo environments. Together these categories form a stack with four layers: lightweight or self-hosted models; local context ingestion and IDE integration; agent orchestration and observability; and governance controls that enforce policies and audit behavior.

Key trends driving adoption include model optimization for small footprints, hybrid cloud/local deployment patterns, growing regulatory scrutiny of data flows, and demand for provenance and audit trails for agentic activity. Implementers must balance performance and UX against security: local compute reduces cloud exposure but introduces patching, endpoint-hardening, and policy-enforcement challenges. Evaluating alternatives involves tradeoffs across model capability, deployment complexity, governance tooling, and developer ergonomics, which makes this an active area for product and security teams planning AI beyond the public API model.
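To make the hybrid cloud/local pattern and the audit-trail requirement more concrete, here is a minimal Python sketch of a routing policy. It does not describe how any of the products named above work. The patterns, the `route` function, the `AuditLog` class, and the `max_local_chars` threshold are all hypothetical names and values chosen for illustration.

```python
import hashlib
import re
from dataclasses import dataclass, field

# Hypothetical patterns that a governance policy might treat as sensitive.
SENSITIVE_PATTERNS = [
    re.compile(r"api[_-]?key", re.IGNORECASE),
    re.compile(r"password", re.IGNORECASE),
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
]


@dataclass
class AuditLog:
    """In-memory audit trail; a real deployment would persist this."""
    entries: list = field(default_factory=list)

    def record(self, prompt: str, target: str, reason: str) -> None:
        # Store a hash of the prompt rather than the prompt itself,
        # so the log does not become a second copy of sensitive data.
        digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        self.entries.append(
            {"prompt_sha256": digest, "target": target, "reason": reason}
        )


def route(prompt: str, log: AuditLog, max_local_chars: int = 4000) -> str:
    """Return "local" or "cloud" for a prompt and record the decision."""
    if any(p.search(prompt) for p in SENSITIVE_PATTERNS):
        # Sensitive content never leaves the machine, regardless of size.
        target, reason = "local", "matched sensitive pattern"
    elif len(prompt) > max_local_chars:
        # Assumed limit of the local model's usable context.
        target, reason = "cloud", "exceeds local context budget"
    else:
        target, reason = "local", "default to local"
    log.record(prompt, target, reason)
    return target
```

In this sketch the sensitivity check runs before the size check, so a large prompt that contains a credential still stays local. This is one possible reading of the "reduce cloud exposure" goal, chosen here at the cost of capability.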

Tool Rankings – Top 6

Edge-ready code language models for fast, private, and instruction‑tuned code completion.

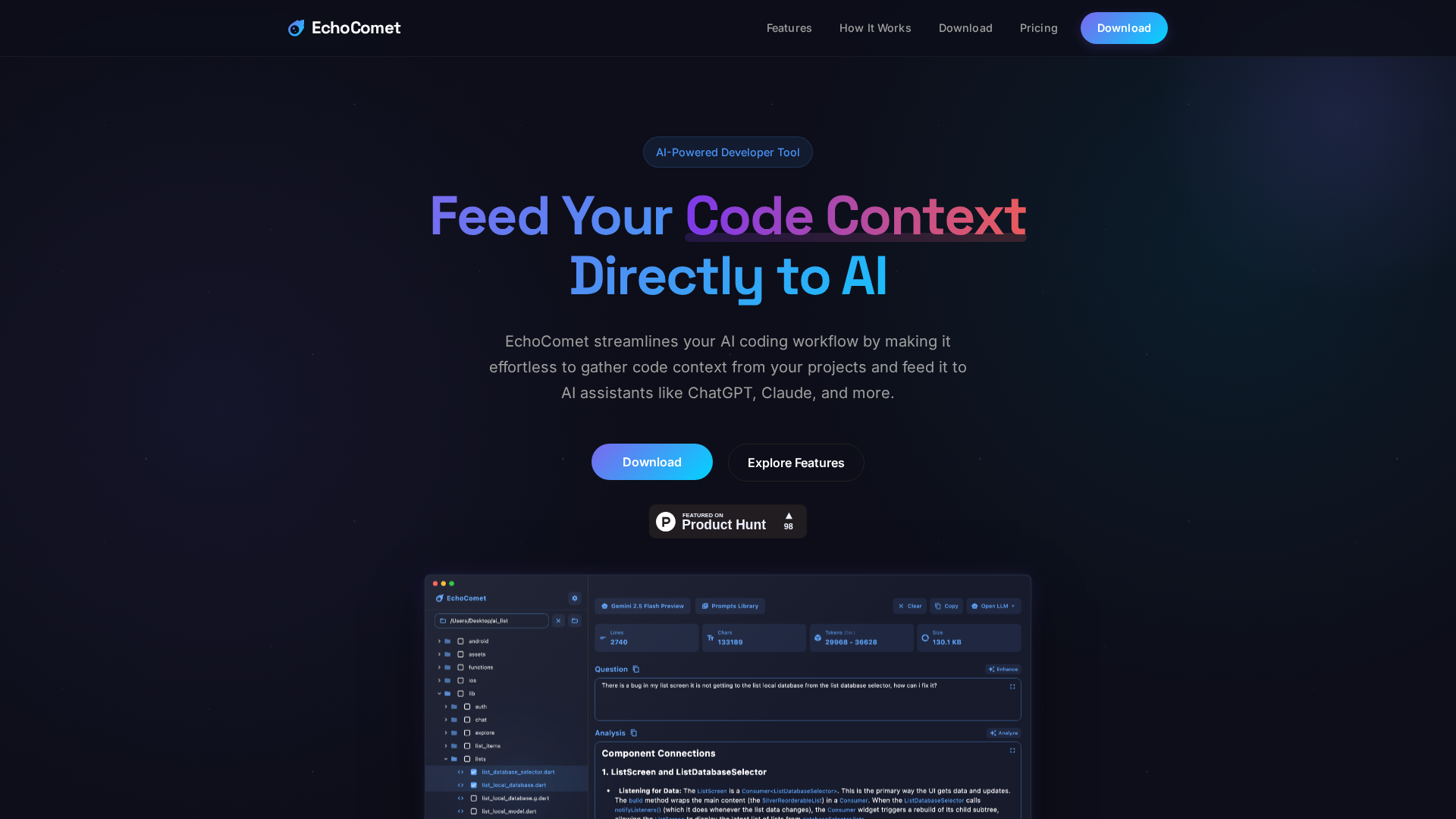

Feed your code context directly to AI

Intelligent Agentic AI Infrastructure

Enterprise-focused AI coding assistant emphasizing private/self-hosted deployments, governance, and context-aware code.

End-to-end no-code/low-code enterprise platform for building, deploying, and governing AI agents that automate work onun

Open-source family of instruction-following LLMs (WizardLM/WizardCoder/WizardMath) built with Evol-Instruct, focused on

Latest Articles (50)

EchoComet lets you gather code context locally and feed it to AI with large-context prompts for smarter, private AI assistance.

EchoComet's contact page covers fast support, license recovery, and information on device limits for macOS.

OpenAI’s bypass moment underscores the need for governance that survives inevitable user bypass and hardens system controls.

A call to enable safe AI use at work via sanctioned access, real-time data protections, and frictionless governance.

Explores the human role behind AI automation and how Bell Cyber tackles AI hallucinations in security operations.