Topic Overview

This topic covers long‑context and multi‑step reasoning LLMs: models and toolchains that can hold far larger input and state windows and reliably execute multi‑turn, multi‑step logic across documents, tools, and memory. As of 2026‑02‑23 this area matters because production use cases (complex document QA, code synthesis across large repositories, regulatory compliance checks, and automated agent workflows) depend on models that can access long context, use external tools, and maintain coherent multi‑step plans. Key model families include Anthropic’s Claude line (e.g., Sonnet variants) and Google’s Gemini family (e.g., Gemini 3.1 Pro), alongside GPT‑class long‑context variants; these prioritize expanded context windows, multimodal inputs, and interfaces for tool calling and retrieval.

Complementary tooling enables production workflows: LangChain provides engineering frameworks to orchestrate agentic chains and evaluations; LlamaIndex converts unstructured corpora into retrieval‑ready indexes for RAG; Vertex AI offers managed infrastructure for training, deploying, and monitoring models at scale; and AutoGPT‑style platforms run persistent agents and automation flows.

Practical trends to watch include tighter integration of retrieval‑augmented generation, stateful memory, deterministic planning primitives for multi‑step tasks, and standardized evaluation pipelines for reasoning reliability and safety. For marketplaces, automation platforms, GenAI test automation, and AI data platforms, the focus is shifting from single‑prompt outputs to reproducible, debuggable pipelines that combine large contexts, tool use, and rigorous testing. Organizations evaluating these technologies should weigh context capacity, latency and cost, orchestration tooling, and evaluation frameworks against one another to deploy multi‑step applications reliably and safely.

Tool Rankings – Top 6

Anthropic's Claude family: conversational and developer AI assistants for research, writing, code, and analysis.

Google’s multimodal family of generative AI models and APIs for developers and enterprises.

Engineering platform and open-source frameworks to build, test, and deploy reliable AI agents.

Developer-focused platform to build AI document agents, orchestrate workflows, and scale RAG across enterprises.

Unified, fully-managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

Platform to build, deploy and run autonomous AI agents and automation workflows (self-hosted or cloud-hosted).

Latest Articles (65)

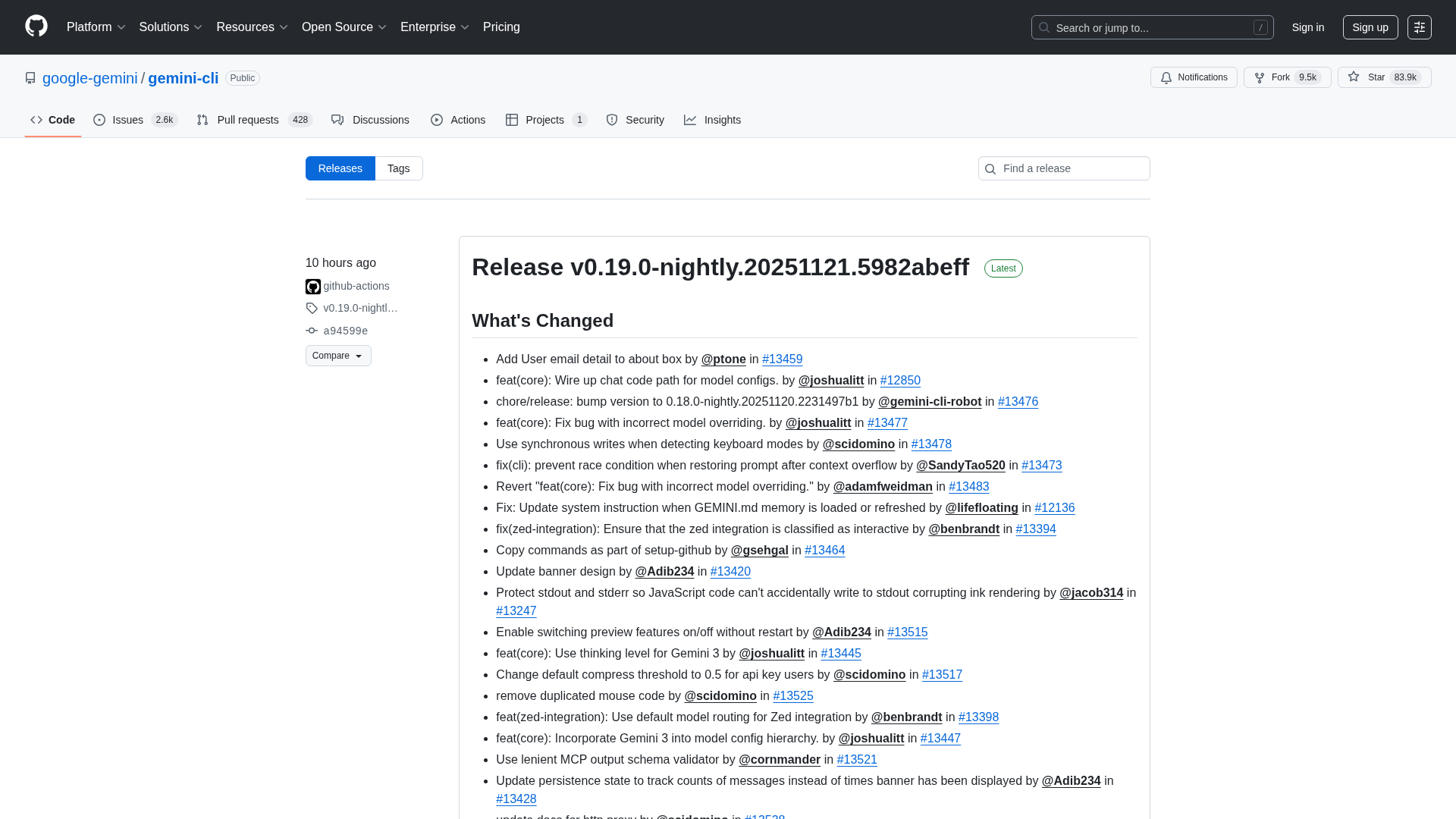

Overview of the Gemini CLI v0.36.0-preview release series, highlighting architectural, CLI, and UI changelogs across multiple pre-release versions.

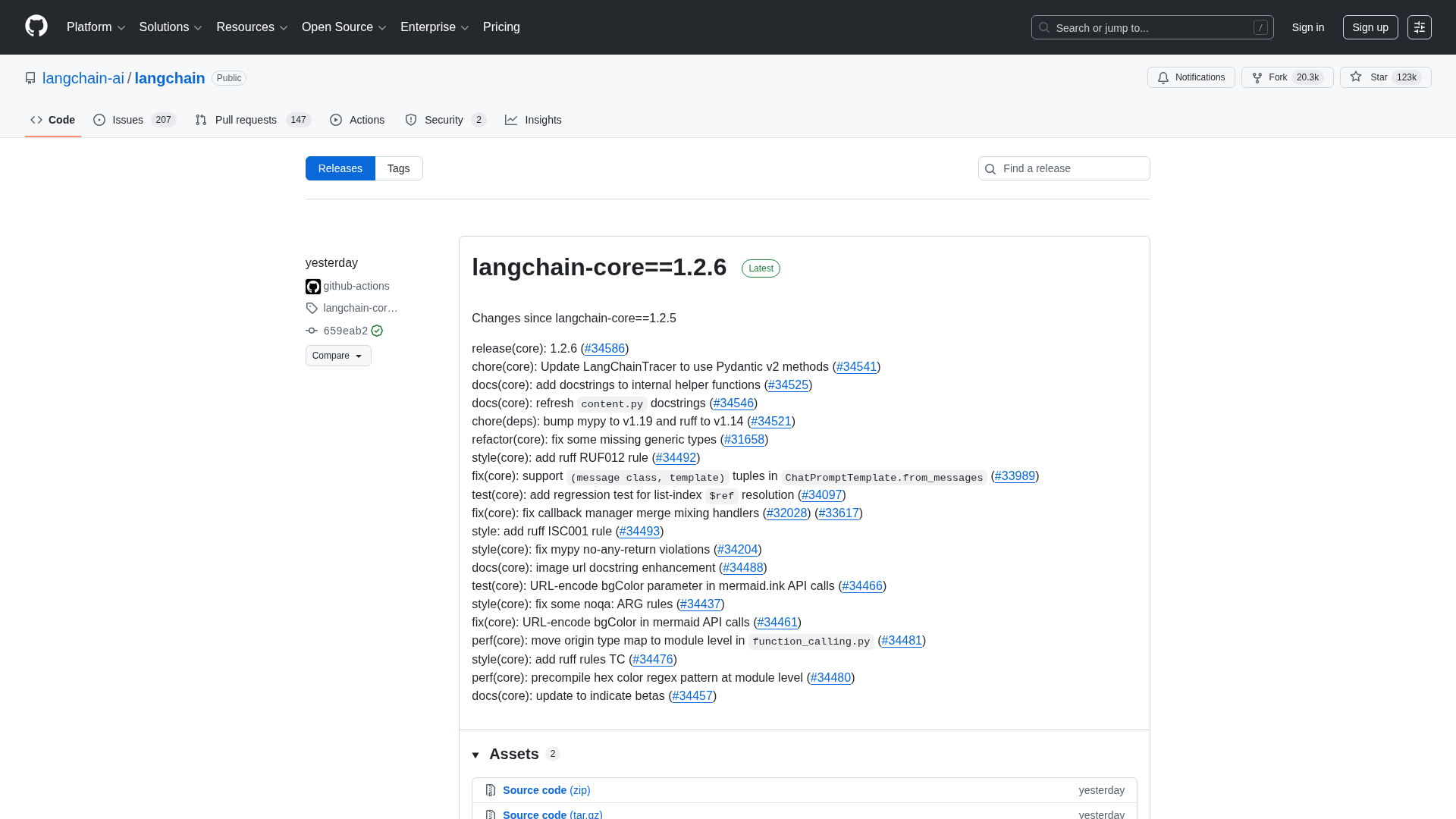

A comprehensive LangChain releases roundup detailing Core 1.2.6 and interconnected updates across XAI, OpenAI, Classic, and tests.

Best practices for securing AI agents with identity management, delegated access, least privilege, and human oversight.

A practical, step-by-step guide to fine-tuning large language models with open-source NLP tools.