Topic Overview

This topic examines multi‑model enterprise research assistants: platforms that combine large language and multimodal models, retrieval‑augmented search, and agent orchestration to support complex research, coding, and data analysis workflows. As of 2026‑04‑06, organizations increasingly evaluate offerings like Microsoft Critique alongside rival Copilot and agent systems and specialized enterprise stacks. Key differences center on model families, integration points, governance, and observability.

The enterprise tool categories involved include agent frameworks and automation platforms (for composing and supervising multi‑step agents), AI agent and tool marketplaces (for sourcing prebuilt agents and connectors), and AI research tools (for interactive exploration and reproducible workflows). Representative tools include GitHub Copilot (developer‑focused code completions, Copilot Chat, and editor/terminal agent workflows); Google Gemini (multimodal model family and developer APIs); IBM watsonx Assistant (no‑code and developer options for enterprise virtual agents and multi‑agent orchestration); Anthropic's Claude family (conversational and developer assistants tuned for analysis); Yellow.ai (CX/EX agentic automation); infrastructure and observability offerings like Xilos; and specialist platforms such as Qodo for code quality and AskCodi for multi‑provider model routing.

The practical tradeoffs organizations face include model capability versus control, multimodal and one‑to‑many (o2m) data handling, integration with internal knowledge stores, compliance and audit trails, and the ability to compose, monitor, and monetize agent bundles through marketplaces. Evaluations should weigh data governance, reproducibility, and latency and cost profiles as heavily as raw model performance. In this competitive landscape, decisions hinge on how well a platform connects enterprise data, enforces policies, and operationalizes multi‑model agent workflows for research and engineering teams.
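To make the routing and governance tradeoffs above more concrete, here is a minimal sketch of policy‑constrained multi‑model routing. It is hypothetical throughout: it does not reflect the API of AskCodi or any other platform named here, and the class names (`ModelOption`, `Policy`), the `route` function, the model names, scores, prices, latencies, and regions are all invented placeholders. The sketch filters a catalog by data residency, a latency budget, and a minimum capability score, picks the cheapest eligible model, and appends an audit record of the decision.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    """One candidate model in a hypothetical multi-provider catalog."""
    name: str              # placeholder identifier, not a real model
    capability: float      # placeholder quality score in [0, 1]
    cost_per_1k: float     # placeholder price per 1k tokens
    p95_latency_ms: int    # placeholder 95th-percentile latency
    region: str            # where requests are processed (data residency)


@dataclass(frozen=True)
class Policy:
    """Hypothetical governance and performance constraints for one workload."""
    allowed_regions: frozenset[str]
    max_latency_ms: int
    min_capability: float


def route(options: list[ModelOption], policy: Policy, audit_log: list[dict]) -> ModelOption | None:
    """Return the cheapest model that satisfies every policy constraint, or None."""
    # Hard constraints first: residency, latency budget, minimum capability.
    eligible = [
        m for m in options
        if m.region in policy.allowed_regions
        and m.p95_latency_ms <= policy.max_latency_ms
        and m.capability >= policy.min_capability
    ]
    # Cheapest first; among equal costs, prefer the more capable model.
    choice = min(eligible, key=lambda m: (m.cost_per_1k, -m.capability)) if eligible else None
    # Record what was chosen and what was excluded so the decision can be audited later.
    audit_log.append({
        "chosen": choice.name if choice else None,
        "rejected": [m.name for m in options if m not in eligible],
    })
    return choice


# Invented example catalog; all numbers are illustrative only.
CATALOG = [
    ModelOption("model-a", 0.90, 0.015, 1200, "eu"),
    ModelOption("model-b", 0.80, 0.002, 400, "us"),
    ModelOption("model-c", 0.75, 0.001, 300, "eu"),
]

if __name__ == "__main__":
    log: list[dict] = []
    eu_policy = Policy(frozenset({"eu"}), max_latency_ms=1000, min_capability=0.7)
    picked = route(CATALOG, eu_policy, log)
    print(picked.name if picked else "no eligible model")
    print(log[-1])
```

With the EU‑only policy, `model-a` is excluded by the latency budget and `model-b` by residency, so the sketch prints `model-c` and an audit entry listing both rejections. Applying governance rules as hard filters before optimizing on cost keeps them non‑negotiable; a production router would also need fallback, retry, and per‑request observability, which this sketch omits.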

Tool Rankings – Top 6

GitHub Copilot: An AI pair programmer that provides code completions, chat help, and autonomous agent workflows across editors and the terminal.

Google Gemini: Google's multimodal family of generative AI models and APIs for developers and enterprises.

IBM watsonx Assistant: Enterprise virtual agents and AI assistants built with watsonx LLMs for no-code and developer-driven automation.

Claude: Anthropic's Claude family of conversational and developer AI assistants for research, writing, code, and analysis.

Yellow.ai: Enterprise agentic AI platform for CX and EX automation, building autonomous, human-like agents across channels.

Xilos: Intelligent agentic AI infrastructure.

Latest Articles (106)

A vendor‑agnostic guide to the 14 best AI governance platforms in 2025, with criteria, comparisons, and practical buying guidance.

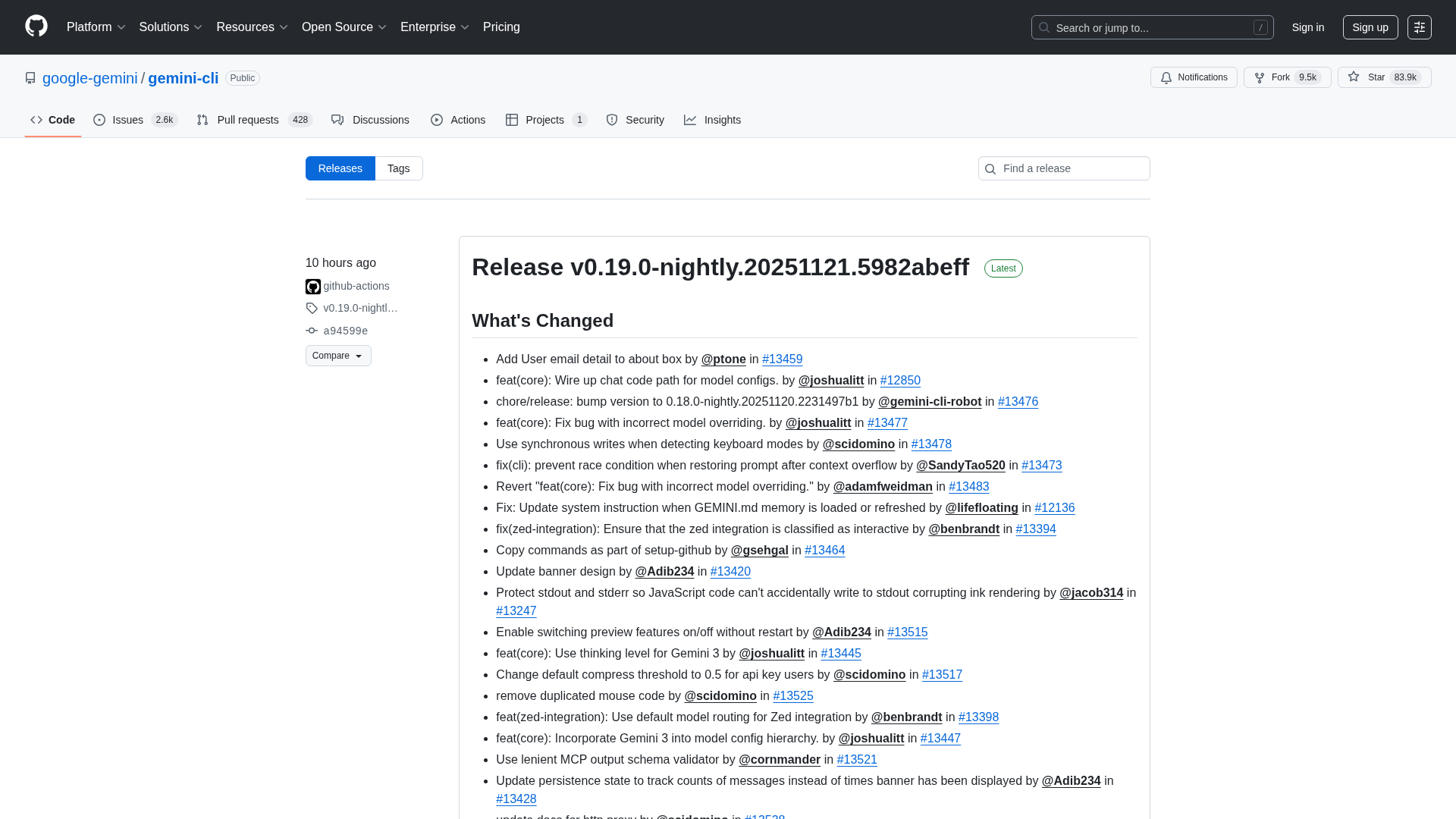

Overview of the Gemini CLI v0.36.0-preview release series, highlighting architectural, CLI, and UI changelogs across multiple pre-release versions.

OpenAI's bypass moment underscores the need for governance that holds up when users inevitably bypass it, backed by hardened system controls.

A call to enable safe AI use at work via sanctioned access, real-time data protections, and frictionless governance.

Explores the human role behind AI automation and how Bell Cyber tackles AI hallucinations in security operations.