Topic Overview

This topic examines how enterprises and builders choose compute and cloud partners to scale large language models (LLMs) and agent systems. It contrasts capacity options from Anthropic, Google Cloud, and infrastructure vendors such as Broadcom, in the context of decentralized AI infrastructure and AI data platforms. As model sizes, multimodal workloads, and real-time agent demands continue to grow in 2026, teams must balance throughput, latency, cost, data residency, and governance when placing training and inference workloads across cloud, on-prem, and edge environments.

The key software and platform roles are: orchestration and agent frameworks (LangChain) for building, testing, and deploying reliable LLM agents; retrieval and document-agent platforms (LlamaIndex) for production RAG; AWS-focused DevOps for AI (StationOps) to automate deployments and observability; no-code/low-code design and operations (MindStudio) for rapid prototyping with enterprise controls; and developer productivity and IDE assistants (JetBrains AI Assistant, Amazon CodeWhisperer/Amazon Q Developer, Tabnine, Replit) to accelerate integration and app delivery. These tools plug into compute choices: model hosting and managed inference from Anthropic and Google Cloud, plus the underlying networking and accelerator capacity and DPUs from vendors like Broadcom, which influence throughput and co-location decisions.

The practical tradeoffs in 2026 center on four questions: whether to select managed or co-located capacity for compliance and latency; whether to use decentralized or hybrid stacks to reduce egress and costs; how to integrate vector DBs and RAG pipelines for data efficiency; and how to adopt platform-level observability and governance to meet regulatory and reliability requirements. This overview helps teams map which compute partner and supporting tools best fit their workloads, operational readiness, and data governance objectives.

Tool Rankings – Top 6

LangChain: An open-source framework and platform to build, observe, and deploy reliable AI agents.

StationOps: The AI DevOps Engineer for AWS

LlamaIndex: Developer-focused platform to build AI document agents, orchestrate workflows, and scale RAG across enterprises.

Amazon CodeWhisperer: AI-driven coding assistant (now integrated with/rolling into Amazon Q Developer) that provides inline code suggestions,

MindStudio: No-code/low-code visual platform to design, test, deploy, and operate AI agents rapidly, with enterprise controls and a

JetBrains AI Assistant: In-IDE AI copilot for context-aware code generation, explanations, and refactorings.

Latest Articles (43)

A concise look at how an internal developer platform on AWS accelerates delivery with governance and self-service.

A managed internal developer platform for AWS that simplifies provisioning, deployment, and governance to accelerate software delivery.

An AWS-centric internal developer platform comparison between StationOps and Netlify.

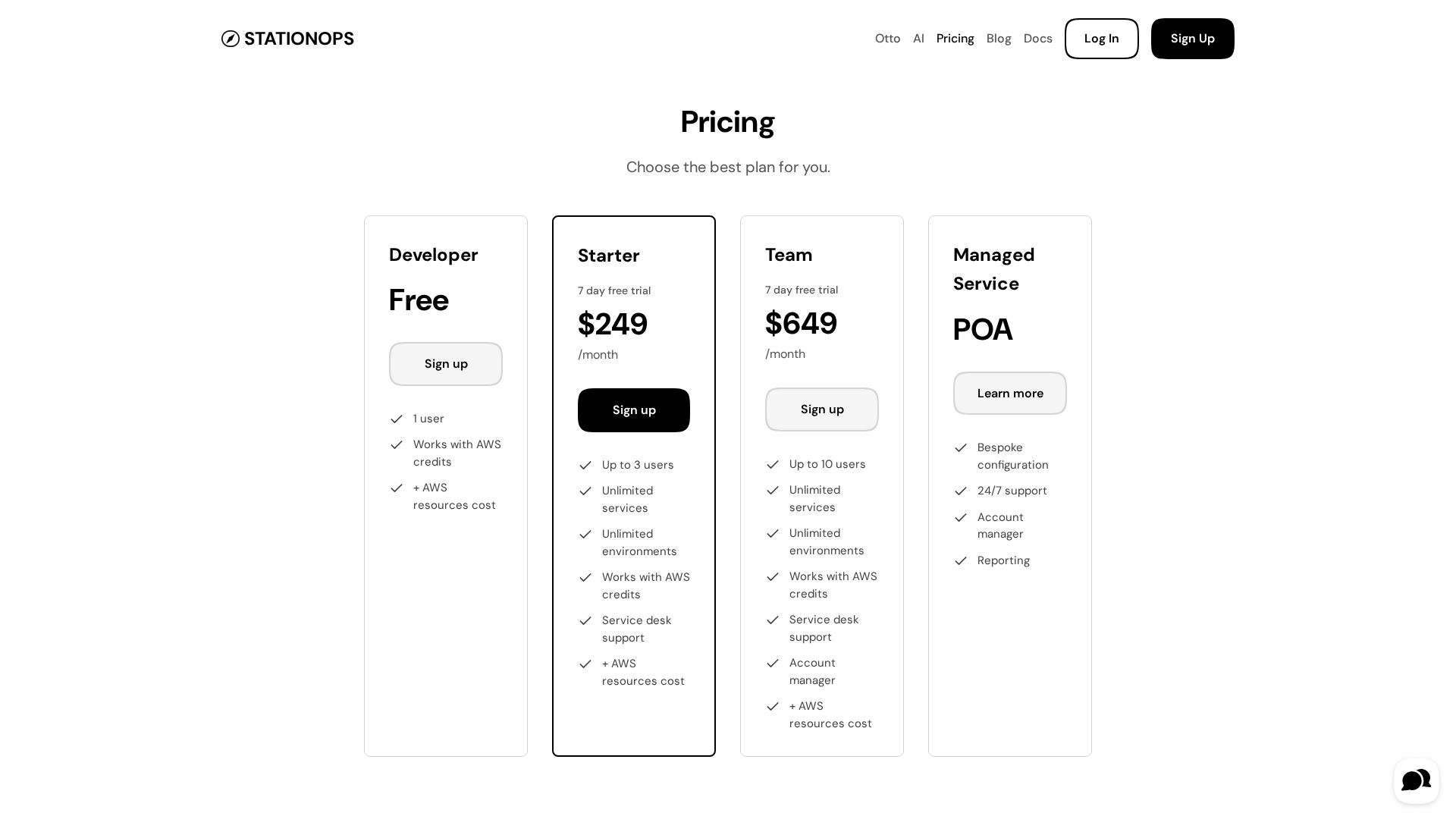

Pricing details for StationOps' AWS Internal Developer Platform, including tiers and features.

A guided look at StationOps' internal developer platform for AWS, which enables governed, self-serve environments at scale.