Topic Overview

This topic covers the tools, workflows, and governance needed to identify, test, and mitigate malicious or unsafe outputs from generative AI systems. By 2026 the rapid adoption of LLMs and agentic platforms has increased exposure to prompt‑based exploits, model jailbreaks, data‑poisoning, and downstream misuse, making integrated red‑teaming and model‑hardening part of routine MLOps and AI security governance. Practical techniques include automated adversarial testing, continuous regression testing, adversarial fine‑tuning, retrieval‑augmented safety filters, prompt‑and‑response sanitization, runtime monitoring, and incident response playbooks. Key tool categories supporting these capabilities are: AI Security Governance (policy, audit trails, and compliance), GenAI Test Automation (automated red‑team test suites and evaluation metrics), and AI Test Automation (CI/CD for models and agents). Several platforms and frameworks illustrate current approaches: LangChain provides developer SDKs and orchestration patterns for building and observing agent behavior; Vertex AI offers end‑to‑end managed model life‑cycle features for training, evaluation, and deployment at scale; Cohere supplies enterprise LLMs and embeddings for controlled, private model hosting; StackAI targets no‑code/low‑code enterprise teams to build, deploy, and govern agents; Observe.AI and Yellow.ai focus on conversational and agentic deployments where runtime safety, real‑time assist, and post‑interaction QA are critical; Google Gemini represents the multimodal model families that these practices must secure. The landscape is moving toward integrated toolchains that embed adversarial testing and observability into deployment pipelines, combined with clearer governance requirements and standardized evaluation metrics to reduce misuse while enabling responsible GenAI adoption.

Tool Rankings – Top 6

Enterprise conversation-intelligence and GenAI platform for contact centers: voice agents, real-time assist, auto QA, &洞

End-to-end no-code/low-code enterprise platform for building, deploying, and governing AI agents that automate work onun

An open-source framework and platform to build, observe, and deploy reliable AI agents.

Unified, fully-managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

Enterprise-focused LLM platform offering private, customizable models, embeddings, retrieval, and search.

Enterprise agentic AI platform for CX and EX automation, building autonomous, human-like agents across channels.

Latest Articles (75)

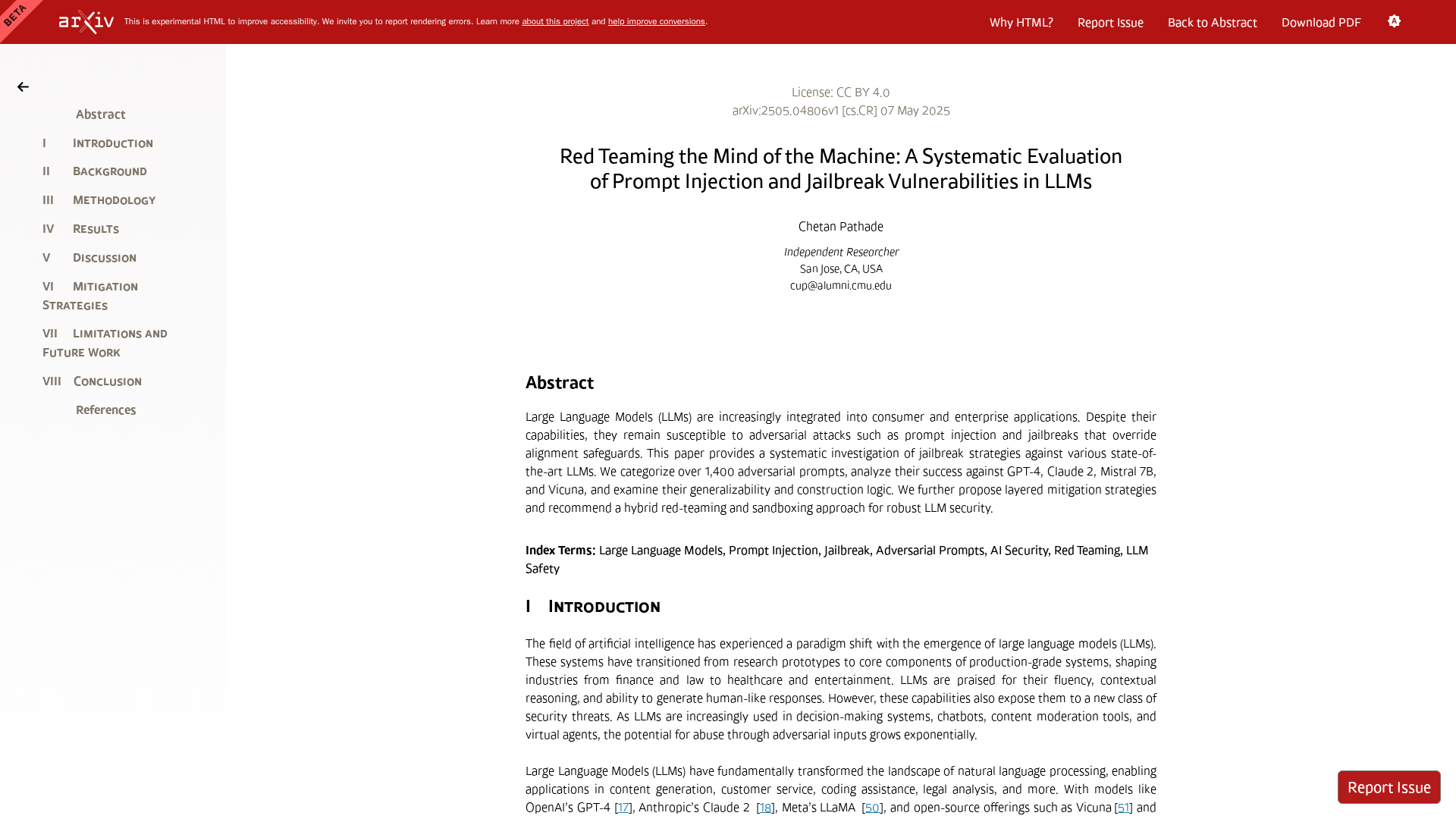

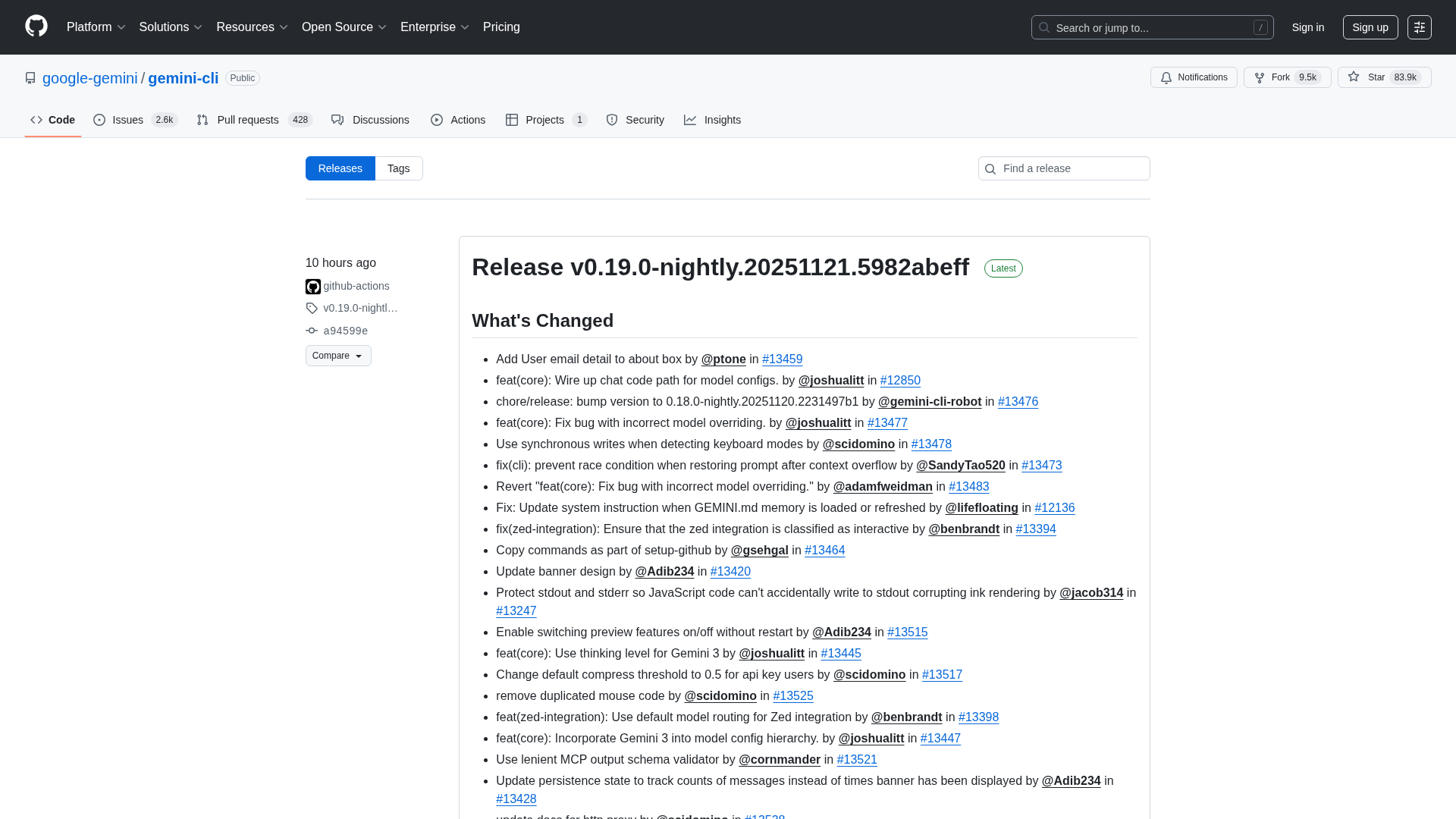

Overview of the Gemini CLI v0.36.0-preview release series, highlighting architectural, CLI, and UI changelogs across multiple pre-release versions.

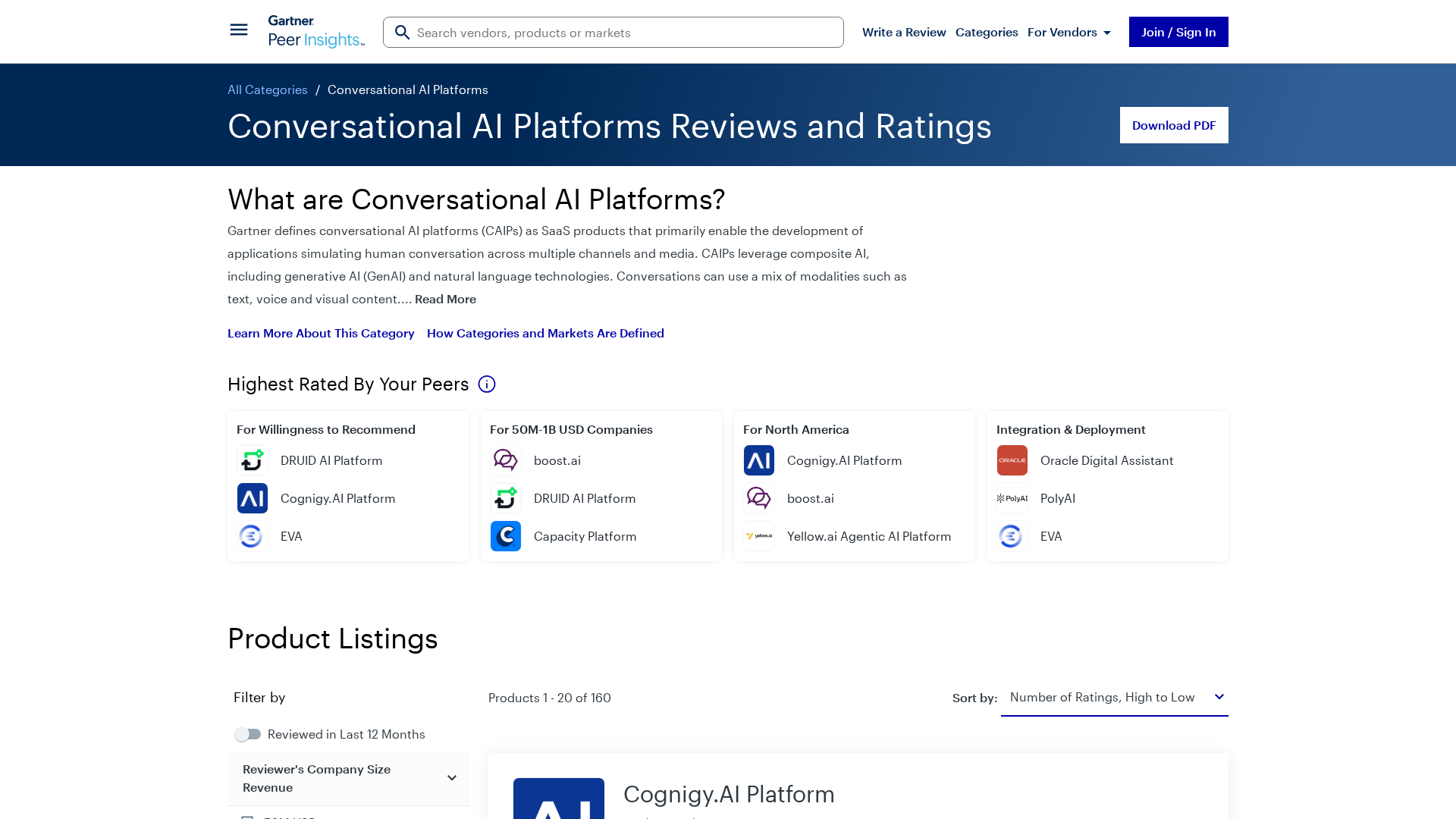

Gartner’s market view on conversational AI platforms, outlining trends, vendors, and buyer guidance.

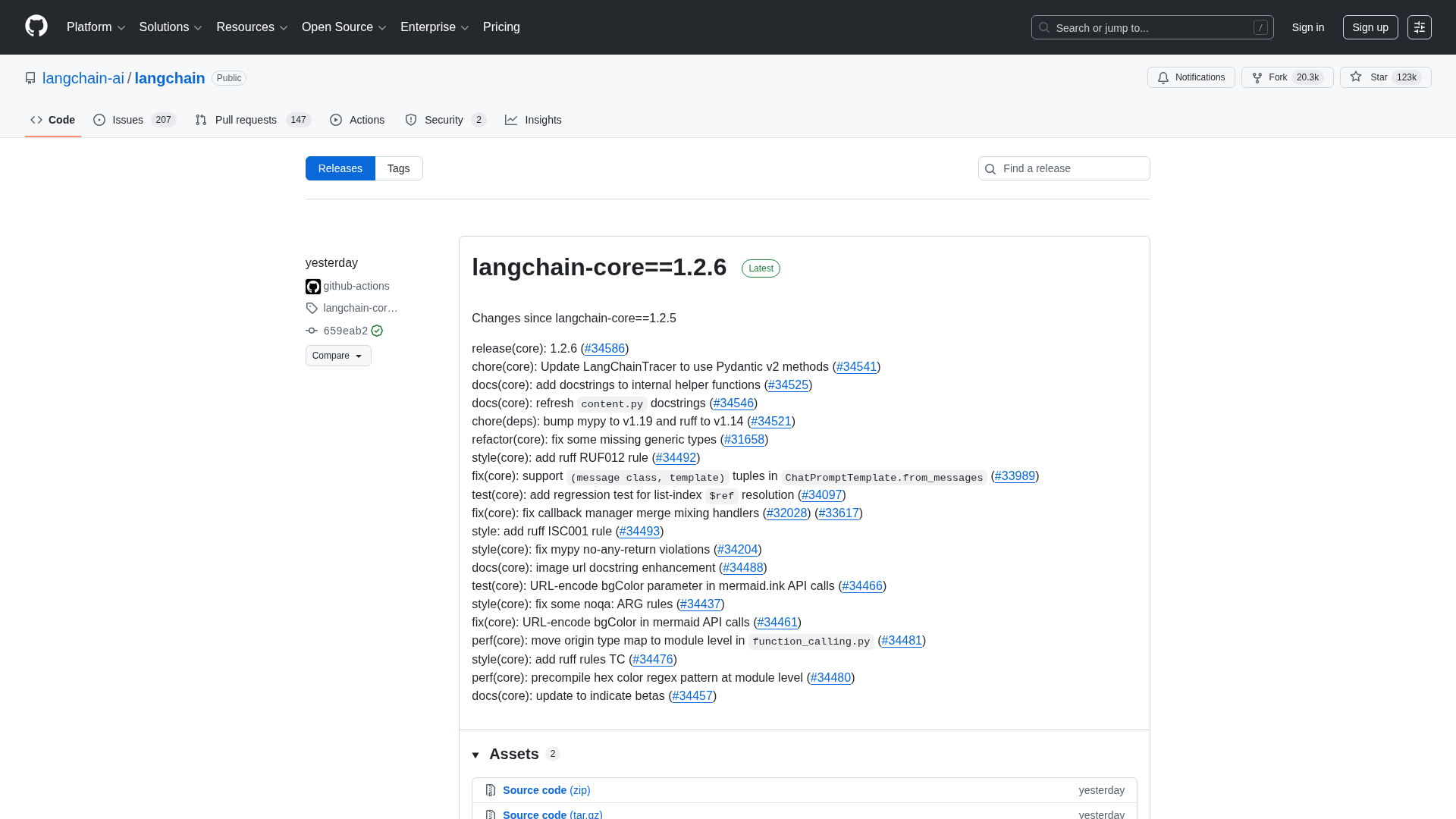

A comprehensive LangChain releases roundup detailing Core 1.2.6 and interconnected updates across XAI, OpenAI, Classic, and tests.

A reproducible bug where LangGraph with Gemini ignores tool results when a PDF is provided, even though the tool call succeeds.

A CLI tool to pull LangSmith traces and threads directly into your terminal for fast debugging and automation.