Topic Overview

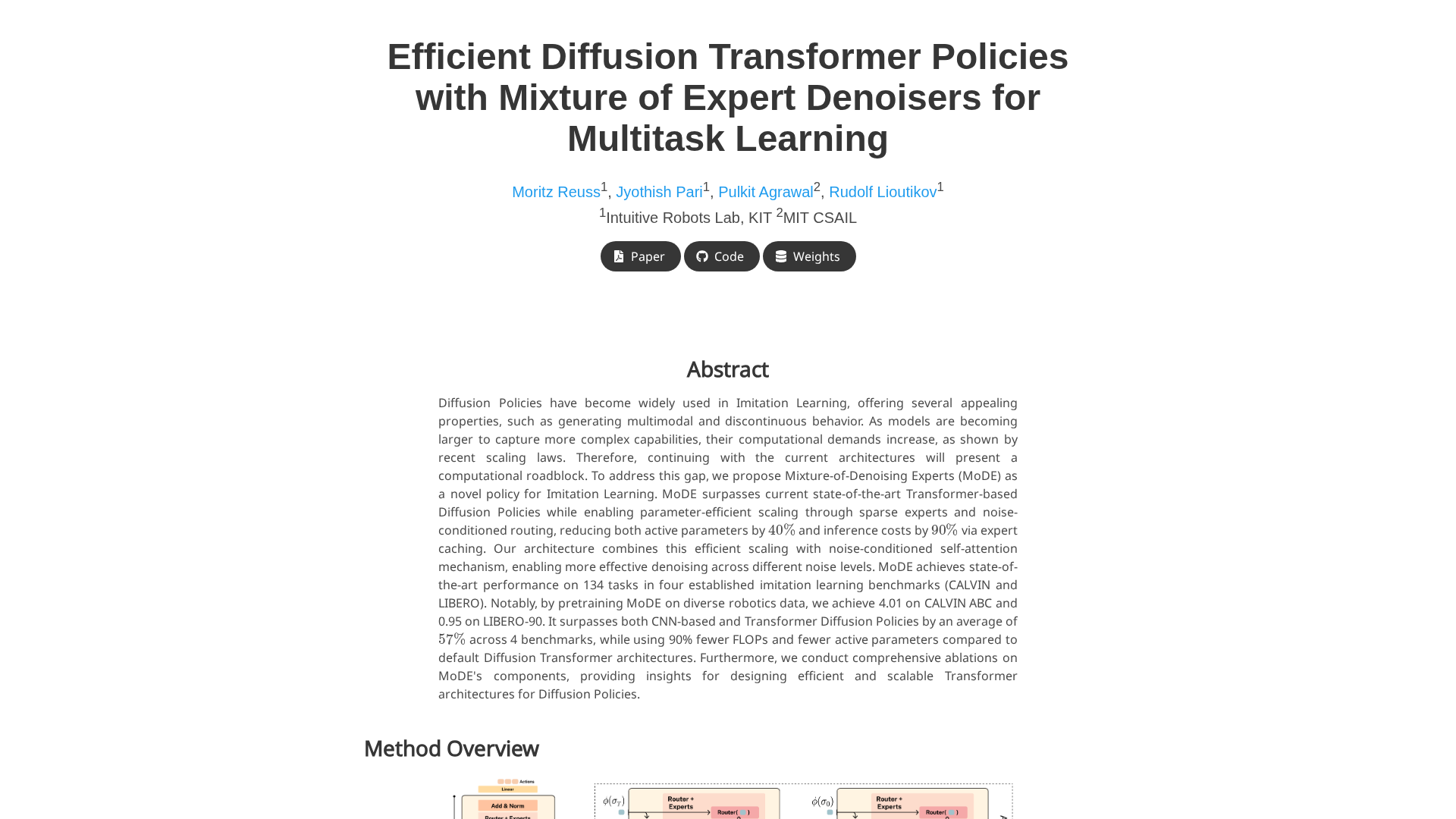

This topic compares diffusion-based reasoning methods and transformer-based approaches for real-time applications, with a focus on practical trade-offs, deployment patterns, and test automation. Diffusion methods, originally developed for iterative generative synthesis, are being adapted as reasoning primitives that refine outputs through multiple denoising steps, which can improve multimodal planning and robustness for iterative tasks. Transformers (autoregressive and encoder-decoder LLMs) remain the dominant choice for low-latency text generation, streaming responses, and retrieval-augmented workflows thanks to mature quantization, sparse/efficient attention variants, and broad hardware support.

Why it matters in 2026: real-time services (coding assistants, interactive search, autonomous agents) demand predictable latency, deterministic behavior, and integrated safety checks. Diffusion variants have narrowed the latency gap via step reduction, cascades, and optimized kernels, making them viable for certain planning and multimodal refinement tasks. However, transformers still offer superior single-pass throughput, easier streaming, and simpler integration into RAG and agent stacks.

Key tools and roles: LlamaIndex enables production RAG and document agents that pair retrieval with either transformer or iterative models; AutoGPT and AgentGPT provide autonomous agent orchestration for workflow automation and are useful testbeds for real-time agent behavior; Windsurf (Codeium) offers an AI-native IDE and multi-model agent support for developer workflows; Ockto Chat provides model switching and A/B testing across 300+ models; Phind targets developer multimodal search and interactive results. These platforms are useful for GenAI test automation: benchmarking latency, consistency, and safety across model classes.

Recommendation: use transformers for strict low‑latency interactive services and RAG pipelines; consider diffusion‑based or hybrid pipelines for tasks that benefit from iterative refinement or multimodal planning. In practice, orchestrate and benchmark both families using agent platforms and automated tests to validate latency, determinism, and safety for production real‑time systems.
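
The benchmarking step recommended above can be sketched as a small Python harness. This is a minimal illustration under stated assumptions, not a production tool: `benchmark`, `single_pass_model`, and `make_iterative_model` are hypothetical names, and the two stand-in models only imitate a single-pass call and a multi-step refinement loop. In practice each stand-in would be replaced by a real client call to the model under test.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def benchmark(model: Callable[[str], str], prompts: List[str], runs: int = 5) -> Dict[str, float]:
    """Measure per-call latency and how often repeated calls agree exactly."""
    if not prompts or runs < 1:
        raise ValueError("need at least one prompt and one run")
    latencies_ms: List[float] = []
    stable_prompts = 0
    for prompt in prompts:
        outputs = set()
        for _ in range(runs):
            start = time.perf_counter()
            outputs.add(model(prompt))
            latencies_ms.append((time.perf_counter() - start) * 1000.0)
        # A prompt counts as deterministic only if every run gave the same text.
        if len(outputs) == 1:
            stable_prompts += 1
    latencies_ms.sort()
    # Nearest-rank 95th percentile over all timed calls.
    p95_index = max(0, math.ceil(0.95 * len(latencies_ms)) - 1)
    return {
        "p50_ms": statistics.median(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
        "determinism_rate": stable_prompts / len(prompts),
    }


def single_pass_model(prompt: str) -> str:
    # Hypothetical stand-in for a transformer-style single forward pass.
    return prompt.strip().lower()


def make_iterative_model(steps: int) -> Callable[[str], str]:
    # Hypothetical stand-in for a diffusion-style model that refines a draft
    # over a fixed number of steps; cost grows with `steps`.
    def model(prompt: str) -> str:
        draft = prompt
        for _ in range(steps):
            draft = draft.strip().lower()
        return draft
    return model


if __name__ == "__main__":
    prompts = ["Summarize the release notes", "Plan a three-step refactor"]
    for name, model in [
        ("single-pass", single_pass_model),
        ("iterative-8", make_iterative_model(8)),
    ]:
        print(name, benchmark(model, prompts))
```

Reporting a tail percentile alongside the median reflects the emphasis on predictable latency, and the exact-match determinism check is deliberately strict; a real test suite might relax it to a semantic comparison.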

Tool Rankings – Top 6

LlamaIndex: Developer-focused platform to build AI document agents, orchestrate workflows, and scale RAG across enterprises.

AutoGPT: Platform to build, deploy, and run autonomous AI agents and automation workflows (self-hosted or cloud-hosted).

AgentGPT: A browser-based platform to create and deploy autonomous AI agents with simple goals.

Windsurf (Codeium): AI-native IDE and agentic coding platform (Windsurf Editor) with Cascade agents, live previews, and multi-model support.

Ockto Chat: Chat with multiple AI models at once.

Phind: AI-powered search for developers that returns visual, interactive, and multimodal answers focused on coding queries.

Latest Articles (33)

An all-in-one platform orchestrating conversations across multiple AI models in a single interface.

A quick preview of Gemini 2.5 Pro's model card and its potential impact on multi-AI chat platforms.

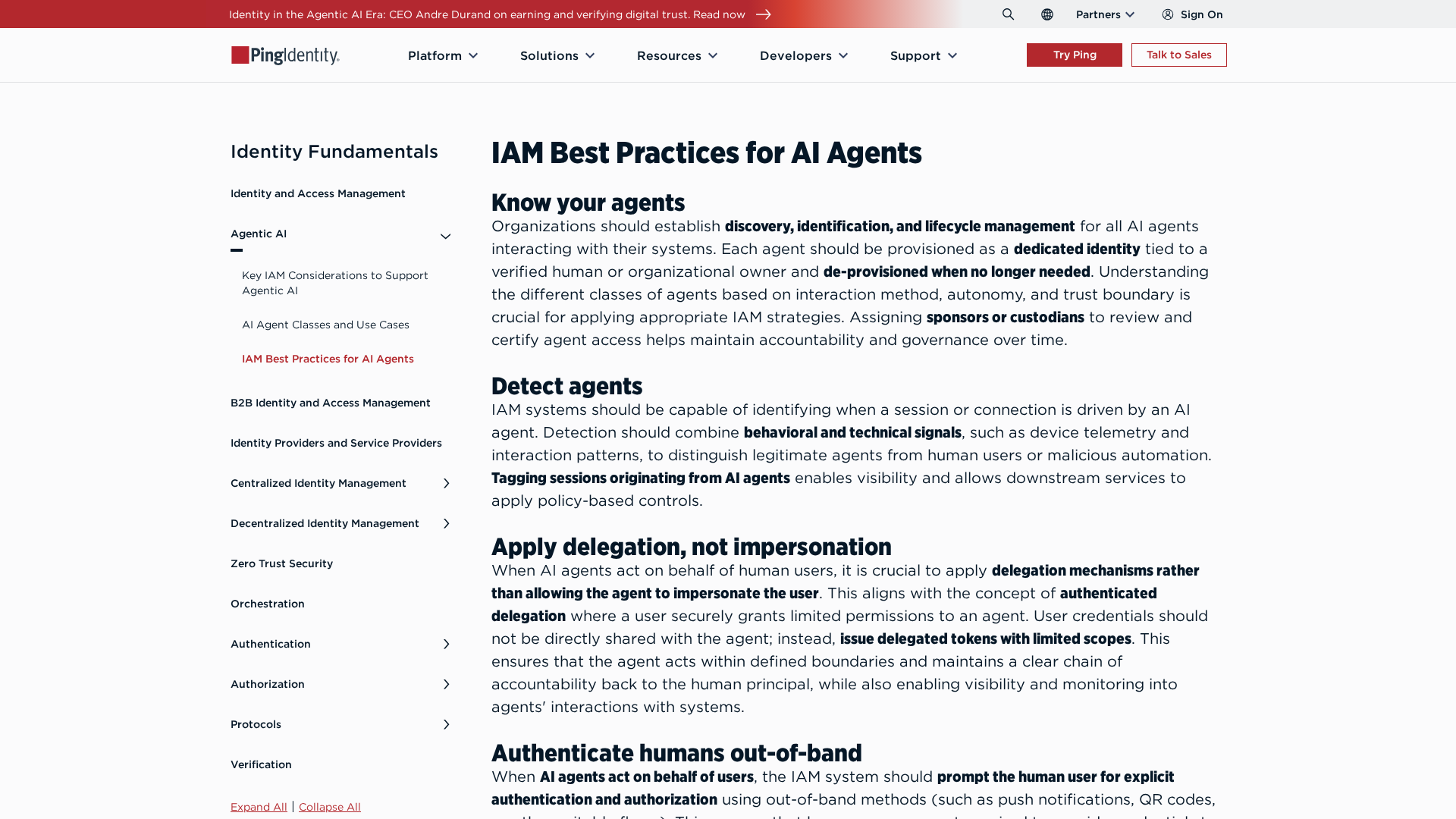

Best practices for securing AI agents with identity management, delegated access, least privilege, and human oversight.

Google expands Canvas travel planning, global Flight Deals, and agentic booking to handle travel research and reservations inside Search AI Mode.

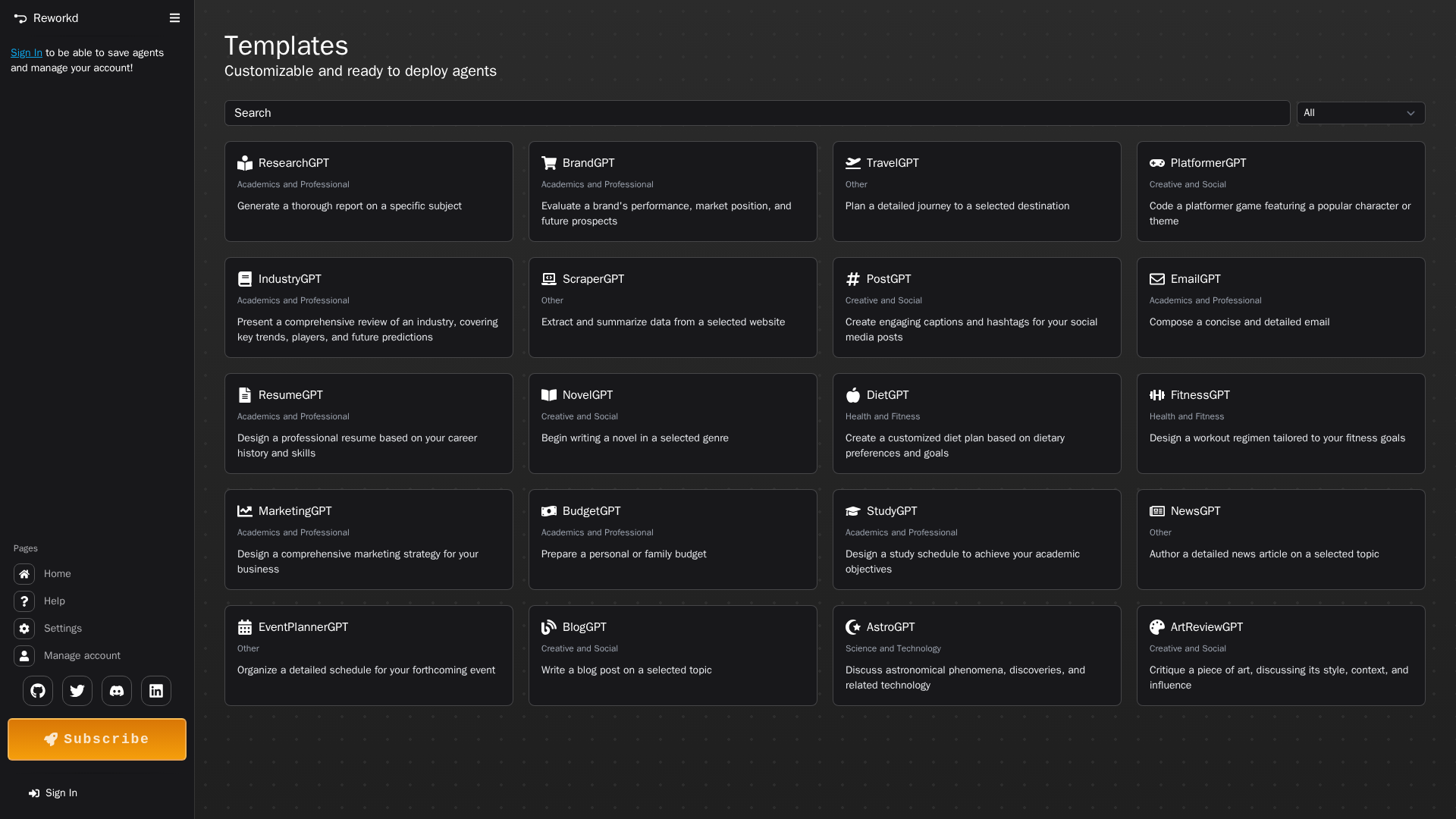

A catalog of customizable in-browser AI agents ready to deploy for research, planning, creativity, health, and more.