Topic Overview

This topic covers end-to-end training platforms for self-improving AI agents and compares Prime Intellect Lab with alternative stacks across AI Automation, Data, Agent Frameworks, test automation, and marketplaces. Platforms in this space bundle model training and fine-tuning, data curation, continuous evaluation, and deployment so that agents can iterate on their behavior in production. That makes them central to organizations moving from single-model inference to autonomous, continuously evolving agents.

As of 2026-05-09, three operational trends drive the space:

- scalable compute and serverless inference for rapid iteration (e.g., Together AI's GPU acceleration and serverless APIs);
- automated data pipelines that produce model-ready datasets (DatologyAI's curation-as-a-service); and
- no-code/low-code agent builders for enterprise workflows and governance (StackAI).

Quality and safety tooling is also converging with development workflows. Qodo (formerly Codium) focuses on context-aware code review, automated test generation, and SDLC governance, while GenAI test automation is increasingly required to validate agent behavior. Foundational and developer models (Code Llama, Claude) and developer assistants (GitHub Copilot) supply the model and coding building blocks that feed and accelerate agent development.

Evaluations of Prime Intellect Lab against alternatives should therefore consider end-to-end training throughput, data curation and label quality, automated testing and safety tooling, deployment and inference scalability, governance and traceability, and integration with agent marketplaces. This synthesis frames the practical tradeoffs (speed vs. control, turnkey agent creation vs. custom training pipelines) that teams must weigh when choosing a platform for production, self-improving agents.
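A weighted decision matrix can make the tradeoffs described above explicit. The following is a minimal Python sketch. It assumes the team rates each candidate from 0 to 5 on the six criteria listed. The criterion keys, weight profiles, and candidate ratings are all hypothetical placeholders. They are not measurements of Prime Intellect Lab or any other product.

```python
from dataclasses import dataclass

# The six evaluation criteria named in the overview, as hypothetical keys.
CRITERIA = (
    "training_throughput",
    "data_curation_quality",
    "testing_and_safety",
    "inference_scalability",
    "governance_traceability",
    "marketplace_integration",
)


@dataclass(frozen=True)
class Candidate:
    name: str
    scores: dict  # criterion key -> team rating on a 0-5 scale


def weighted_score(candidate: Candidate, weights: dict) -> float:
    """Weighted average of a candidate's ratings across all CRITERIA."""
    missing = [c for c in CRITERIA if c not in candidate.scores]
    if missing:
        raise ValueError(f"{candidate.name} has no rating for: {missing}")
    total_weight = sum(weights[c] for c in CRITERIA)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[c] * candidate.scores[c] for c in CRITERIA) / total_weight


def rank(candidates, weights):
    """Sort candidates from highest to lowest weighted score."""
    return sorted(candidates, key=lambda c: weighted_score(c, weights), reverse=True)


# Hypothetical weight profiles for the "speed vs. control" tradeoff.
SPEED_WEIGHTS = {
    "training_throughput": 3, "data_curation_quality": 1, "testing_and_safety": 1,
    "inference_scalability": 3, "governance_traceability": 1, "marketplace_integration": 1,
}
CONTROL_WEIGHTS = {
    "training_throughput": 1, "data_curation_quality": 3, "testing_and_safety": 2,
    "inference_scalability": 1, "governance_traceability": 3, "marketplace_integration": 1,
}

# Illustrative ratings only; they do not describe any real product.
turnkey = Candidate("turnkey-stack", {
    "training_throughput": 4, "data_curation_quality": 3, "testing_and_safety": 3,
    "inference_scalability": 5, "governance_traceability": 2, "marketplace_integration": 4,
})
custom = Candidate("custom-pipeline", {
    "training_throughput": 3, "data_curation_quality": 5, "testing_and_safety": 4,
    "inference_scalability": 3, "governance_traceability": 5, "marketplace_integration": 2,
})

if __name__ == "__main__":
    for label, weights in (("speed", SPEED_WEIGHTS), ("control", CONTROL_WEIGHTS)):
        ordered = rank([turnkey, custom], weights)
        print(label, [(c.name, round(weighted_score(c, weights), 2)) for c in ordered])
```

Under the speed-weighted profile, the turnkey stack ranks first (3.9 vs. 3.4). Shifting weight toward curation, testing, and governance reverses the order (about 4.18 vs. 3.09). Because the ranking depends on the weights, it helps to agree on them before scoring any vendor.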

Tool Rankings – Top 6

A full-stack AI acceleration cloud for fast inference, fine-tuning, and scalable GPU training.

End-to-end no-code/low-code enterprise platform for building, deploying, and governing AI agents that automate work on…

Quality-first AI coding platform for context-aware code review, test generation, and SDLC governance across multi-repo, team…

Data-curation-as-a-service to train models faster, better, and smaller.

An AI pair programmer that gives code completions, chat help, and autonomous agent workflows across editors, the terminal…

Code-specialized Llama family from Meta optimized for code generation, completion, and code-aware natural-language tasks

Latest Articles (72)

Baseten launches an AI training platform to compete with hyperscalers, promising simpler, more transparent ML workflows.

A step-by-step guide to building an AI-powered Reliability Guardian that reviews code locally and in CI with Qodo Command.

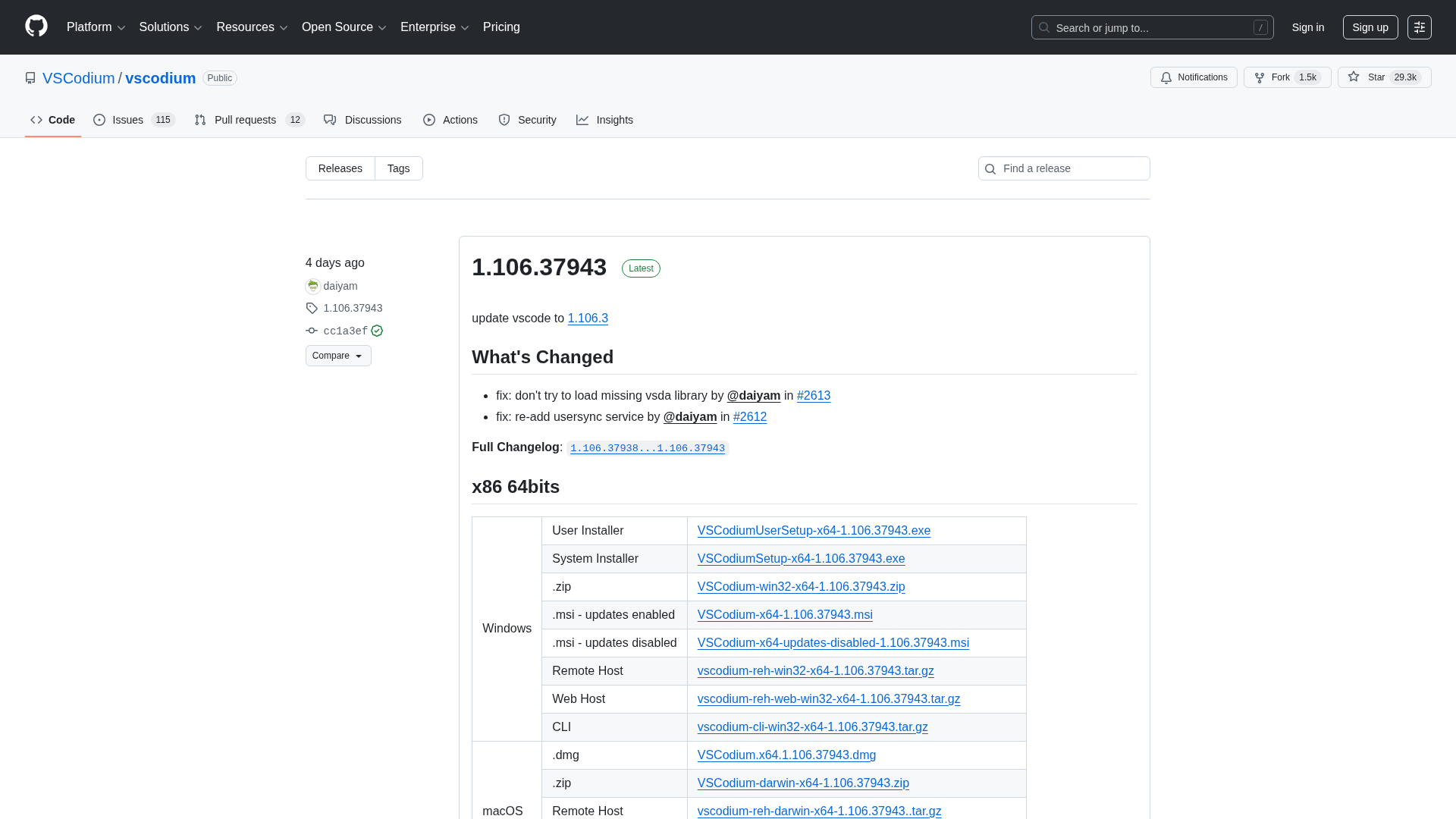

A comprehensive releases page for VSCodium with multi-arch downloads and versioned changelogs across 1.104–1.106 revisions.

A developer chronicles switching to Zed on Linux, prototyping on a phone, and a late-night video correction.

Gartner ranks Qodo highest for Codebase Understanding, highlighting cross-repo context as essential for scalable AI development.