Topic Overview

Enterprise Agent Development Platforms cover the end-to-end stack for building, running, and observing LLM-powered agents: vendor-supplied agent templates (e.g., Anthropic), cloud-managed agent services (Sage/AWS, Microsoft integrations), developer frameworks, IDE agents, and retrieval/observability tooling. The topic is timely in 2026 because organizations are moving beyond prototypes to production agents that require standard templates, multi-model orchestration, security, cost control, and SDLC-grade governance.

Key categories include agent frameworks (LangChain for composing, testing, and deploying reliable agents), AI agent marketplaces and cloud platforms (Vertex AI for unified model discovery, training, deployment, and monitoring), and AI automation platforms that operationalize workflows. Document- and RAG-focused tooling such as LlamaIndex turns unstructured content into retrieval-augmented agents, while enterprise LLM providers like Cohere supply private, customizable models and embeddings for secure inference and retrieval.

Developer tooling is shifting toward agentic workflows: Windsurf (AI-native IDE) and in-IDE copilots (GitHub Copilot, JetBrains AI Assistant) integrate agents into the coding flow, while quality-focused platforms such as Qodo enforce test generation and SDLC governance across repositories.

Practical enterprise concerns drive adoption: interoperability between SDKs and cloud services, observability and auditing of agent decisions, prompt and retrieval governance, and multi-model routing for cost/latency trade-offs. Organizations adopting Anthropic templates, Sage/AWS, or Microsoft integrations gain prebuilt orchestration patterns but must pair them with frameworks (LangChain, LlamaIndex) and governance layers (testing, monitoring, private models) to meet compliance and reliability needs.

In short, the space now emphasizes composable, auditable agent stacks that combine cloud-managed services, developer-centric frameworks, and production-grade tooling for secure, maintainable agent deployments.

Tool Rankings – Top 6

An open-source framework and platform to build, observe, and deploy reliable AI agents.

Unified, fully-managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

Developer-focused platform to build AI document agents, orchestrate workflows, and scale RAG across enterprises.

AI-native IDE and agentic coding platform (Windsurf Editor) with Cascade agents, live previews, and multi-model support.

Quality-first AI coding platform for context-aware code review, test generation, and SDLC governance across multi-repo, team...

An AI pair programmer that gives code completions, chat help, and autonomous agent workflows across editors, the terminal...

Latest Articles (60)

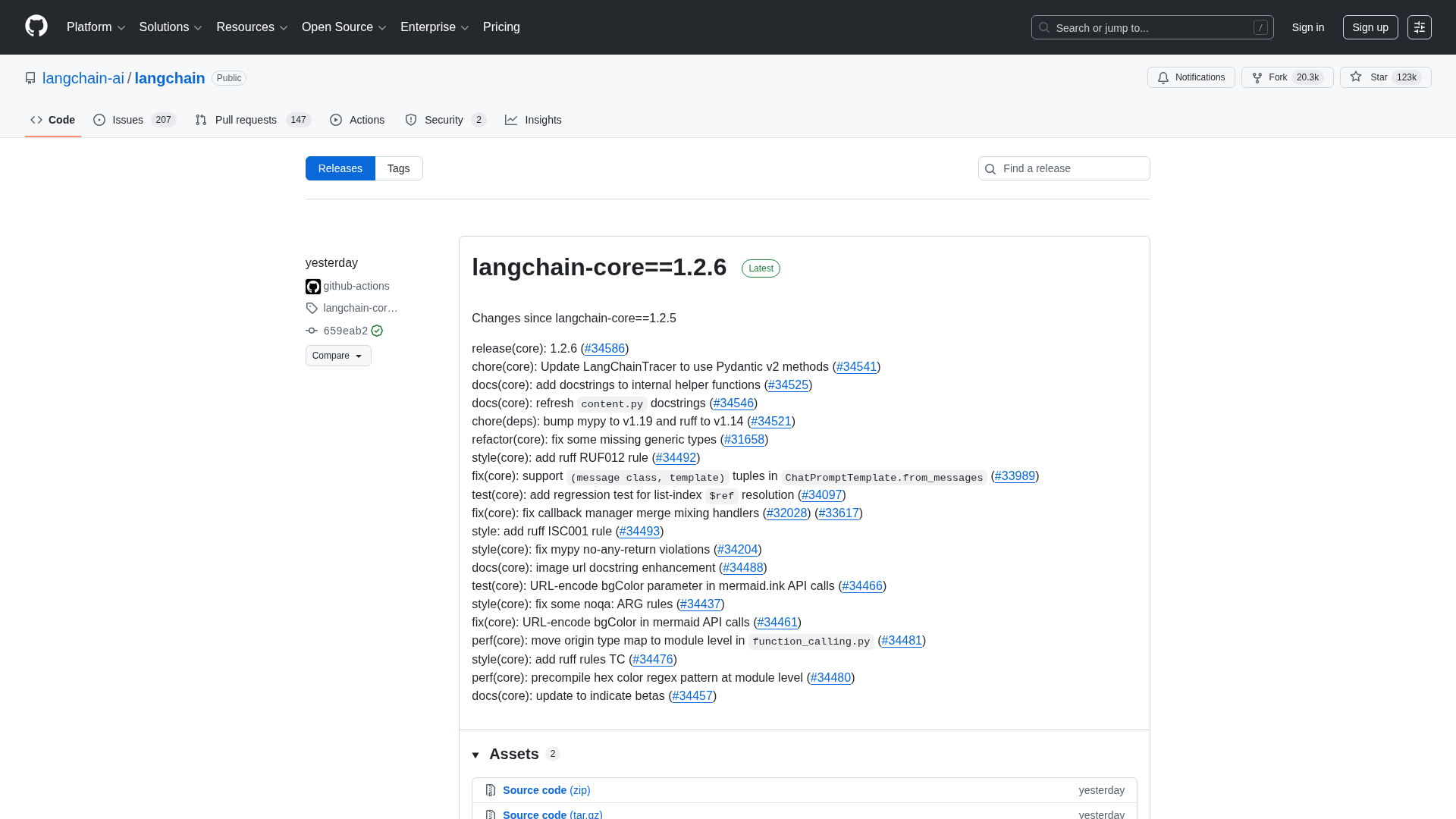

A comprehensive LangChain releases roundup detailing Core 1.2.6 and interconnected updates across XAI, OpenAI, Classic, and tests.

A reproducible bug where LangGraph with Gemini ignores tool results when a PDF is provided, even though the tool call succeeds.

A practical guide to debugging deep agents with LangSmith using tracing, Polly AI analysis, and the LangSmith Fetch CLI.

A CLI tool to pull LangSmith traces and threads directly into your terminal for fast debugging and automation.

A step-by-step guide to building an AI-powered Reliability Guardian that reviews code locally and in CI with Qodo Command.