Topic Overview

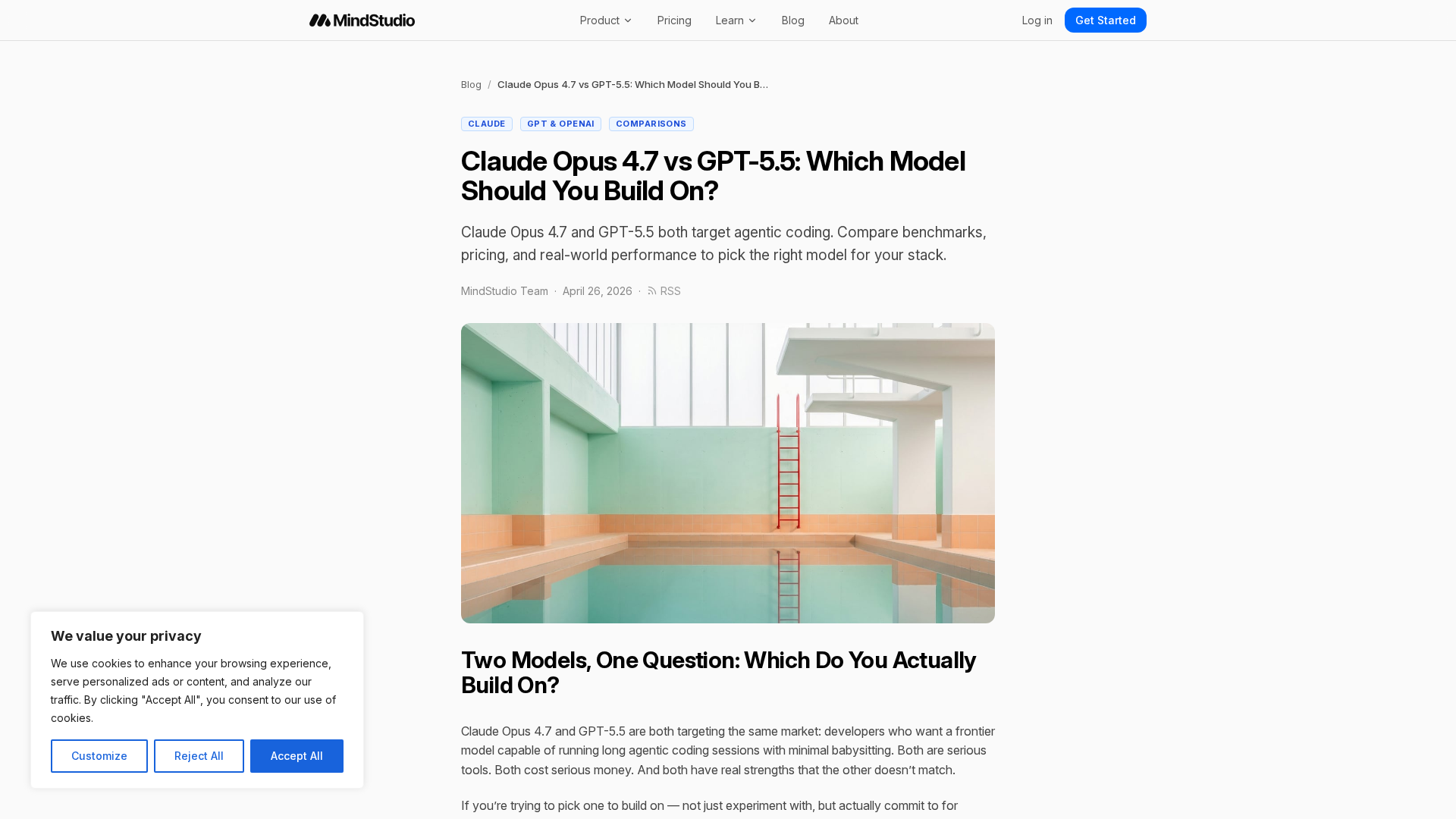

This topic covers how today’s frontier foundation models, exemplified by large proprietary families (e.g., OpenAI, Google/DeepMind) and a growing open-source ecosystem, are evaluated, integrated, and operationalized for AI research, competitive intelligence, and GenAI test automation. As organizations move from experimentation to production, choices about model family, specialization, deployment footprint, and orchestration increasingly determine developer productivity, cost, and compliance.

Key tools illustrate how models are used in practice: Cursor and GitHub Copilot embed AI across editing and agent workflows to speed development; Amazon CodeWhisperer (integrating into Amazon Q Developer) provides inline suggestions within an AWS-centric stack; Code Llama, WizardLM, and Stable Code represent open-source and code-specialized model families for on-premise or edge inference; Phind targets developer search and multimodal retrieval; AskCodi provides an OpenAI-compatible API layer and routing for hybrid or multi-provider deployments.

Important evaluation axes include multimodal reasoning, code generation fidelity, latency and cost trade-offs, instruction-following robustness, safety/alignment behavior, and the ability to run at the edge or behind enterprise controls. Practical trends to watch are specialization (code-focused models), model routing and orchestration layers, continuous GenAI test automation to catch regressions, and the maturation of open-source alternatives for privacy-sensitive or edge scenarios. For buyers and builders, the right choice depends on integration needs (IDE/agent support), governance constraints, cost profile, and whether you require on-device/edge inference or cloud-hosted latest-capability models. This comparison frames those trade-offs and the tools that operationalize them.
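To make the routing trade-off concrete, here is a minimal sketch of how a routing layer might pick a model from deployment constraints. Everything in it is an assumption for illustration: the `ModelOption` and `Requirements` types, the `route` function, the catalog entries, and all cost, latency, and quality figures are hypothetical and do not describe any product named above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical label, not a real product SKU
    on_prem: bool              # can run at the edge or behind enterprise controls
    cost_per_1k_tokens: float  # illustrative figure only
    p50_latency_ms: int        # illustrative figure only
    code_quality: float        # score from your own evaluation suite, 0..1


@dataclass(frozen=True)
class Requirements:
    require_on_prem: bool = False
    max_cost_per_1k_tokens: Optional[float] = None
    max_latency_ms: Optional[int] = None


def route(options: List[ModelOption], req: Requirements) -> Optional[ModelOption]:
    """Return the highest-scoring model that satisfies every hard constraint."""
    candidates = [
        o for o in options
        if (o.on_prem or not req.require_on_prem)
        and (req.max_cost_per_1k_tokens is None
             or o.cost_per_1k_tokens <= req.max_cost_per_1k_tokens)
        and (req.max_latency_ms is None
             or o.p50_latency_ms <= req.max_latency_ms)
    ]
    if not candidates:
        return None  # no model fits; the caller decides how to relax constraints
    return max(candidates, key=lambda o: o.code_quality)


# Made-up catalog: one hosted model, one open model, one small edge model.
CATALOG = [
    ModelOption("hosted-frontier", False, 0.010, 900, 0.90),
    ModelOption("open-code-model", True, 0.002, 400, 0.75),
    ModelOption("edge-small", True, 0.0005, 120, 0.60),
]

if __name__ == "__main__":
    choice = route(CATALOG, Requirements(require_on_prem=True))
    print(choice.name if choice else "no model satisfies the constraints")
```

The sketch treats governance, cost, and latency as hard filters and uses a quality score only to break ties among the models that pass, which mirrors the idea that governance constraints narrow the field before capability is compared. A real routing layer would likely also weigh per-request signals such as task type or context length.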

Tool Rankings – Top 6

AI-first code editor and assistant by Anysphere embedding AI across editor, agents, CLI and web workflows.

An AI pair programmer that gives code completions, chat help, and autonomous agent workflows across editors, the terminal

AI-driven coding assistant (now integrated with/rolling into Amazon Q Developer) that provides inline code suggestions,

Code-specialized Llama family from Meta optimized for code generation, completion, and code-aware natural-language tasks

Open-source family of instruction-following LLMs (WizardLM/WizardCoder/WizardMath) built with Evol-Instruct, focused on

Edge-ready code language models for fast, private, and instruction‑tuned code completion.

Latest Articles (48)

Adobe nears a $1.9 billion deal to acquire Semrush, expanding its marketing software capabilities, according to WSJ reports.

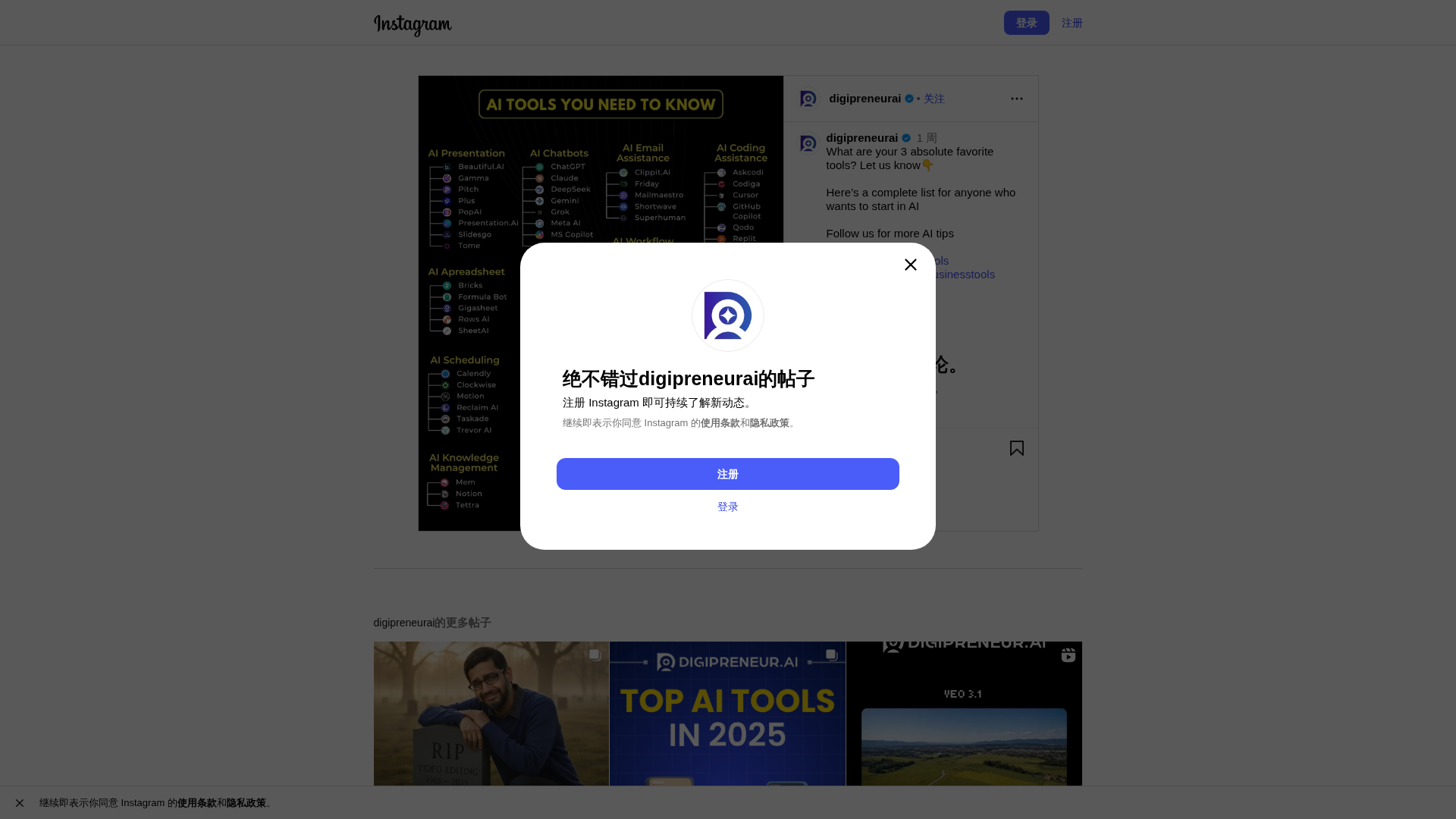

Discover an OpenAI-compatible API with custom models powering Continue.dev, Cline, and Codex-driven workflows.

A concise overview of a new OpenAI-compatible API enabling custom models across Continue.dev, Cline, and OpenAI Codex.