Topic Overview

This topic covers the intersection of inference hardware and on‑premise AI platforms, from third‑generation accelerators (Groq‑3) and Tesla/custom AI chips to server and edge deployments that run private models. Demand for low‑latency, privacy‑preserving inference has pushed enterprises and developer‑tooling providers to adopt dedicated accelerators and rack‑scale solutions that keep sensitive workloads on‑prem or at the edge. Three forces drive that shift: regulatory scrutiny, the cost trade‑offs of high‑throughput workloads, and advances in quantization, sparsity and compiler toolchains that let large models run efficiently in constrained environments.

Key categories and tools: Stable Code provides compact, edge‑ready, instruction‑tuned code models for fast, private code completion close to developers. Windsurf (formerly Codeium) packages multi‑model support and agentic IDE features that benefit from localized inference, which helps maintain developer flow. Tabnine and Tabby illustrate enterprise and open‑source approaches to private, self‑hosted coding assistants: Tabnine emphasizes governance and managed private deployments, while Tabby provides local‑first model serving and IDE extensions. Qodo (formerly Codium) focuses on code quality, test generation and SDLC governance, work that often requires multi‑repo context and predictable on‑prem inference.

Together, these trends point to a move toward decentralized AI infrastructure: purpose‑built chips and server designs reduce inference cost and latency, while self‑hosted developer tools prioritize data control and observability. Evaluations should consider accelerator compatibility, model compression support, orchestration and lifecycle tooling, and how well vendor stacks integrate with self‑hosted coding platforms and enterprise governance requirements.

Tool Rankings – Top 5

Edge-ready code language models for fast, private, and instruction‑tuned code completion.

AI-native IDE and agentic coding platform (Windsurf Editor) with Cascade agents, live previews, and multi-model support.

Enterprise-focused AI coding assistant emphasizing private/self-hosted deployments, governance, and context-aware code.

Open-source, self-hosted AI coding assistant with IDE extensions, model serving, and local-first/cloud deployment.

Quality-first AI coding platform for context-aware code review, test generation, and SDLC governance across multi-repo codebases and teams.

Latest Articles (35)

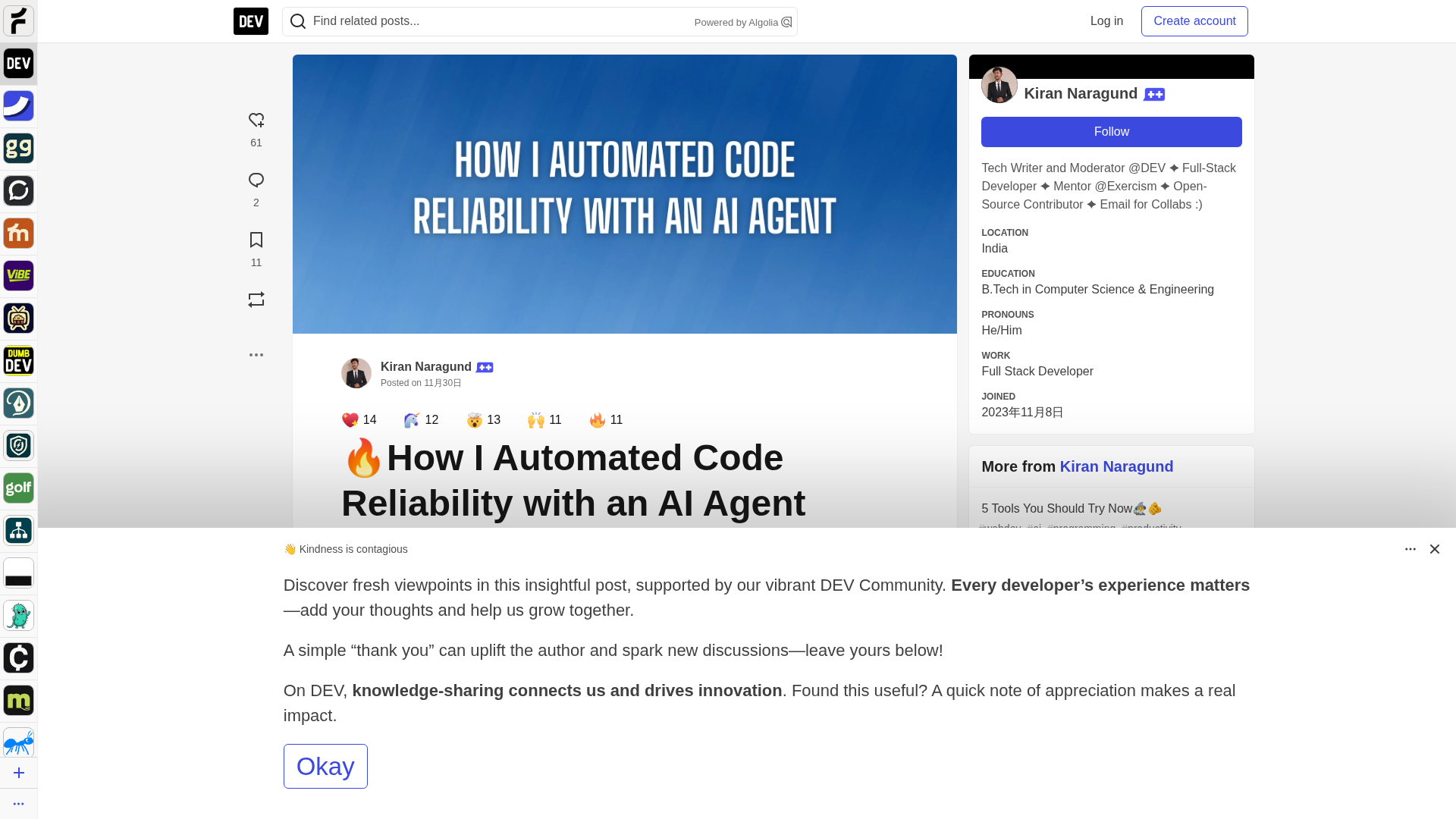

A step-by-step guide to building an AI-powered Reliability Guardian that reviews code locally and in CI with Qodo Command.

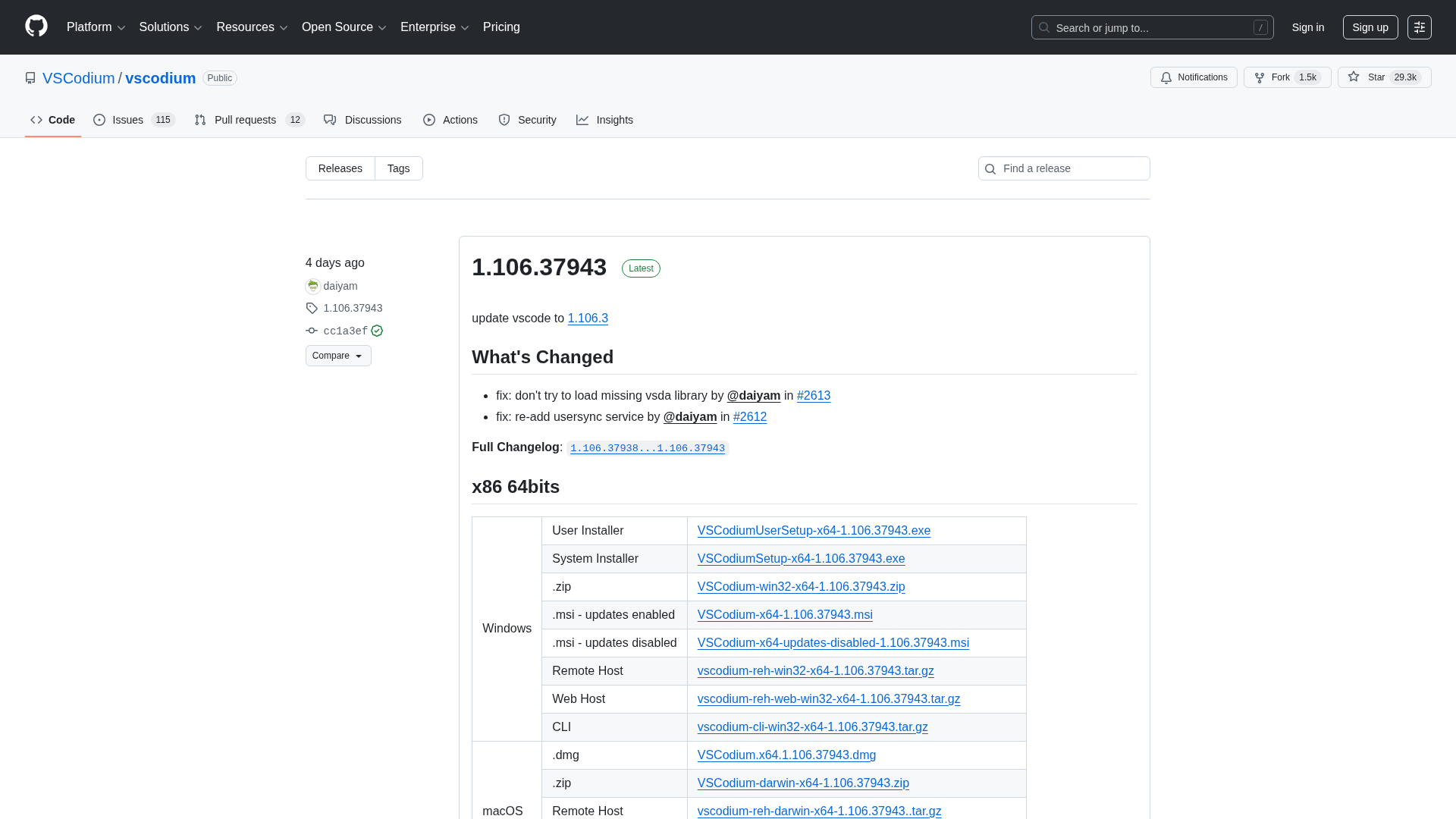

A comprehensive releases page for VSCodium with multi-arch downloads and versioned changelogs across 1.104–1.106 revisions.

A developer chronicles switching to Zed on Linux, prototyping on a phone, and a late-night video correction.

Qodo is ranked highest by Gartner for Codebase Understanding, highlighting cross-repo context as essential for scalable AI development.

Context-aware, enterprise-grade AI code review that scales across multi-repo ecosystems and enforces policies.