Topic Overview

Local-execution LLM assistants are AI systems that run models or agent logic on a user’s own hardware or directly interact with local tools and files, rather than relying exclusively on cloud-only inference. This category spans in‑IDE copilots (JetBrains AI Assistant), open-source code specialists (CodeGeeX, Code Llama), autonomous-agent runtimes (AutoGPT), engineering frameworks (LangChain), and no‑code/low‑code agent builders and marketplaces (Lindy, MindStudio, Anakin.ai).

The topic is timely in 2026 because hardware advances, model quantization, and smaller task-specialized models have made local inference and hybrid local/cloud workflows practical for more users. Organizations are balancing latency, offline capability, data residency, and cost control against the operational complexity of hosting and governing models. Agent frameworks and marketplaces now focus on safe tool access, state management, and discoverability, letting practitioners compose tool-using agents that call local binaries, IDEs, or enterprise data stores while enforcing governance and reproducibility.

Key tool roles: CodeGeeX and Code Llama provide code-focused generation that can be integrated into local developer workflows; JetBrains AI Assistant embeds context-aware code help inside IDEs; LangChain and AutoGPT underpin agent orchestration and tool chaining; Lindy, MindStudio, and Anakin.ai lower the barrier to build, deploy, and govern agents via no‑code/low‑code interfaces or curated app libraries.

Adoption trade-offs include hardware and security requirements, update and model management, and the need for standardized evaluation and controls. Teams evaluating local-execution assistants should weigh compute and integration costs against privacy, latency, and customization benefits, and prioritize platforms that support governance, modular tools, and reproducible deployment paths.
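As a rough illustration of what "safe tool access" to local binaries can look like, the sketch below gates every tool call through an allowlist and records each request in an audit log. It is not taken from any of the platforms named above: the names `ALLOWED_TOOLS`, `audit_log`, and `run_tool` are hypothetical, and the only allowlisted command (the current Python interpreter's `--version`) is chosen purely so the example runs anywhere.

```python
import subprocess
import sys

# Hypothetical allowlist: tool name -> fixed command line the agent may run.
ALLOWED_TOOLS = {
    "python_version": [sys.executable, "--version"],
}

# In-memory record of every tool request (assumption: a real system
# would persist this somewhere durable for governance and reproducibility).
audit_log = []


def run_tool(name):
    """Run an allowlisted local tool and log the call; reject anything else."""
    if name not in ALLOWED_TOOLS:
        audit_log.append({"tool": name, "allowed": False})
        raise PermissionError(f"tool not allowlisted: {name}")

    argv = ALLOWED_TOOLS[name]
    # No shell is involved, so the agent cannot inject extra commands.
    result = subprocess.run(argv, capture_output=True, text=True, timeout=30)
    audit_log.append(
        {"tool": name, "argv": argv, "allowed": True, "returncode": result.returncode}
    )
    return result.stdout.strip() or result.stderr.strip()
```

Calling `run_tool("python_version")` returns the interpreter's version string, while a request for any name outside the allowlist raises `PermissionError`; both outcomes are appended to `audit_log`. Keeping the command lines fixed in the allowlist, rather than accepting arbitrary arguments from the model, is one simple way to trade flexibility for control.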

Tool Rankings – Top 6

AI-based coding assistant for code generation and completion (open-source model and VS Code extension).

Code-specialized Llama family from Meta optimized for code generation, completion, and code-aware natural-language tasks.

A no-code AI platform with 1000+ built-in AI apps for content generation, document search, automation, batch processing,

In‑IDE AI copilot for context-aware code generation, explanations, and refactorings.

Platform to build, deploy and run autonomous AI agents and automation workflows (self-hosted or cloud-hosted).

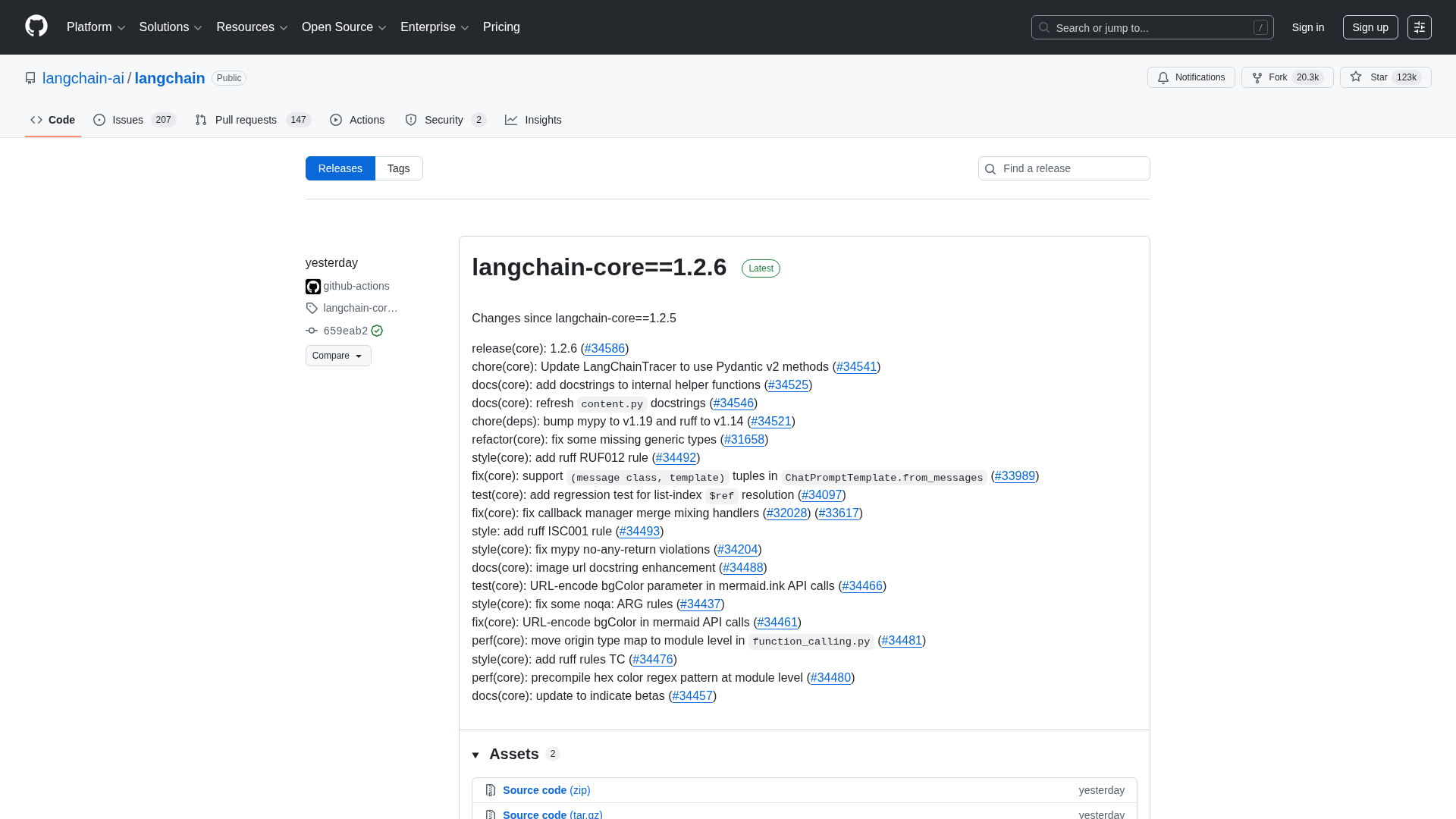

Engineering platform and open-source frameworks to build, test, and deploy reliable AI agents.

Latest Articles (48)

A comprehensive LangChain releases roundup detailing Core 1.2.6 and interconnected updates across XAI, OpenAI, Classic, and tests.

A quick preview of POE-POE's pros and cons as seen in G2 reviews.

Meta rolls out Facebook Content Protection to detect stolen Reels and give creators options to block, track, or claim across Facebook and Instagram.
