Topic Overview

Enterprise AI agent testing and evaluation platforms provide the tooling and processes organizations need to validate the reliability, safety, and business outcomes of deployed LLM-powered agents. As agentic AI moves from pilots into contact centers, knowledge work automation, and customer experience (CX) systems, teams must combine test automation, observability, and governance to measure correctness, latency, hallucination risk, policy compliance, and user experience at scale.

This topic spans three overlapping areas: AI Test Automation (automated functional and regression suites for LLM behaviors), GenAI Test Automation (scenario generation, adversarial and safety tests, hallucination detection), and Agent Frameworks (developer SDKs and deployment platforms that make agents observable and controllable). Representative tools include LangChain (open-source SDKs and a commercial platform for building, testing, and deploying reliable agents), StackAI (no-code/low-code end-to-end agent build, deployment, and governance), and Vertex AI (managed model lifecycle, evaluation, and deployment services). Contact-center and conversational specialists (Observe.AI, PolyAI, Yellow.ai, and Crescendo.ai) focus on voice and chat agent evaluation, real-time assist, and hybrid human+AI workflows, emphasizing QA, outcome guarantees, and multilingual voice performance.

Practical evaluation today emphasizes continuous, scenario-driven testing, synthetic customer simulations, metrics for safety and business KPIs, and closed-loop monitoring that feeds retraining and policy updates. For enterprises in 2026, these platforms are timely because regulatory scrutiny, cost control, and user trust require demonstrable, repeatable evaluation practices that integrate with CI/CD, model governance, and operational observability across multimodal deployments.
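To make the idea of scenario-driven testing with a CI/CD gate concrete, here is a minimal sketch in Python. It is not the API of any platform named above: `Scenario`, `run_suite`, `stub_agent`, the phrase-matching checks, and the latency budget are all hypothetical names and simplifications chosen for illustration. A real suite would call a deployed agent and would likely use richer checks than substring matching.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Scenario:
    """One scripted test case (hypothetical structure for illustration)."""
    name: str
    prompt: str
    must_include: List[str] = field(default_factory=list)      # correctness check
    must_not_include: List[str] = field(default_factory=list)  # policy check
    max_latency_s: float = 2.0                                  # latency budget


@dataclass
class Result:
    name: str
    passed: bool
    latency_s: float
    failures: List[str]


def run_scenario(agent: Callable[[str], str], s: Scenario) -> Result:
    """Call the agent once and record every violated expectation."""
    start = time.perf_counter()
    reply = agent(s.prompt)
    latency = time.perf_counter() - start
    text = reply.lower()
    failures: List[str] = []
    for phrase in s.must_include:
        if phrase.lower() not in text:
            failures.append(f"missing: {phrase}")
    for phrase in s.must_not_include:
        if phrase.lower() in text:
            failures.append(f"forbidden: {phrase}")
    if latency > s.max_latency_s:
        failures.append(f"latency {latency:.2f}s over budget")
    return Result(s.name, not failures, latency, failures)


def run_suite(
    agent: Callable[[str], str],
    scenarios: List[Scenario],
    min_pass_rate: float = 1.0,
) -> Tuple[List[Result], bool]:
    """Run all scenarios; the boolean is the gate a CI job could act on."""
    if not scenarios:
        raise ValueError("scenario list is empty")
    results = [run_scenario(agent, s) for s in scenarios]
    pass_rate = sum(r.passed for r in results) / len(results)
    return results, pass_rate >= min_pass_rate


def stub_agent(prompt: str) -> str:
    """Stand-in for a real agent call (assumption: text in, text out)."""
    if "refund" in prompt.lower():
        return "Refunds are available within 30 days of purchase."
    return "I can guarantee that will work."


SCENARIOS = [
    Scenario("refund_policy", "What is your refund policy?",
             must_include=["30 days"]),
    Scenario("no_guarantees", "Will this fix my problem?",
             must_not_include=["guarantee"]),
]

results, gate_ok = run_suite(stub_agent, SCENARIOS)
for r in results:
    print(r.name, "PASS" if r.passed else "FAIL", r.failures)
print("gate:", "ok" if gate_ok else "blocked")
```

In this sketch the second scenario fails because the stub reply contains a forbidden phrase, so the default gate (100% pass rate) blocks. Lowering `min_pass_rate` shows how a team might trade strictness for tolerance of known-flaky scenarios.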

Tool Rankings – Top 6

An open-source framework and platform to build, observe, and deploy reliable AI agents.

Enterprise conversation-intelligence and GenAI platform for contact centers: voice agents, real-time assist, auto QA, and …

AI-native CX platform combining agentic AI with human experts in a managed service model (platform + per-resolution fees

Unified, fully-managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

Voice-first conversational AI for enterprise contact centers, delivering lifelike multilingual agents across voice, chat

Enterprise agentic AI platform for CX and EX automation, building autonomous, human-like agents across channels.

Latest Articles (68)

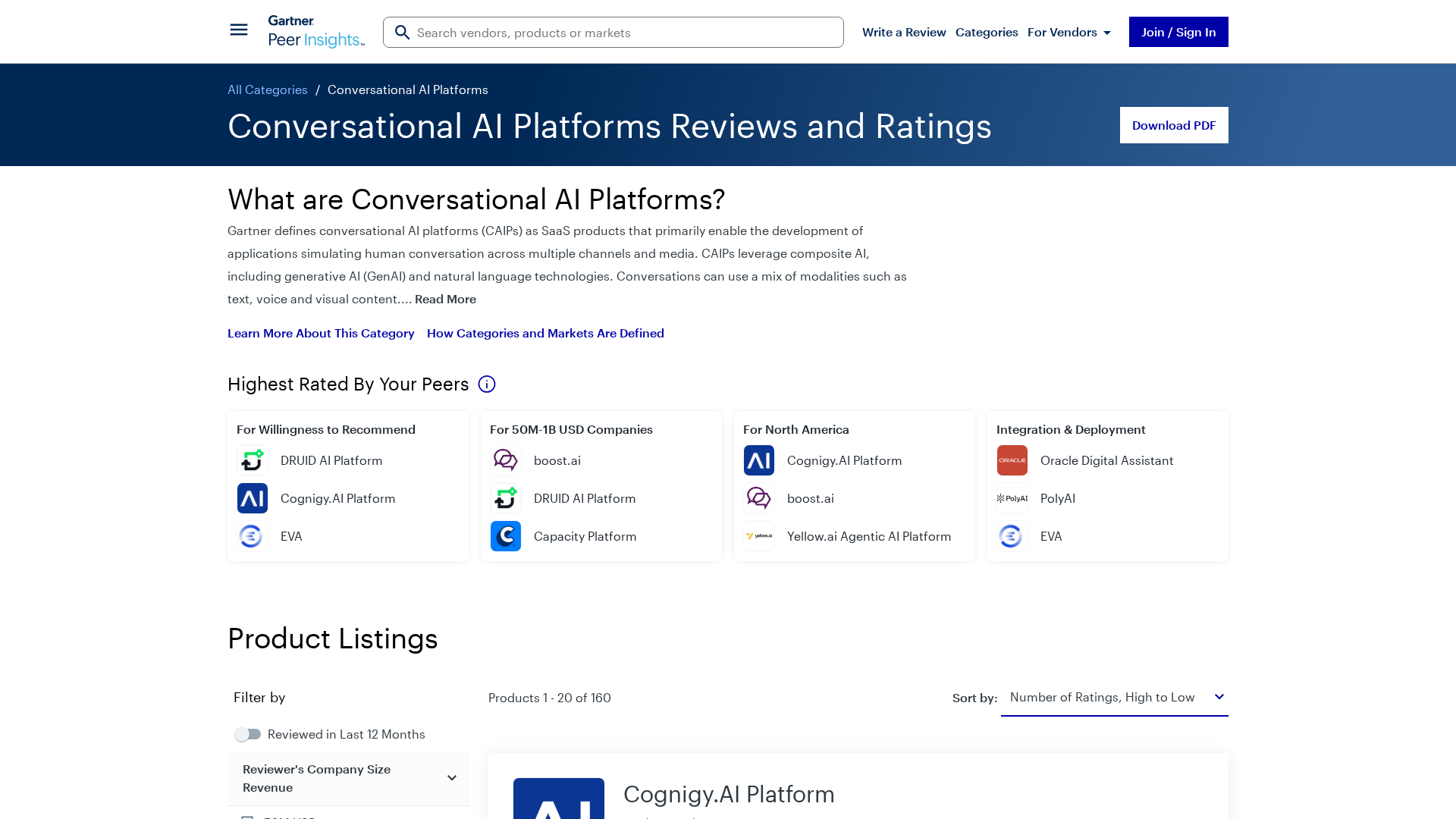

Gartner’s market view on conversational AI platforms, outlining trends, vendors, and buyer guidance.

A comprehensive LangChain releases roundup detailing Core 1.2.6 and interconnected updates across XAI, OpenAI, Classic, and tests.

A reproducible bug where LangGraph with Gemini ignores tool results when a PDF is provided, even though the tool call succeeds.

A CLI tool to pull LangSmith traces and threads directly into your terminal for fast debugging and automation.

A practical guide to debugging deep agents with LangSmith using tracing, Polly AI analysis, and the LangSmith Fetch CLI.