Topic Overview

This topic examines universal on-device AI SDKs, which support both inference and local training on edge devices, and compares QVAC SDK-style toolchains with alternatives focused on code models, self-hosted assistants, and platform integrations. On-device SDKs are increasingly relevant in 2026: organizations want lower-latency AI, stronger data privacy, and offline capability, while model architectures and tool ecosystems have shifted toward smaller, optimized model families suited to mobile and embedded training and inference.

Key components discussed include compact code-specialized LLMs (Stable Code, Code Llama, Salesforce CodeT5) used for local code completion and reasoning; open instruction-tuned models (nlpxucan/WizardLM variants) for assistant-style tasks; developer tooling that preserves project context on-device (EchoComet); and enterprise-focused assistants that prioritize governance and self-hosting (Tabnine). These tools illustrate the trade-offs between model size, accuracy, and resource requirements that a universal SDK must manage.

We also place SDKs in the broader ecosystem: Edge AI Vision Platforms supply optimized runtimes and hardware acceleration; Agent Frameworks orchestrate on-device chains and actions; AI Data Platforms collect and curate labeled edge data for continual learning; and AI Tool Marketplaces distribute model assets and extensions.

Practical considerations include cross-platform runtimes, quantization and pruning support, differential-privacy or federated-learning hooks, and integration with existing CI/CD and governance pipelines. This overview aims to help engineers and product leaders assess whether a QVAC-style universal on-device SDK or a composable alternative better fits constraints such as privacy, offline operation, hardware diversity, and the need for ongoing on-device adaptation.
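The trade-off between model size and resource requirements can be made concrete with some back-of-the-envelope arithmetic. The sketch below is illustrative only: the function names, the 3-billion-parameter model, and the 2 GiB budget are hypothetical and are not taken from any SDK mentioned here. It estimates weight storage alone and ignores activations, caches, and runtime overhead, so real memory use will be higher.

```python
def weight_footprint_bytes(num_params: int, bits_per_weight: int) -> int:
    """Approximate bytes needed to store the weights alone.

    Assumption: every weight uses the same bit width. Activations,
    caches, and runtime overhead are deliberately ignored.
    """
    if num_params < 0 or bits_per_weight <= 0:
        raise ValueError("num_params must be >= 0 and bits_per_weight > 0")
    # Round up to a whole number of bytes.
    return (num_params * bits_per_weight + 7) // 8


def fits_on_device(num_params: int, bits_per_weight: int, budget_bytes: int) -> bool:
    """Return True if the weights alone fit within a (hypothetical) memory budget."""
    return weight_footprint_bytes(num_params, bits_per_weight) <= budget_bytes


if __name__ == "__main__":
    params = 3_000_000_000   # hypothetical 3B-parameter model
    budget = 2 * 2**30       # hypothetical 2 GiB budget for weights
    for bits in (16, 8, 4):
        gib = weight_footprint_bytes(params, bits) / 2**30
        verdict = "fits" if fits_on_device(params, bits, budget) else "too large"
        print(f"{bits:>2}-bit weights: {gib:.2f} GiB ({verdict})")
```

Under these assumptions, halving the bit width halves the weight footprint, which is why quantization support matters when targeting diverse edge hardware; the accuracy cost of lower precision is not modeled here and has to be measured per model.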

Tool Rankings – Top 6

Stable Code: Edge-ready code language models for fast, private, instruction-tuned code completion.

Code Llama: Code-specialized Llama family from Meta, optimized for code generation, completion, and code-aware natural-language tasks.

WizardLM: Open-source family of instruction-following LLMs (WizardLM/WizardCoder/WizardMath) built with Evol-Instruct, focused on

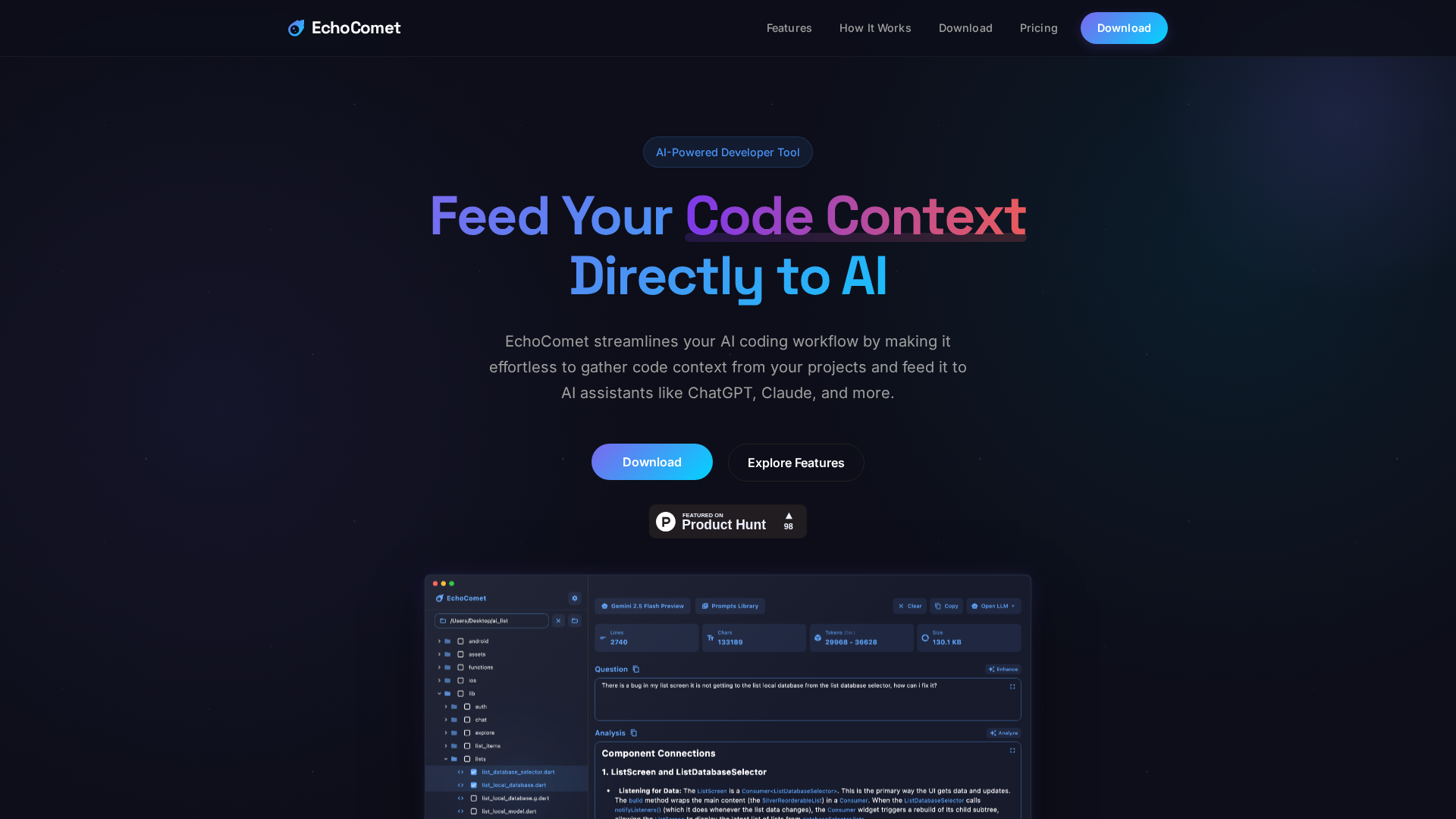

EchoComet: Feed your code context directly to AI.

Tabnine: Enterprise-focused AI coding assistant emphasizing private/self-hosted deployments, governance, and context-aware code.

Salesforce CodeT5: Official research release of CodeT5 and CodeT5+ (open encoder-decoder code LLMs) for code understanding and generation.

Latest Articles (35)

EchoComet's contact page provides fast support, license recovery, and device limits for macOS.

EchoComet lets you gather code context locally and feed it to AI with large-context prompts for smarter, private AI assistance.
