Topic Overview

This topic covers the evolving ecosystem of AI infrastructure and GPU cloud providers, focusing on how hardware partnerships, capacity expansion and new accelerator architectures are changing where and how models run. Demand for GPU compute remains high, prompting cloud vendors to expand capacity and collaborate with chipmakers and regional partners to secure supply and optimize performance. At the same time, decentralized and on-premises approaches are gaining traction as teams balance cost, latency, governance and energy use.

Key tool and platform categories include: specialized inference accelerators (e.g., Rebellions.ai’s chiplet/SoC and GPU-class software stack for energy-efficient high-throughput inference), cloud orchestration and DevOps tooling (StationOps as an AI DevOps assistant for AWS), self-hosted model serving and IDE integrations (Tabby), agent and application frameworks (LangChain), and autonomous agent platforms (AutoGPT). Together these address different layers: hardware and firmware, runtime and orchestration, developer workflows, and data/agent platforms.

Current trends to watch: cloud providers expanding physical GPU capacity and forming deeper partnerships with accelerator vendors to avoid supply chokepoints; diversification toward purpose-built accelerators and energy-efficient inference hardware to lower TCO; increased adoption of decentralized, local-first deployments for latency, privacy and cost control; and tighter integration between AI data platforms and runtime stacks to streamline training, fine-tuning and inference.

Practically, organizations must evaluate tradeoffs among raw cloud GPU availability, specialized on-prem hardware, and the operational tooling required to deploy, observe and govern models across hybrid environments. This area is technical and rapidly changing; decisions hinge on workload profiles (training vs. inference), energy and cost constraints, and the maturity of orchestration and data platform ecosystems.
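The cost side of the tradeoff described above can be roughed out with a few lines of arithmetic. The following is a minimal sketch, not a sizing tool: the `Deployment` class, the `cost_per_million_tokens` helper and every number in it (prices, power draw, throughput, utilization) are hypothetical placeholders, to be replaced with measurements from your own workload and quotes from your own vendors.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """One way of serving inference. All figures below are illustrative."""
    name: str
    hourly_cost_usd: float    # rental price, or amortized hardware cost per hour
    power_kw: float           # average power you pay for (0 if bundled in rental)
    tokens_per_second: float  # sustained throughput on your workload
    utilization: float        # fraction of each hour spent doing useful work


def cost_per_million_tokens(d: Deployment, usd_per_kwh: float) -> float:
    """Return USD per one million generated tokens, including energy."""
    tokens_per_hour = d.tokens_per_second * 3600 * d.utilization
    hourly_total = d.hourly_cost_usd + d.power_kw * usd_per_kwh
    return hourly_total / tokens_per_hour * 1_000_000


# Hypothetical inputs, chosen only to show the calculation.
cloud = Deployment("cloud GPU (hypothetical)", 4.00, 0.0, 2500, 0.40)
onprem = Deployment("on-prem accelerator (hypothetical)", 1.20, 0.6, 1800, 0.70)

for d in (cloud, onprem):
    cost = cost_per_million_tokens(d, usd_per_kwh=0.12)
    print(f"{d.name}: ${cost:.2f} per 1M tokens")
```

Utilization is modeled explicitly because it often dominates the comparison: a faster device that sits idle most of the hour can cost more per token than a slower one kept busy. The sketch leaves out staffing, networking and orchestration overhead, which also belong in a full TCO estimate.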

Tool Rankings – Top 5

Rebellions.ai: Energy-efficient AI inference accelerators and software for hyperscale data centers.

StationOps: The AI DevOps Engineer for AWS


Tabby: Open-source, self-hosted AI coding assistant with IDE extensions, model serving, and local-first/cloud deployment.

LangChain: An open-source framework and platform to build, observe, and deploy reliable AI agents.

AutoGPT: Platform to build, deploy and run autonomous AI agents and automation workflows (self-hosted or cloud-hosted).

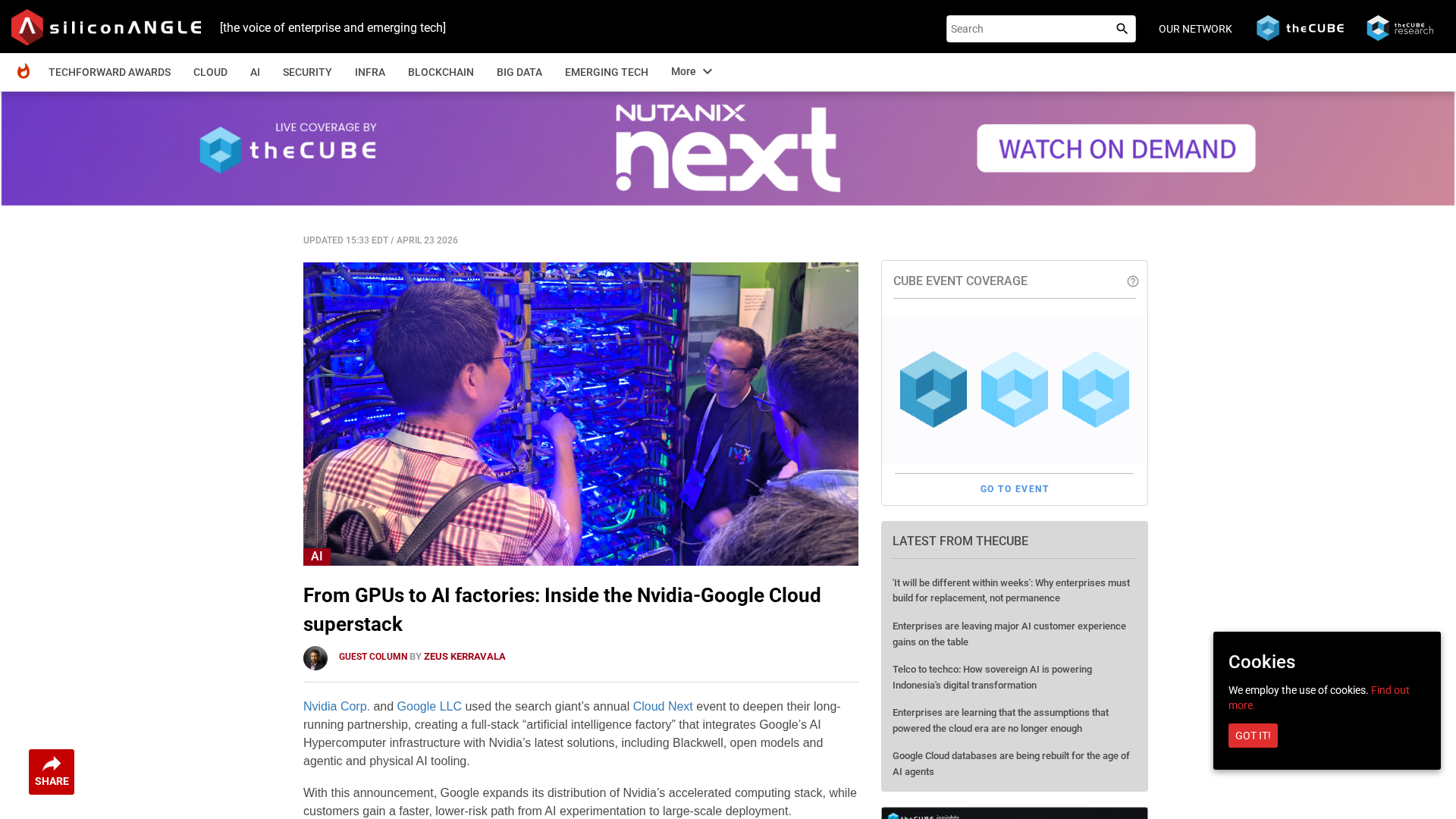

Latest Articles (31)

A concise look at how an internal developer platform on AWS accelerates delivery with governance and self-service.

A guided look at StationOps’ internal Dev Platform for AWS—enabling governed, self‑serve environments at scale.

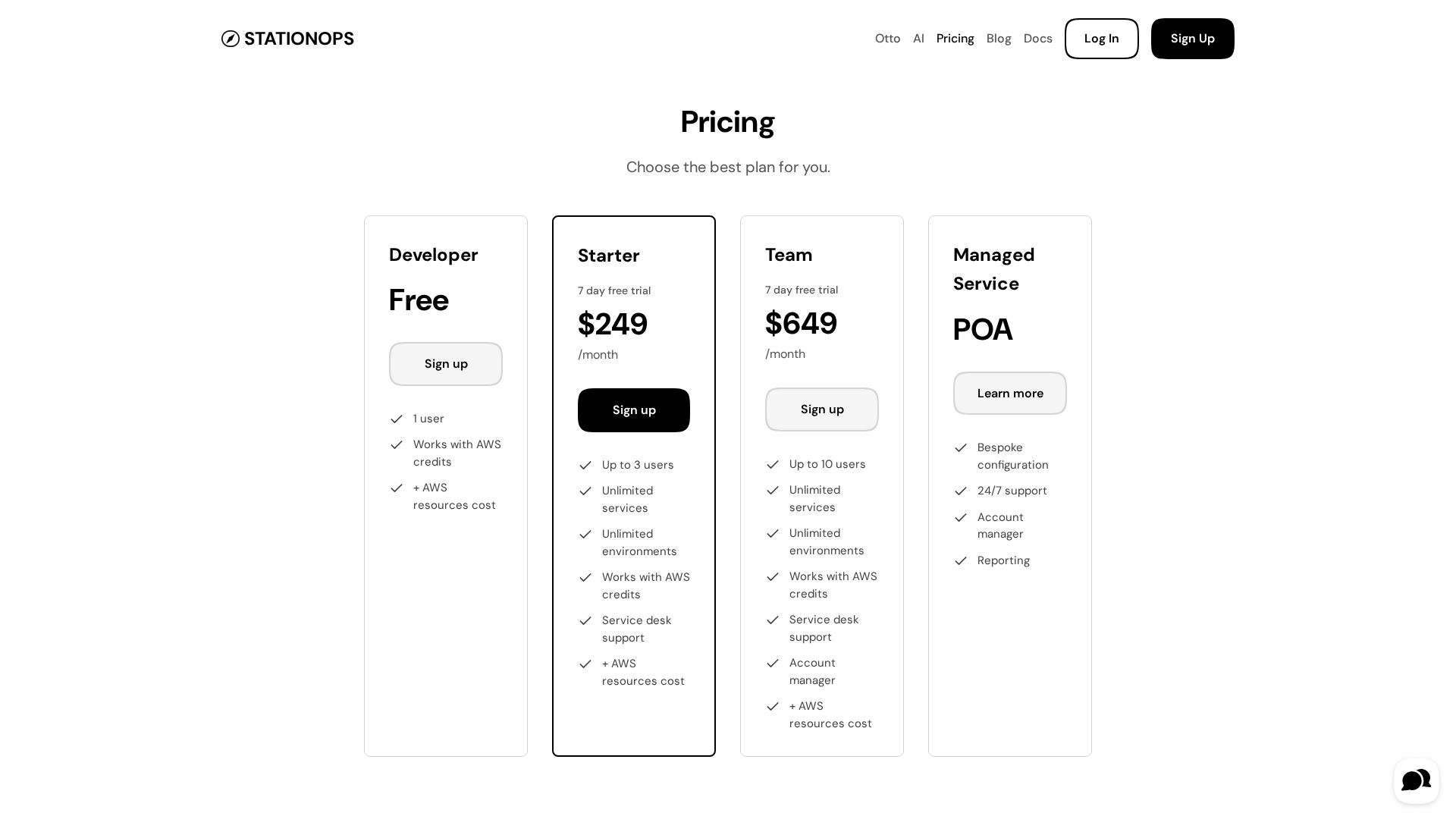

Pricing details for StationOps' AWS Internal Developer Platform, including tiers and features.

An AWS-centric internal developer platform comparison between StationOps and Netlify.

A managed internal developer platform for AWS that simplifies provisioning, deployment, and governance to accelerate software delivery.