Topic Overview

This topic covers the intersection of AI tooling and institutional finance: how model platforms, agent frameworks and AI data stacks are used to build market-making engines, trading orchestrators and market-intelligence flows for both centralized and decentralized markets (examples include market-making engines such as Hyperdrive and DEX aggregators such as 1inch). As of 2026, institutions are moving beyond proofs of concept to production AI for low-latency pricing, strategy orchestration, execution routing and regulatory reporting.

The key tool categories and their roles are AI tool marketplaces (for discovering and procuring specialized models and strategy primitives), market-intelligence tools (real-time tick data, alternative-data ingestion and signal evaluation) and AI data platforms (vector stores, document agents built on retrieval-augmented generation (RAG), and model governance).

Foundational technologies used in these stacks include Vertex AI for unified model development, training, deployment and monitoring; LangChain for building and observing LLM-driven agents and orchestrations; LlamaIndex for turning unstructured filings, research and chat logs into retrievable knowledge; AutoGPT-style orchestration for automated workflow loops; Together AI for scalable training and serverless inference; Cohere for enterprise-grade LLMs and embeddings; and no-code/low-code platforms such as StackAI to accelerate internal adoption.

Four trends are driving the topic's relevance: the rise of autonomous orchestration that coordinates signal generation, risk checks and execution; broader adoption of RAG for research and compliance; demand for explainability and model governance in regulated settings; and continued migration to hybrid deployment models (cloud, on-prem and private inference) to meet latency and privacy requirements.

This topic helps buyers and architects compare tool functions, integration patterns and operational constraints when designing AI-powered market-making and trading orchestration systems.
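The orchestration pattern mentioned above, where signal generation, risk checks and execution are coordinated in one loop, can be illustrated with a minimal pre-trade risk gate. This is a sketch under stated assumptions, not how any of the listed platforms implements orchestration: the names `Signal`, `RiskLimits`, `risk_check` and `orchestrate`, the three limit types and the `execute` callback are all hypothetical, and a production system would also need venue connectivity, real-time market data and persistent audit storage.

```python
# Illustrative sketch only: every name here is hypothetical and is not taken
# from Vertex AI, LangChain, AutoGPT or any other tool listed on this page.
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class Signal:
    """A trade idea emitted by a (hypothetical) model-driven signal generator."""
    symbol: str
    side: str          # "buy" or "sell"
    quantity: float
    confidence: float  # model confidence in [0, 1]


@dataclass
class RiskLimits:
    max_order_qty: float   # largest single order allowed
    max_position: float    # largest absolute net position per symbol
    min_confidence: float  # signals below this confidence are dropped


def signed_qty(signal: Signal) -> float:
    """Positive for buys, negative for sells."""
    return signal.quantity if signal.side == "buy" else -signal.quantity


def risk_check(signal: Signal, position: float, limits: RiskLimits) -> Optional[str]:
    """Return a rejection reason, or None if the signal passes every check."""
    if signal.confidence < limits.min_confidence:
        return "confidence below threshold"
    if signal.quantity > limits.max_order_qty:
        return "order size over limit"
    if abs(position + signed_qty(signal)) > limits.max_position:
        return "position limit breached"
    return None


def orchestrate(
    signals: List[Signal],
    positions: Dict[str, float],
    limits: RiskLimits,
    execute: Callable[[Signal], None],
) -> List[str]:
    """Gate each signal through risk checks before routing it to execution.

    Positions are updated after each execution, so later signals are checked
    against the exposure created by earlier ones.
    """
    audit_log: List[str] = []
    for sig in signals:
        reason = risk_check(sig, positions.get(sig.symbol, 0.0), limits)
        if reason is not None:
            audit_log.append(f"REJECT {sig.symbol}: {reason}")
            continue
        execute(sig)  # stand-in for an order router or venue adapter
        positions[sig.symbol] = positions.get(sig.symbol, 0.0) + signed_qty(sig)
        audit_log.append(f"EXEC {sig.side} {sig.quantity:g} {sig.symbol}")
    return audit_log


if __name__ == "__main__":
    limits = RiskLimits(max_order_qty=100, max_position=150, min_confidence=0.6)
    positions = {"ETH-USD": 0.0}
    sent: List[Signal] = []
    signals = [
        Signal("ETH-USD", "buy", 100, 0.9),  # passes
        Signal("ETH-USD", "buy", 80, 0.8),   # would push the position to 180
        Signal("ETH-USD", "sell", 50, 0.4),  # confidence too low
        Signal("ETH-USD", "sell", 60, 0.7),  # passes
    ]
    for line in orchestrate(signals, positions, limits, sent.append):
        print(line)
    print(positions)
```

Because positions are updated after each execution, the second buy is rejected against the exposure created by the first. Keeping the risk gate as a separate pure function makes it easy to unit-test, and the returned audit log is the kind of record that could feed the regulatory-reporting flows mentioned above.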

Tool Rankings – Top 6

Vertex AI: Unified, fully managed Google Cloud platform for building, training, deploying, and monitoring ML and GenAI models.

LangChain: An open-source framework and platform to build, observe, and deploy reliable AI agents.

LlamaIndex: Developer-focused platform to build AI document agents, orchestrate workflows, and scale RAG across enterprises.

AutoGPT: Platform to build, deploy and run autonomous AI agents and automation workflows (self-hosted or cloud-hosted).

Together AI: A full-stack AI acceleration cloud for fast inference, fine-tuning, and scalable GPU training.

Cohere: Enterprise-focused LLM platform offering private, customizable models, embeddings, retrieval, and search.

Latest Articles (63)

Baseten launches an AI training platform to compete with hyperscalers, promising simpler, more transparent ML workflows.

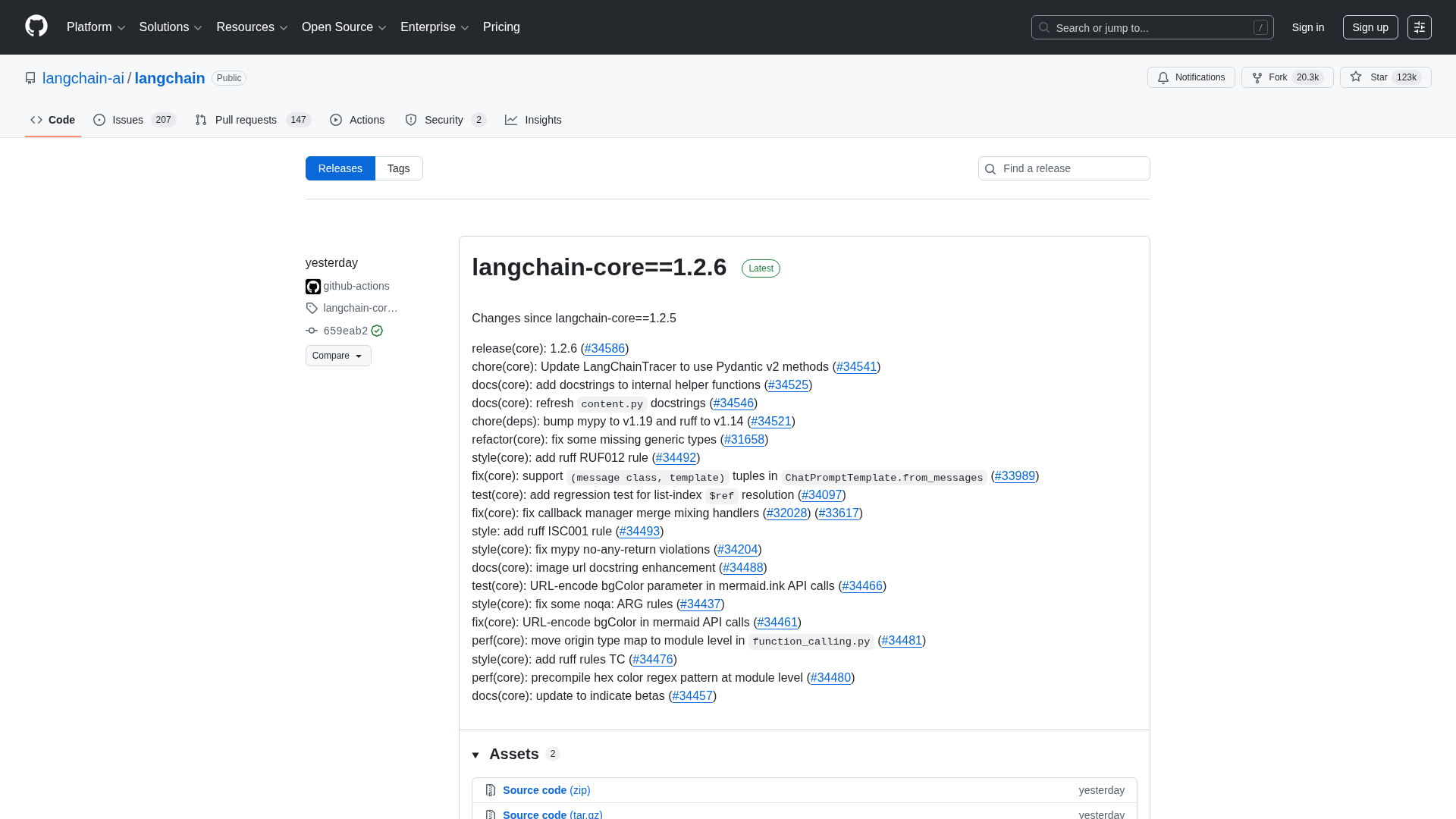

A comprehensive roundup of LangChain releases, detailing Core 1.2.6 and related updates across the xAI, OpenAI and Classic packages and the test suite.

A reproducible bug where LangGraph with Gemini ignores tool results when a PDF is provided, even though the tool call succeeds.

A practical guide to debugging deep agents with LangSmith using tracing, Polly AI analysis, and the LangSmith Fetch CLI.

A CLI tool to pull LangSmith traces and threads directly into your terminal for fast debugging and automation.