Topic Overview

This topic examines the intersection of decentralized training frameworks and the growing ecosystem of open-source models at 100B+ parameter scale, with a focus on infrastructure and AI data platforms. By 2026 the community-driven release of large code and instruction models—alongside modular tooling for retrieval, agent orchestration, and local development—has made large-scale LLMs more accessible outside hyperscaler clouds. Key components include decentralized training and orchestration patterns (model sharding, multi-party/federated training, and peer-to-peer compute pooling) that reduce single‑provider lock-in and improve data governance, and AI data platforms that manage provenance, labeling and RAG pipelines. Open-source code models and developer stacks illustrate this shift: CodeGeeX provides an open code-assistant with IDE integration; StarCoder (15.5B) demonstrates FIM-trained, opt-out-sourced code models; Code Llama is a code-specialized variant of the Llama family optimized for generation and infilling; Salesforce CodeT5/Codet5+ offer encoder–decoder architectures for code understanding and translation; and instruction-tuned families like WizardLM/WizardCoder show how community fine-tuning drives task specialization. Complementary platforms such as LlamaIndex translate unstructured content into production-grade document agents and scalable retrieval-augmented workflows, bridging model capabilities and data infrastructure. Relevance and challenges: decentralized training and open 100B+ models promise greater transparency, cheaper experimentation, and improved data control, but they raise practical hurdles — coordinated compute orchestration, reproducible data pipelines, model alignment and safety, and secure weight provenance. For teams evaluating this space, the pragmatic focus is on interoperable tooling, reproducible data platforms, and governance mechanisms that enable teams to run, fine-tune, and deploy large open models across distributed infrastructure.

Tool Rankings – Top 6

AI-based coding assistant for code generation and completion (open-source model and VS Code extension).

StarCoder is a 15.5B multilingual code-generation model trained on The Stack with Fill-in-the-Middle and multi-query ува

Code-specialized Llama family from Meta optimized for code generation, completion, and code-aware natural-language tasks

Official research release of CodeT5 and CodeT5+ (open encoder–decoder code LLMs) for code understanding and generation.

Open-source family of instruction-following LLMs (WizardLM/WizardCoder/WizardMath) built with Evol-Instruct, focused on

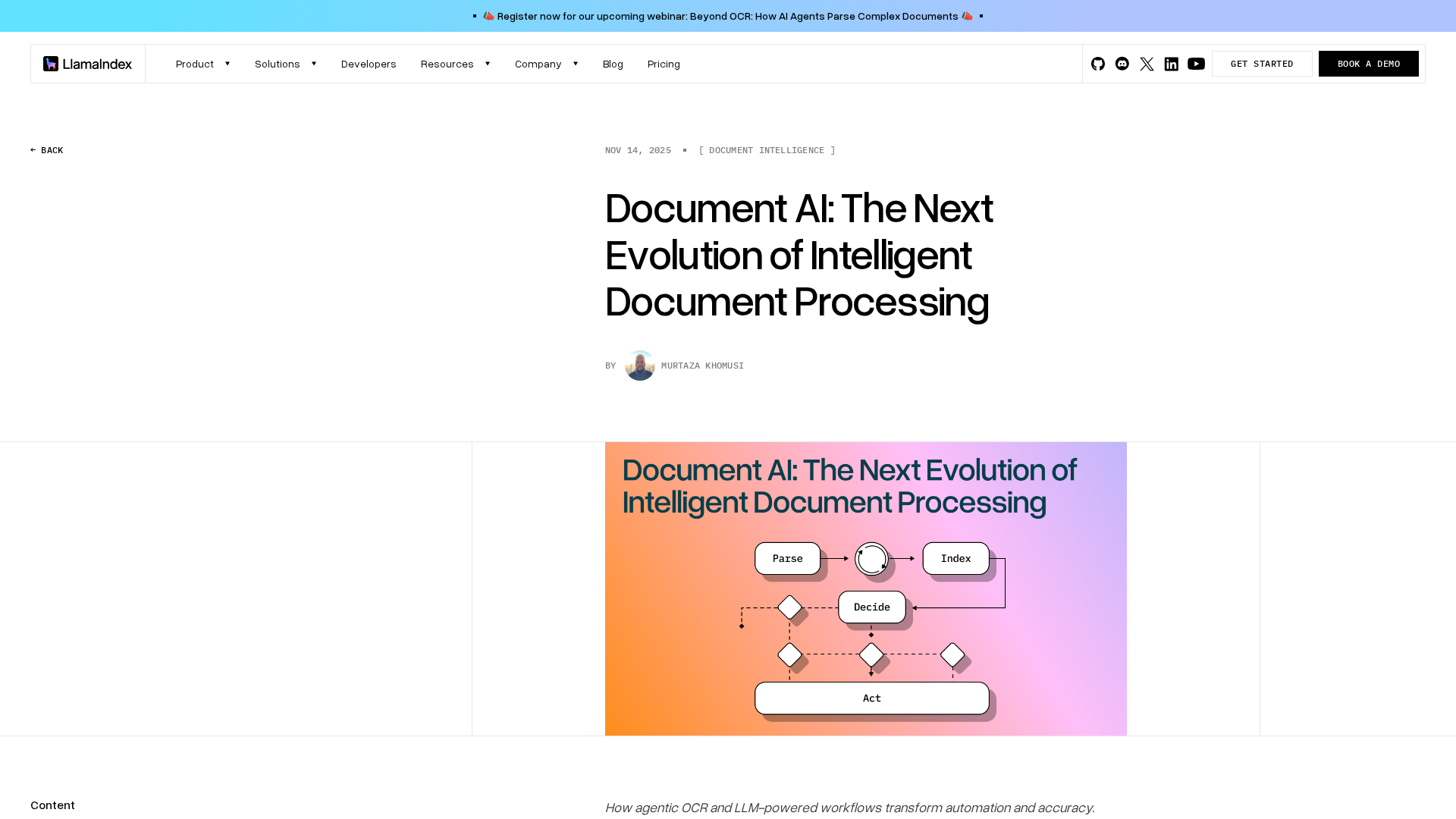

Developer-focused platform to build AI document agents, orchestrate workflows, and scale RAG across enterprises.

Latest Articles (30)

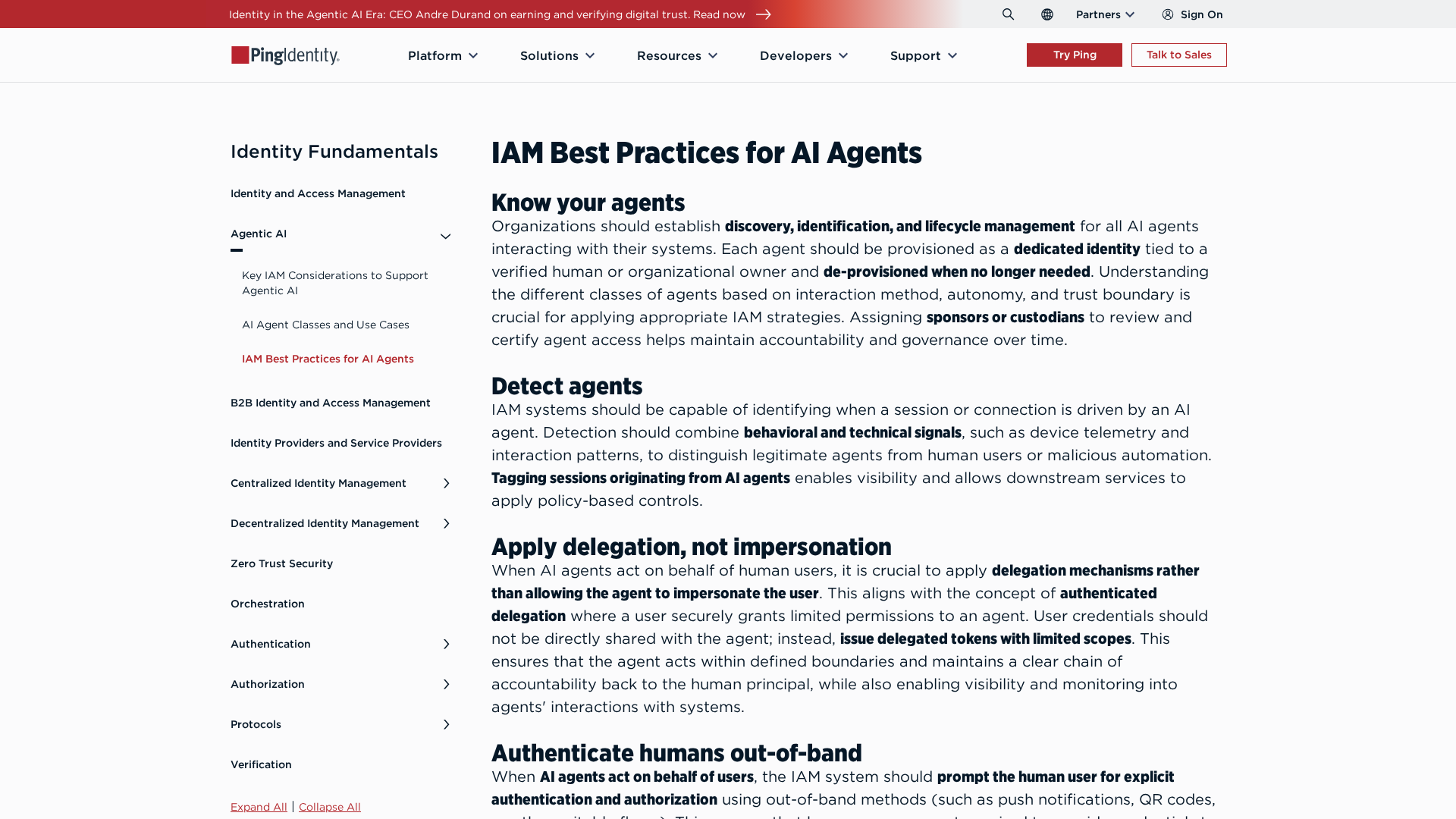

Best-practices for securing AI agents with identity management, delegated access, least privilege, and human oversight.

Adobe nears a $19 billion deal to acquire Semrush, expanding its marketing software capabilities, according to WSJ reports.

Adobe’s Semrush acquisition signals a major AI-driven shift and potential consolidation in SEO tools.

Google expands Canvas travel planning, global Flight Deals, and agentic booking to handle travel research and reservations inside Search AI Mode.

Explains how agentic OCR and LLM-powered workflows enable autonomous, high-accuracy document processing with the LlamaIndex stack.