Topic Overview

This topic examines the intersection of frontier large language models (LLMs), exemplified by models such as GPT‑5.5, Google’s Gemini family, and Anthropic’s Claude, and the enterprise platforms, marketplaces, and tooling that operationalize them. As models become more capable and multimodal, organizations are shifting from single-model experimentation to production-grade stacks that include agent frameworks, AI tool and agent marketplaces, data platforms, and GenAI test automation.

Key enterprise capabilities include managed APIs and cloud integrations (Google Gemini via Google AI/Vertex AI), enterprise virtual agents and multi-agent orchestration (IBM watsonx Assistant), no-code/low-code agent building and governance platforms (StackAI), and developer-focused coding assistants integrated into IDEs and CI workflows (GitHub Copilot, Tabnine). These tools reflect two concurrent trends: model-driven feature innovation (multimodality and autonomous agents) and platform-driven operationalization (privacy, governance, deployment topology).

For enterprises, the critical concerns are secure data handling, observability and model evaluation, versioned fine-tuning on proprietary datasets, and automated testing of prompts and agent flows. AI tool and agent marketplaces simplify discovery and procurement, while AI data platforms and GenAI test automation enable continuous validation, drift detection, and compliance. Developer-facing assistants (Copilot, Tabnine) speed up integration work and raise developer productivity, but they must be governed wherever source code and IP are involved.

In short, frontier LLMs are catalyzing a shift from point-model usage to integrated enterprise LLM platforms that combine agent frameworks, data infrastructure, marketplaces, and automated testing to deliver controlled, observable, and scalable generative AI capabilities.

Tool Rankings – Top 6

Google Gemini: Google’s multimodal family of generative AI models and APIs for developers and enterprises.

IBM watsonx Assistant: Enterprise virtual agents and AI assistants built with watsonx LLMs for no-code and developer-driven automation.

StackAI: End-to-end no-code/low-code enterprise platform for building, deploying, and governing AI agents that automate work on un…

Claude: Anthropic's Claude family of conversational and developer AI assistants for research, writing, code, and analysis.

GitHub Copilot: An AI pair programmer that gives code completions, chat help, and autonomous agent workflows across editors, the terminal…

Tabnine: Enterprise-focused AI coding assistant emphasizing private/self-hosted deployments, governance, and context-aware code.

Latest Articles (60)

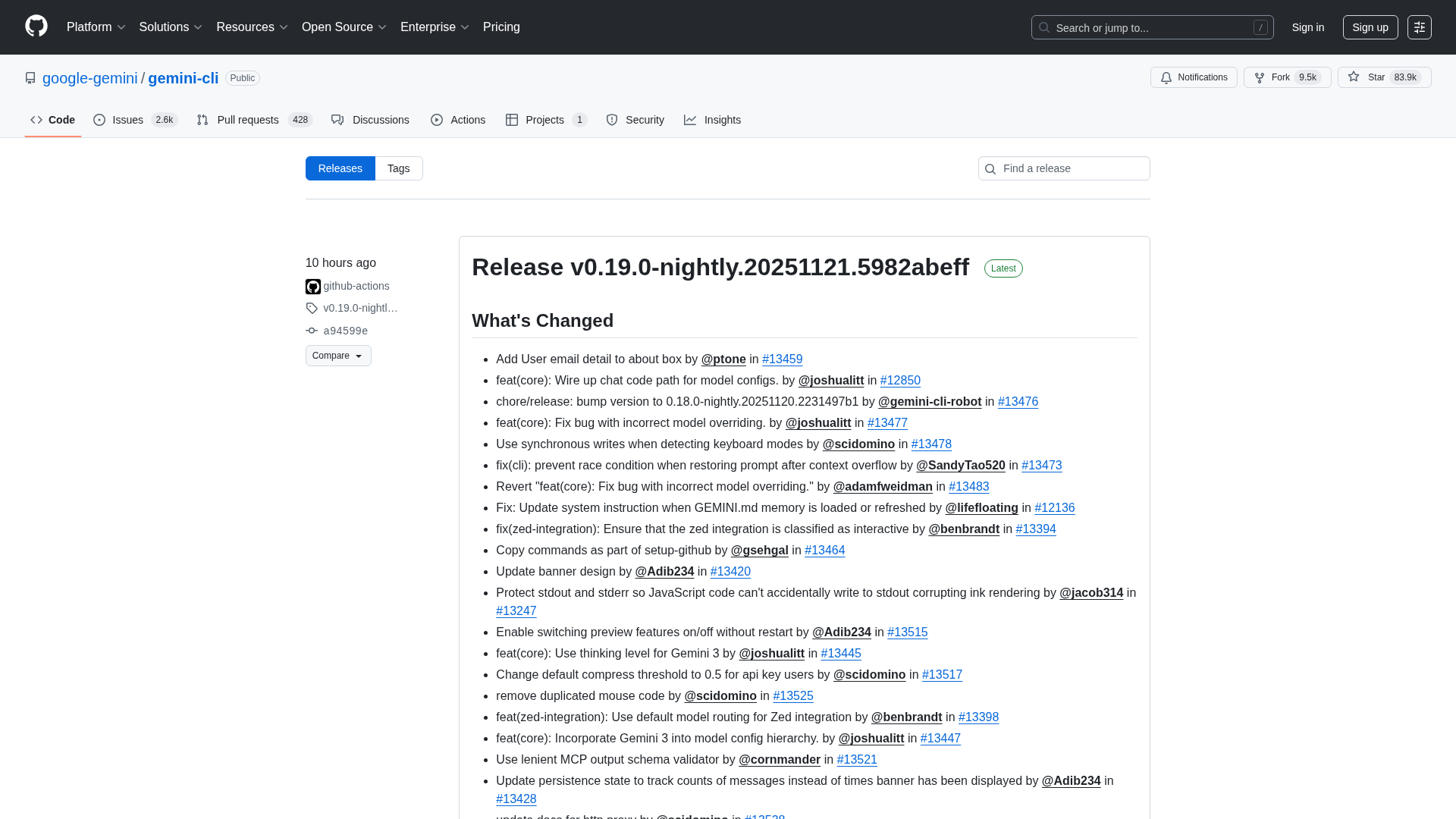

Overview of the Gemini CLI v0.36.0-preview release series, highlighting architectural, CLI, and UI changelogs across multiple pre-release versions.

A comprehensive comparison and buying guide to 14 AI governance tools for 2025, with criteria and vendor-specific strengths.

Adobe nears a $19 billion deal to acquire Semrush, expanding its marketing software capabilities, according to WSJ reports.

Wolters Kluwer expands UpToDate Expert AI with UpToDate Lexidrug to bolster drug information and medication decision support.

A practical, step-by-step guide to fine-tuning large language models with open-source NLP tools.