Topic Overview

This topic examines the practical tradeoffs when deploying machine-learning models, especially large language models and agentic systems, on major cloud providers (AWS, Azure, Google Cloud) and newer entrants such as Nebius. It sits at the intersection of Decentralized AI Infrastructure and AI Data Platforms: teams must balance compute, networking, governance and data lineage while supporting multi-model, multi-environment deployments. As of 2026, priorities driving provider choice include private/self‑hosted options for sensitive data, multi‑cloud and hybrid architectures to reduce vendor lock‑in, and integrated MLOps/LLMOps tooling for reproducibility and governance.

Key developer- and ops-facing tools shape how teams deploy and operate models: LangChain provides an SDK and orchestration patterns for building, observing and deploying LLM agents; Qodo (formerly Codium) focuses on context-aware code review, test generation and SDLC governance; Windsurf (formerly Codeium) and Cursor embed agentic features and multi-model support into developer workflows; Tabnine and Tabby offer enterprise and open-source paths for private model serving and IDE integration; GitHub Copilot accelerates developer productivity; and AutoGPT enables packaged autonomous-agent deployments.

When comparing providers, evaluate GPU/TPU hardware and region availability, networking and VPC isolation, model registry and artifact storage, autoscaling and inference latency, integration with AI data platforms for labeling/versioning/lineage, and support for self-hosted or on‑prem components. Emerging decentralized approaches and specialized providers aim to reduce data movement and offer novel cost/performance tradeoffs, but established clouds still provide the broadest ecosystem integration. This comparison helps teams choose the right combination of provider and tooling for secure, observable, and maintainable model deployment.
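One way to make the provider evaluation criteria above concrete is a simple weighted decision matrix. The sketch below is illustrative only: the criterion keys mirror the checklist in the overview, but the weights, the 1-to-5 scale, the placeholder provider names (`provider_a`, `provider_b`) and their scores are all assumptions for demonstration, not measurements of any real cloud.

```python
# Weighted decision matrix for comparing deployment providers.
# Criterion names follow the overview's checklist; the weights are
# hypothetical and should be tuned to a team's own priorities.
CRITERIA_WEIGHTS = {
    "accelerator_and_region_availability": 0.25,
    "network_and_vpc_isolation": 0.15,
    "model_registry_and_artifacts": 0.15,
    "autoscaling_and_latency": 0.20,
    "data_platform_integration": 0.15,
    "self_hosted_support": 0.10,
}


def weighted_score(scores: dict[str, float],
                   weights: dict[str, float] = CRITERIA_WEIGHTS) -> float:
    """Return the weight-normalized average of per-criterion scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank_providers(candidates: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Sort candidate providers from highest to lowest weighted score."""
    scored = [(name, weighted_score(s)) for name, s in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Placeholder scores on an assumed 1-5 scale; not real provider data.
    candidates = {
        "provider_a": {c: 4.0 for c in CRITERIA_WEIGHTS},
        "provider_b": {**{c: 3.0 for c in CRITERIA_WEIGHTS},
                       "self_hosted_support": 5.0},
    }
    for name, score in rank_providers(candidates):
        print(f"{name}: {score:.2f}")
```

Normalizing by the total weight keeps the score on the same scale as the inputs even if the weights are later edited and no longer sum to one. A matrix like this does not replace benchmarking, but it forces the team to state which criteria matter most before comparing vendors.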

Tool Rankings – Top 6

LangChain: An open-source framework and platform to build, observe, and deploy reliable AI agents.

Qodo: Quality-first AI coding platform for context-aware code review, test generation, and SDLC governance across multi-repo, team…

Windsurf: AI-native IDE and agentic coding platform (Windsurf Editor) with Cascade agents, live previews, and multi-model support.

Cursor: AI-first code editor and assistant by Anysphere embedding AI across editor, agents, CLI and web workflows.

Tabnine: Enterprise-focused AI coding assistant emphasizing private/self-hosted deployments, governance, and context-aware code.

GitHub Copilot: An AI pair programmer that gives code completions, chat help, and autonomous agent workflows across editors, the terminal…

Latest Articles (48)

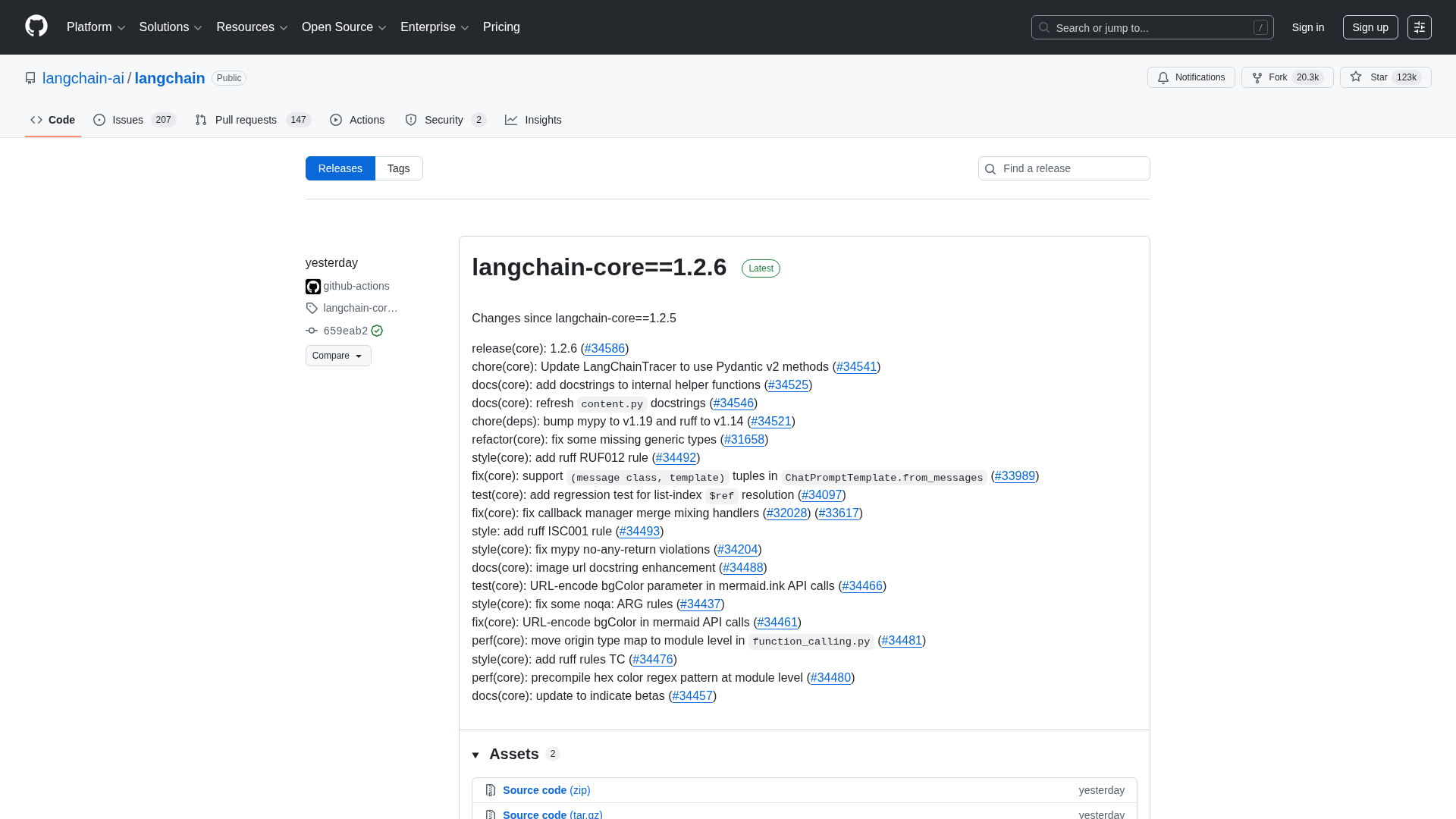

A comprehensive LangChain releases roundup detailing Core 1.2.6 and interconnected updates across XAI, OpenAI, Classic, and tests.

A reproducible bug where LangGraph with Gemini ignores tool results when a PDF is provided, even though the tool call succeeds.

A CLI tool to pull LangSmith traces and threads directly into your terminal for fast debugging and automation.

A practical guide to debugging deep agents with LangSmith using tracing, Polly AI analysis, and the LangSmith Fetch CLI.

A step-by-step guide to building an AI-powered Reliability Guardian that reviews code locally and in CI with Qodo Command.